Machine-learning-based adaptive optics maintain doughnut-shaped beams in scattering media

Using adaptive optics (AO) to compensate for the degradation of doughnut-shaped beams applied to stimulated emission-depletion (STED) microscopy, particle trapping, and other applications is not new. Recent methods include the use of deformable mirrors, microelectromechanical systems (MEMS), and spatial light modulators (SLMs) to compensate for system- and scattering-media-based aberrations.

Machine-learning- and deep-learning-based algorithms built on neural networks have also been applied to improving the focus of light beams in scattering media. However, those algorithms processed information relatively slowly, and their accuracy was limited by the small number of AO optical elements used.

Now, researchers from Zhejiang University (Hangzhou, China) have combined AO with a very large number of optical elements and a convolutional neural network (CNN) to maintain the focus of doughnut-shaped beams in scattering media with high accuracy and speed [1].

Two-orders-of-magnitude more AO

In the experiment, the researchers first generate the doughnut-shaped beam. They expand and collimate the light from a 637 nm continuous-wave (CW) laser, pass it through a 5.4-mm-diameter aperture, use a half-wave plate to generate horizontally polarized light, and split the light with a beamsplitter. Half the light is sent through an SLM that converts it to a vortex laser beam, which is then focused and converted into a doughnut-shaped intensity point-spread function (IPSF). The IPSF is then magnified and imaged onto a 0.3 Mpixel CMOS sensor.

To simulate a scattering medium, a series of random phase masks is inserted into the setup. The SLM has 512 × 512 pixels in total, but the pixels used for AO correction lie within a circle 360 pixels in diameter. That is, the AO setup uses about 360 × 360 × π/4 pixels, or a total of 101,784 optical elements, which is at least two orders of magnitude more than the number used in conventional AO applications.
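The element count can be checked with a few lines of arithmetic. The sketch below is only an illustration, not the researchers' code: it compares the continuous-area estimate 360 × 360 × π/4 with a count of pixel centers inside a centered circle on a 512 × 512 grid. The function name `count_pixels_in_circle` and the centering convention are assumptions, and the exact discrete count shifts by a few pixels depending on that convention.

```python
import math

SLM_SIZE = 512           # SLM is 512 x 512 pixels (from the article)
APERTURE_DIAMETER = 360  # diameter of the circular AO region, in pixels


def count_pixels_in_circle(grid_size, diameter):
    """Count pixels whose centers fall inside a circle centered on the grid.

    Assumption: pixel centers sit at integer coordinates 0..grid_size-1,
    so the geometric center of the grid is at (grid_size - 1) / 2.
    """
    center = (grid_size - 1) / 2.0
    radius_sq = (diameter / 2.0) ** 2
    count = 0
    for row in range(grid_size):
        for col in range(grid_size):
            if (row - center) ** 2 + (col - center) ** 2 <= radius_sq:
                count += 1
    return count


discrete = count_pixels_in_circle(SLM_SIZE, APERTURE_DIAMETER)
continuous = math.pi / 4 * APERTURE_DIAMETER ** 2

print(f"pixel centers inside the circle: {discrete}")
print(f"pi/4 * 360^2:                    {continuous:.1f}")
```

The continuous estimate comes to roughly 101,788, within a few pixels of the quoted 101,784; the small difference is consistent with counting whole pixels rather than area.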

Once a distorted doughnut-shaped beam is imaged onto the CMOS sensor, the CNN is applied to the image to perform 2D image correction. The CNN has five convolution layers (32 kernels of size 5 × 5 in the first two and 64 kernels of size 3 × 3 in the last three) and three fully connected layers (512 neurons in the first two and 12 neurons in the last layer). The CNN is trained to extract features from the input image and is well suited to 2D image processing.
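To get a feel for the size of such a network, the layer sizes quoted above can be written down as a small specification and the trainable parameters tallied. This is a rough sketch, not the published model: the single-channel input (`IN_CHANNELS = 1`) is an assumption, and the first fully connected layer is left out because its input size depends on the image size, strides, and pooling, none of which are given here.

```python
# Layer sizes as stated in the article; everything else is an assumption.
CONV_LAYERS = [
    # (number of kernels, kernel size)
    (32, 5), (32, 5),
    (64, 3), (64, 3), (64, 3),
]
FC_WIDTHS = [512, 512, 12]  # neurons in the three fully connected layers
IN_CHANNELS = 1             # assumption: grayscale camera image


def conv_param_counts(in_channels, layers):
    """Weights plus biases for each convolution layer, in order."""
    counts = []
    for out_channels, k in layers:
        weights = in_channels * out_channels * k * k
        counts.append(weights + out_channels)  # one bias per kernel
        in_channels = out_channels
    return counts


def fc_param_counts(widths):
    """Weights plus biases for every fully connected layer after the first.

    The first layer is skipped because its input size is not known.
    """
    return [
        widths[i] * widths[i + 1] + widths[i + 1]
        for i in range(len(widths) - 1)
    ]


conv_counts = conv_param_counts(IN_CHANNELS, CONV_LAYERS)
fc_counts = fc_param_counts(FC_WIDTHS)

print("conv layer parameters:", conv_counts)
print("FC layer 2 and 3 parameters:", fc_counts)
```

Under these assumptions the five convolution layers hold about 119,000 parameters in total, so most of the model's weights would sit in the fully connected layers.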

Phase aberrations are characterized by 15 Zernike modes. The first three modes (piston phase, tip, and tilt) are neglected because they do not distort the IPSF: piston has no effect on the intensity, and tip and tilt only shift the focus. As a result, only the last 12 modes are predicted by the CNN model.
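As an illustration of how 12 predicted coefficients could be turned back into a phase value, the sketch below evaluates unnormalized Zernike polynomials on the unit disk and sums modes 3 through 14. The OSA/ANSI single-index ordering is an assumption (the ordering used in the experiment is not stated here, and Noll ordering is also common), as are the function names and the lack of normalization.

```python
import math


def osa_to_nm(j):
    """Convert an OSA/ANSI single index j to radial order n and azimuthal m."""
    n = 0
    while (n + 1) * (n + 2) // 2 <= j:
        n += 1
    m = 2 * j - n * (n + 2)
    return n, m


def zernike_radial(n, m, rho):
    """Radial polynomial R_n^|m|(rho), standard factorial formula."""
    m = abs(m)
    total = 0.0
    for k in range((n - m) // 2 + 1):
        num = (-1) ** k * math.factorial(n - k)
        den = (math.factorial(k)
               * math.factorial((n + m) // 2 - k)
               * math.factorial((n - m) // 2 - k))
        total += num / den * rho ** (n - 2 * k)
    return total


def zernike(j, rho, theta):
    """Unnormalized Zernike mode j at polar coordinates (rho, theta)."""
    n, m = osa_to_nm(j)
    radial = zernike_radial(n, m, rho)
    if m >= 0:
        return radial * math.cos(m * theta)
    return radial * math.sin(-m * theta)


def phase_from_coefficients(coeffs, rho, theta, first_mode=3):
    """Sum modes first_mode, first_mode + 1, ... weighted by coeffs.

    first_mode=3 skips piston and the two tip/tilt modes (j = 0, 1, 2).
    """
    return sum(c * zernike(first_mode + i, rho, theta)
               for i, c in enumerate(coeffs))


# Hypothetical example: 12 coefficients with only defocus (j = 4) nonzero.
coeffs = [0.0] * 12
coeffs[1] = 0.5
edge_phase = phase_from_coefficients(coeffs, rho=1.0, theta=0.0)
print("skipped modes (n, m):", [osa_to_nm(j) for j in range(3)])
print("phase at pupil edge:", edge_phase)
```

In a real correction loop, the phase computed this way would be evaluated at every active SLM pixel and applied with opposite sign to cancel the aberration; that step is omitted here.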

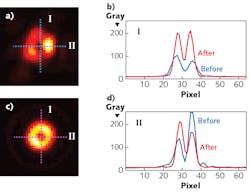

In a series of experiments using 200 different configurations of the random phase masks to corrupt the doughnut-shaped beam, application of the CNN algorithm to adjust the AO elements achieved an average 97.5% correction accuracy with just one iteration in an average of 17 ms when using a standard personal computer (see figure). Faster processors could further increase the speed by at least one order of magnitude.

REFERENCE

1. Y. Zhang et al., Opt. Express, 27, 12, 16871–16881 (2019).

About the Author

Gail Overton

Senior Editor (2004-2020)

Gail has more than 30 years of engineering, marketing, product management, and editorial experience in the photonics and optical communications industry. Before joining the staff at Laser Focus World in 2004, she held many product management and product marketing roles in the fiber-optics industry, most notably at Hughes (El Segundo, CA), GTE Labs (Waltham, MA), Corning (Corning, NY), Photon Kinetics (Beaverton, OR), and Newport Corporation (Irvine, CA). During her marketing career, Gail published articles in WDM Solutions and Sensors magazine and traveled internationally to conduct product and sales training. Gail received her BS degree in physics, with an emphasis in optics, from San Diego State University in San Diego, CA in May 1986.