First conceptualized by Massachusetts Institute of Technology (MIT; Cambridge, MA) researchers and reported on by Laser Focus World in late 2010, an ultrafast camera that can see around corners has now been demonstrated experimentally by members of the original research team at the MIT Media Lab.1 The streak-camera-based setup, similar to the one the MIT Media Lab used to create trillion-frame-per-second visualizations, uses time-of-flight techniques and computational algorithms to decode diffuse reflections from a 3D object concealed around a corner and reconstruct the object's shape.

The diffuser wall

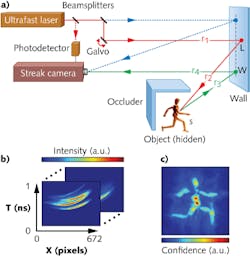

An important part of the experimental setup for around-the-corner object reconstruction is a 40-cm-high, 25-cm-wide diffuser wall. An ultrafast laser and a streak camera are both directed at the wall, and an object is placed near the wall but hidden around the corner by an opaque divider, out of the camera's direct line of sight (see figure). An attenuated portion of the laser beam is also reflected by the diffuser wall directly into the streak camera.

The laser is directed via a galvanometric scanner to points on the wall above and below the field of view of the camera. The laser emits 50-fs-long pulses and the camera digitizes information in 2 ps time intervals, recording streak images with one spatial and one temporal dimension.
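To put the 50 fs pulse width and the 2 ps time bins in spatial terms, the sketch below converts durations into the distance light travels. It assumes propagation at the vacuum speed of light (a close approximation in air); the streak-image dimensions and the `t0_s` offset are hypothetical placeholders, not values from the article.

```python
C = 299_792_458.0        # speed of light in vacuum, m/s (approximation for air)
BIN_WIDTH_S = 2e-12      # streak-camera time bin, from the text
PULSE_WIDTH_S = 50e-15   # laser pulse duration, from the text


def path_length_m(duration_s: float) -> float:
    """Distance light travels in the given time."""
    return C * duration_s


def bin_to_path_length_m(time_bin: int, t0_s: float = 0.0) -> float:
    """Path length at the start of a given time bin.

    t0_s is a hypothetical time offset (for example, from a calibration
    reference); it is not a parameter named in the article.
    """
    return C * (t0_s + time_bin * BIN_WIDTH_S)


# A streak image: rows are pixels along one line on the wall (space),
# columns are time bins (time). The sizes are illustrative placeholders.
N_PIXELS, N_BINS = 256, 512
streak_image = [[0.0] * N_BINS for _ in range(N_PIXELS)]

print(f"2 ps bin    -> {path_length_m(BIN_WIDTH_S) * 1e3:.3f} mm of path")   # 0.600 mm
print(f"50 fs pulse -> {path_length_m(PULSE_WIDTH_S) * 1e6:.1f} um of path")  # 15.0 um
```

Each 2 ps column therefore spans about 0.6 mm of optical path, while the pulse itself is only about 15 µm long.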

Reconstruction

The laser is scanned across the wall and a streak image is recorded at each position; triangulation and reconstruction algorithms then decode the diffuse reflections into a 3D data set from which the physical shape of the object can be reconstructed. The reconstruction algorithm converts each measured time of flight into a light path length and triangulates using all laser positions and all pixels on the wall observed by the camera.
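One way to see the geometric constraint behind this triangulation: for a given laser spot and an observed wall point, the legs from the laser to the spot and from the wall point to the camera are known, so subtracting them from the total path c·t leaves the length of spot → hidden point → wall point. The hidden point must then lie on an ellipsoid whose foci are the laser spot and the wall point. The sketch below is an illustration under that assumption; the coordinates and helper names are hypothetical, and the occluder is not modeled.

```python
import math
from typing import Tuple

C = 299_792_458.0  # speed of light, m/s
Point = Tuple[float, float, float]


def hidden_path_length(t_total_s: float, laser_src: Point, laser_spot: Point,
                       wall_point: Point, camera: Point) -> float:
    """Length of the unknown laser_spot -> hidden point -> wall_point legs.

    The laser -> spot and wall_point -> camera legs are known from the
    geometry, so they are subtracted from the total path length c * t.
    """
    return C * t_total_s - math.dist(laser_src, laser_spot) - math.dist(wall_point, camera)


def on_ellipsoid(candidate: Point, laser_spot: Point, wall_point: Point,
                 d: float, tol: float) -> bool:
    """True if candidate lies (within tol) on the ellipsoid with foci
    laser_spot and wall_point whose summed focal distance is d."""
    return abs(math.dist(laser_spot, candidate) + math.dist(candidate, wall_point) - d) <= tol


# Hypothetical geometry in metres; the wall is the plane z = 0.
laser_src, camera = (0.5, 0.0, 1.0), (0.4, 0.0, 1.0)
spot, wall_pt = (0.0, 0.2, 0.0), (0.0, -0.1, 0.0)
hidden = (-0.2, 0.05, 0.3)

# Forward model: total time of flight laser -> spot -> hidden -> wall point -> camera.
total = (math.dist(laser_src, spot) + math.dist(spot, hidden)
         + math.dist(hidden, wall_pt) + math.dist(wall_pt, camera))
t = total / C

d = hidden_path_length(t, laser_src, spot, wall_pt, camera)
print(on_ellipsoid(hidden, spot, wall_pt, d, tol=1e-9))           # True
print(on_ellipsoid((0.1, 0.1, 0.5), spot, wall_pt, d, tol=1e-9))  # False
```

A single measurement only restricts the hidden point to a surface. Combining many laser positions and wall pixels narrows it down to the points those surfaces share.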

Each pixel in the streak image corresponds to a finite area on the diffuser wall and a 2 ps time interval, forming a discrete space-time bin. However, because of the camera's finite temporal point-spread function, the effective time resolution of the system is 15 ps. In a process called backprojection, the streak images and time-of-flight data are used to estimate the probable locations of object points within the hidden volume, producing a 3D depth map of the concealed object.
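Backprojection can be illustrated with a toy voting scheme: every (laser spot, wall point, time bin) measurement adds a vote to each voxel lying on its ellipsoid, and voxels on many ellipsoids accumulate the most votes. The sketch below is a minimal, unfiltered version under stated assumptions. The scene is a single hypothetical scattering point, the geometry is invented, intensity falloff, noise, and the occluder are ignored, and the vote tolerance (half the 15 ps effective resolution, expressed as path length) is an assumed design choice. It does not include any filtering or weighting that a real reconstruction would need.

```python
import itertools
import math

C = 299_792_458.0                   # speed of light, m/s
BIN_S = 2e-12                       # streak-camera time bin (from the text)
EFFECTIVE_RES_S = 15e-12            # effective time resolution (from the text)
TOL_M = 0.5 * C * EFFECTIVE_RES_S   # assumed vote tolerance: half the resolution, as path length

# Hypothetical geometry in metres: the wall is the plane z = 0, the hidden region is z > 0.
LASER_SRC = (0.5, 0.0, 1.0)
CAMERA = (0.4, 0.0, 1.0)
LASER_SPOTS = [(-0.15, 0.15, 0.0), (0.15, 0.15, 0.0), (0.0, -0.15, 0.0)]
WALL_POINTS = [(x, y, 0.0) for x in (-0.1, 0.0, 0.1) for y in (-0.1, 0.0, 0.1)]
HIDDEN_POINT = (0.0, 0.04, 0.3)     # single scattering point to recover


def simulate_bin(spot, wall_pt):
    """Forward model: index of the 2 ps bin in which light scattered by
    HIDDEN_POINT reaches the camera (no noise, no intensity falloff)."""
    path = (math.dist(LASER_SRC, spot) + math.dist(spot, HIDDEN_POINT)
            + math.dist(HIDDEN_POINT, wall_pt) + math.dist(wall_pt, CAMERA))
    return int(path / C / BIN_S)


def backproject(measurements, voxels):
    """Unfiltered backprojection: every measurement adds one vote to each voxel
    whose spot -> voxel -> wall-point path matches the measured path within TOL_M."""
    # Convert each bin to a hidden-leg length: bin centre as path length,
    # minus the known laser -> spot and wall-point -> camera legs.
    ellipsoids = [
        (spot, wall_pt,
         C * (time_bin + 0.5) * BIN_S
         - math.dist(LASER_SRC, spot) - math.dist(wall_pt, CAMERA))
        for (spot, wall_pt), time_bin in measurements.items()
    ]
    scores = {}
    for v in voxels:
        scores[v] = sum(
            1 for spot, wall_pt, d in ellipsoids
            if abs(math.dist(spot, v) + math.dist(v, wall_pt) - d) <= TOL_M
        )
    return scores


measurements = {(s, w): simulate_bin(s, w) for s in LASER_SPOTS for w in WALL_POINTS}

# 40 x 40 x 40 cm voxel grid in front of the wall at a 2 cm pitch.
lateral = [-0.2 + 0.02 * i for i in range(21)]
depth = [0.1 + 0.02 * i for i in range(21)]
voxels = list(itertools.product(lateral, lateral, depth))

scores = backproject(measurements, voxels)
best = max(scores, key=scores.get)
print("best voxel:", tuple(round(c, 3) for c in best),
      "votes:", scores[best], "of", len(measurements))
```

The voxel at the hidden point collects a vote from every measurement, because the 0.3 mm half-bin quantization error is well inside the roughly 2.25 mm tolerance. A tighter tolerance sharpens the peak but risks dropping true votes once noise and blur are present.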

The resulting data are used to compute a final image and a depth map of a concealed object within a 40 × 40 × 40 cm³ volume, with submillimeter depth precision and centimeter-scale lateral precision. “The ability to look around the corner with its many applications is only one example of what is possible in this new research area between computational imaging and ultrafast optics,” says Andreas Velten, a member of the research team at MIT. “We hope to inspire more research projects in this field.”

“Going from an x-ray machine to a CAT-scan machine required a clever co-design of hardware and computation via tomographic reconstruction; similarly, going from a traditional hardware such as time-of-flight radar to approaches like CORNAR [see http://cameraculture.info] to look around corners requires a new generation of mathematical tools,” says Ramesh Raskar, MIT Media Lab associate professor. “Our Nature Communications paper with collaborators and my own earlier white papers indicate some of the future directions of such a joint hardware-software approach. Initially, the technique will be targeted at controlled settings for scientific, medical, and industrial imaging. But over time, as ultrafast imaging improves, more portable and lower-power form factors should enable a range of indoor and outdoor solutions from robotics, vehicles, factory automation, first responders, and computational photography.”

REFERENCE

1. A. Velten et al., Nat. Commun., 3, 745, 1–8 (Mar. 20, 2012).

About the Author

Gail Overton

Senior Editor (2004–2020)

Gail has more than 30 years of engineering, marketing, product management, and editorial experience in the photonics and optical communications industry. Before joining the staff at Laser Focus World in 2004, she held many product management and product marketing roles in the fiber-optics industry, most notably at Hughes (El Segundo, CA), GTE Labs (Waltham, MA), Corning (Corning, NY), Photon Kinetics (Beaverton, OR), and Newport Corporation (Irvine, CA). During her marketing career, Gail published articles in WDM Solutions and Sensors magazine and traveled internationally to conduct product and sales training. Gail received her BS degree in physics, with an emphasis in optics, from San Diego State University in San Diego, CA, in May 1986.