Fiber-optic Communications: Fiber bandwidth pushes closer to nonlinear Shannon limit

At the 2019 Optical Fiber Communication Conference (OFC; held March 3-7 in San Diego, CA), bandwidth was the hottest topic, but it was also evident that customers are pushing for greater network flexibility to meet their changing needs. Traditional service providers are adding new services and testing 5G, cable companies are bringing digital fiber links closer to their end users, and cloud and content networks want seamless performance for datacenters occupying multiple buildings. All of them want more automated and adaptable software-defined networks, says Helen Xenos of network equipment maker Ciena (Hanover, MD).

A big change on the bandwidth frontier is the growing interest in upgrading datacenter links from 100 Gbit/s to the new 400 Gbit/s rate introduced in 2017. More than 10 million 400 Gbit/s ports are expected to ship over the next three years, says Jimmy Yu, a vice president at the Dell’Oro Group (Redwood City, CA) market research firm.

The trend toward higher-capacity and more-flexible systems was evident on the show floor, where the Optical Internetworking Forum, a nonprofit consortium promoting interoperable computer networking, ran interoperability demonstrations for both 400 Gbit and Flexible Ethernet. The consortium’s 400 Gbit ZR standard is an inexpensive version of 400 Gbit Ethernet designed for datacenter links of up to 100 km and for use with wavelength-division multiplexing (WDM). It also supports the high-order 16QAM modulation format.

Most first-generation 400 Gbit systems are limited to spans of 100 km, suitable for links between datacenters in the same urban area. Their data rates typically drop to 200 Gbit/s at metro distances of a few hundred kilometers and to 100 Gbit/s at longer distances. At OFC, both Ciena and Infinera (Sunnyvale, CA) announced plans for a new generation of 800 Gbit systems able to carry two 400 Gbit signals between datacenters.

Market needs are diverging, says Xenos, so Ciena will introduce two versions of its new WaveLogic 5 system. The Extreme model focuses on high-bandwidth needs, transporting 800 Gbit/s over 100 to 200 km and 600 Gbit/s over distances up to 1000 km. It can also carry 400 Gbit/s over transpacific distances when users need long-haul transport. Operating at 800 Gbit/s requires a cutting-edge digital signal processor fabricated with 7 nm features that runs at 95 gigasymbols per second (95 gigabaud), packing more bits into each symbol, and high-bandwidth electro-optics. Plans call for deliveries of that system to start by year-end. A smaller Nano version with data rates of 100 to 400 Gbit/s is also planned; it is designed to fit into smaller spaces at lower cost, primarily in datacenters.
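The relationship between symbol rate and data rate can be checked with a little arithmetic. The sketch below takes the 95 gigasymbol-per-second figure and the 800, 600, and 400 Gbit/s rates quoted above, and assumes (this is not stated in the article) that the signal is carried on two polarizations, as is common in coherent systems. It estimates only the net information bits per symbol; the raw figure would be somewhat higher once error-correction overhead is included.

```python
# Figures quoted in the article.
SYMBOL_RATE_BAUD = 95e9                 # 95 gigasymbols per second
NET_RATES_BPS = [800e9, 600e9, 400e9]   # line rates at different reaches

# Assumption, not from the article: two polarizations per wavelength.
POLARIZATIONS = 2


def net_bits_per_symbol(net_rate_bps,
                        symbol_rate_baud=SYMBOL_RATE_BAUD,
                        polarizations=POLARIZATIONS):
    """Net information bits per symbol on each polarization."""
    return net_rate_bps / (symbol_rate_baud * polarizations)


for rate in NET_RATES_BPS:
    bits = net_bits_per_symbol(rate)
    print(f"{rate / 1e9:.0f} Gbit/s: {bits:.2f} net bits per symbol per polarization")
```

Under these assumptions, the longer-reach rates need fewer bits per symbol at the same symbol rate, which is consistent with trading capacity for noise tolerance as distance grows.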

Infinera announced its own 800 Gbit system, the Infinite Capacity Engine 6 (ICE6), for delivery in the second half of 2020. It will upgrade the current 400 Gbit ICE4 by dynamically adjusting the number of bits per modulation symbol and by making better use of subcarriers, which split the signal across multiple carriers running at lower baud rates.

Record spectral efficiency

At the technical sessions, engineers from Infinera and Facebook reported a record long-haul spectral efficiency of 6.21 bits per second per hertz of bandwidth in tests on the 6605 km MAREA cable between Virginia and Spain. As installed, MAREA transmitted 20 Tbit/s on each of eight fiber pairs. The team led by Stephen Grubb of Facebook and Pierre Mertz of Infinera set aside one fiber for tests using the 16QAM (quadrature amplitude modulation) code on a superchannel with eight evenly spaced laser sources. By encoding four bits in each symbol interval, 16QAM can represent any binary value from 0000 to 1111, for a total of 16 distinct symbol states.
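To make the four-bits-per-symbol idea concrete, here is a minimal sketch of a square 16QAM mapper. The article does not say which bit labeling the MAREA test used; the Gray-coded labeling and the amplitude levels of -3, -1, 1, and 3 below are illustrative assumptions, and the function names are hypothetical.

```python
# Gray-coded amplitude levels for one 2-bit axis (assumed labeling):
# neighbouring levels differ in exactly one bit.
GRAY_LEVELS = {0b00: -3, 0b01: -1, 0b11: 1, 0b10: 3}
LEVEL_TO_BITS = {level: bits for bits, level in GRAY_LEVELS.items()}


def map_16qam(nibble):
    """Map a 4-bit value (0..15) to a complex constellation point."""
    if not 0 <= nibble <= 0b1111:
        raise ValueError("16QAM carries exactly four bits per symbol")
    i_bits = (nibble >> 2) & 0b11   # upper two bits set the in-phase level
    q_bits = nibble & 0b11          # lower two bits set the quadrature level
    return complex(GRAY_LEVELS[i_bits], GRAY_LEVELS[q_bits])


def demap_16qam(point):
    """Recover the 4-bit value by snapping each axis to the nearest level."""
    def nearest(x):
        return min(LEVEL_TO_BITS, key=lambda level: abs(level - x))

    i_bits = LEVEL_TO_BITS[nearest(point.real)]
    q_bits = LEVEL_TO_BITS[nearest(point.imag)]
    return (i_bits << 2) | q_bits


# All 16 values from 0000 to 1111 land on distinct points.
constellation = [map_16qam(n) for n in range(16)]
for n, point in enumerate(constellation):
    print(f"{n:04b} -> {point.real:+.0f} {point.imag:+.0f}j")
```

A received point that noise has nudged slightly, such as `complex(2.6, -0.7)`, still snaps back to the intended symbol. Packing the 16 points closer together relative to the noise is what makes 16QAM harder to sustain over transoceanic distances than lower-order formats.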

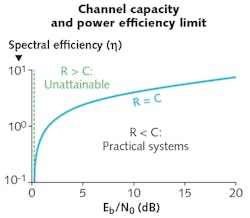

The test was the first to successfully use 16QAM over transatlantic distances, says Geoff Bennett of Infinera. The team used only a few wavelengths at once, but filled the remaining channels with noise to model how those channels would behave when carrying error-free signals, and from that estimated that a fiber filled with superchannels could transmit 26.2 Tbit/s of data. At that level, he wrote in a blog, “we are approaching the nonlinear Shannon limit [see figure] in long-haul and subsea cable systems, so each innovation in coherent processing or optical components is focused on pulling out incremental fractions of a decibel in transmission budget to increase capacity-reach over a given fiber pair.”
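The reason a "nonlinear" Shannon limit exists can be sketched numerically. In a linear channel, raising the launch power always raises the signal-to-noise ratio and therefore the Shannon spectral efficiency, log2(1 + SNR). In fiber, nonlinear interference grows faster than the signal, so the SNR peaks at some optimum power. The sketch below uses a simplified Gaussian-noise-style model in which nonlinear interference scales with the cube of launch power. This model is a swapped-in illustration rather than the method used in the MAREA test, and the noise and nonlinearity values are hypothetical numbers chosen only to show the shape of the curve.

```python
import math

# Hypothetical, illustrative parameters (not taken from the article).
ASE_NOISE_MW = 0.01   # amplifier noise power in the channel bandwidth, mW
NLI_COEFF = 0.02      # nonlinear-interference coefficient, 1/mW^2


def snr(launch_power_mw, ase=ASE_NOISE_MW, eta=NLI_COEFF):
    """SNR with amplifier noise plus interference growing as power cubed."""
    return launch_power_mw / (ase + eta * launch_power_mw ** 3)


def spectral_efficiency(launch_power_mw, polarizations=2):
    """Shannon spectral efficiency in bit/s/Hz (two polarizations assumed)."""
    return polarizations * math.log2(1 + snr(launch_power_mw))


def optimum_power_mw(ase=ASE_NOISE_MW, eta=NLI_COEFF):
    """Power that maximizes SNR: setting d(SNR)/dP = 0 gives ase = 2*eta*P^3."""
    return (ase / (2 * eta)) ** (1 / 3)


p_opt = optimum_power_mw()
for scale in (0.25, 0.5, 1.0, 2.0, 4.0):
    p = scale * p_opt
    print(f"P = {p:.3f} mW: {spectral_efficiency(p):.2f} bit/s/Hz")
```

In this toy model, spectral efficiency falls off on both sides of the optimum power. Once a system operates near that peak, further gains have to come from lowering the noise or the nonlinearity, which matches the fractions-of-a-decibel picture Bennett describes.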

Big data companies are facing up to that limit on individual fiber capacity. “The need for parallelism is now established,” said Herve Fevrier of Facebook at OFC. Increasing fiber count may help in the near term, but submarine cables would need to be redesigned to handle more than about 25 fiber pairs. He says the “natural evolution” would be to space-division multiplexing in multicore or multimode fibers, but warns that would require improvements in power efficiency and optical amplification.
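The case for parallelism follows from simple arithmetic on the figures reported above. The sketch below assumes that the 6.21 bit/s/Hz spectral efficiency and the 26.2 Tbit/s estimate describe the same fully loaded fiber, which is an inference from the text rather than something the article states outright, and it treats the 25-pair figure as a round upper bound. Function names are hypothetical.

```python
# Figures quoted in the article.
SPECTRAL_EFFICIENCY = 6.21        # bit/s/Hz, record reported on MAREA
PER_FIBER_ESTIMATE_TBPS = 26.2    # estimated capacity of a fully loaded fiber
INSTALLED_PER_PAIR_TBPS = 20.0    # capacity per fiber pair as installed
INSTALLED_PAIRS = 8               # fiber pairs in MAREA
PAIR_LIMIT = 25                   # approximate ceiling without cable redesign


def implied_bandwidth_thz(capacity_tbps, spectral_efficiency):
    """Optical bandwidth implied by capacity / spectral efficiency.

    Tbit/s divided by bit/s/Hz gives THz.
    """
    return capacity_tbps / spectral_efficiency


def cable_capacity_tbps(pairs, per_pair_tbps):
    """Total capacity when every pair carries the same rate per direction."""
    return pairs * per_pair_tbps


print(f"Implied bandwidth: "
      f"{implied_bandwidth_thz(PER_FIBER_ESTIMATE_TBPS, SPECTRAL_EFFICIENCY):.2f} THz")
print(f"MAREA as installed: "
      f"{cable_capacity_tbps(INSTALLED_PAIRS, INSTALLED_PER_PAIR_TBPS):.0f} Tbit/s")
print(f"MAREA at the test estimate: "
      f"{cable_capacity_tbps(INSTALLED_PAIRS, PER_FIBER_ESTIMATE_TBPS):.1f} Tbit/s")
print(f"{PAIR_LIMIT} pairs at the test estimate: "
      f"{cable_capacity_tbps(PAIR_LIMIT, PER_FIBER_ESTIMATE_TBPS):.0f} Tbit/s")
```

With per-fiber capacity nearly fixed, total cable capacity in this picture grows only linearly with the number of parallel paths, which is why attention shifts to fiber count and, beyond that, to space-division multiplexing.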

About the Author

Jeff Hecht

Contributing Editor

Jeff Hecht is a regular contributing editor to Laser Focus World and has been covering the laser industry for 35 years. A prolific book author, Jeff's published works include “Understanding Fiber Optics,” “Understanding Lasers,” “The Laser Guidebook,” and “Beam Weapons: The Next Arms Race.” He also has written books on the histories of lasers and fiber optics, including “City of Light: The Story of Fiber Optics,” and “Beam: The Race to Make the Laser.” Find out more at jeffhecht.com.