Computational Imaging: Computational imaging and the AC grid combine to manipulate artificially lit scenes

Researchers at Technion – Israel Institute of Technology (Haifa, Israel) and the University of Toronto (Toronto, ON, Canada) are exploiting the subtle phase and frequency cues of the myriad light sources powered by the alternating-current (AC) electrical grid. They use these cues not only to understand power distribution and the characteristics of different light sources, but also to perform nocturnal, high-dynamic-range, low-light imaging of artificially lit scenes.1

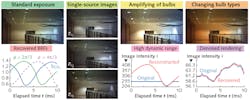

Different light sources, including ordinary bulbs, neon, LEDs, and fluorescent lights, each have a unique flicker signature: because they run on the grid's AC, their intensity and spectral power distribution vary over time. This information is usually discarded because it causes unnatural-looking colors in photos and temporal aliasing in videos. Instead, it can be used to build a time-varying model of the scene and, through coded-exposure computational imaging, to reveal details of a nighttime scene that an ordinary observer would miss. Furthermore, this AC-enabled camera, or "ACam," works internationally, under both 110 and 220 V standard lighting conditions.

The ACam prototype

The nominal 50 or 60 Hz frequency of the AC grid makes each light source flicker, creating a spatially varying, quasiperiodic signal in the image: shadows and specularities dim or brighten according to the AC phase and the type of each light source. To capture the information hidden in an AC-lit scene, ACam uses a coded-exposure technique that acquires high-dynamic-range (HDR) images, each corresponding to a fraction of the AC cycle. It does so with long exposures synchronized to the AC signal, masking and unmasking pixels at 2.7 kHz.
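
The timing behind this kind of synchronized masking can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the ACam control code: it assumes lamp intensity follows electrical power (roughly the square of the voltage), so the flicker repeats at twice the grid frequency (100 Hz on a 50 Hz grid, consistent with the 10 ms cycle quoted for the prototype), and it treats the 2.7 kHz masking rate as a fixed grid of equal time slots. The function names and the `phase0_s` lock offset are hypothetical.

```python
def flicker_frequency_hz(grid_hz: float) -> float:
    """Assumption: light output tracks power ~ V(t)^2, which repeats twice per AC period."""
    return 2.0 * grid_hz


def slots_per_flicker_cycle(mask_rate_hz: float, grid_hz: float) -> float:
    """How many mask patterns fit into one flicker period at a given mask rate."""
    return mask_rate_hz / flicker_frequency_hz(grid_hz)


def exposure_windows(slot: int, n_cycles: int, grid_hz: float,
                     mask_rate_hz: float = 2700.0, phase0_s: float = 0.0):
    """(start, end) times in seconds when the mask exposes the sensor.

    The same slot, i.e. the same fraction of the flicker cycle, is exposed once
    per cycle, so light from that phase accumulates over n_cycles.
    phase0_s is a hypothetical offset that locks slot 0 to the AC waveform.
    """
    period = 1.0 / flicker_frequency_hz(grid_hz)
    slot_len = 1.0 / mask_rate_hz
    if (slot + 1) * slot_len > period + 1e-12:
        raise ValueError("slot does not fit inside one flicker period")
    return [
        (phase0_s + c * period + slot * slot_len,
         phase0_s + c * period + (slot + 1) * slot_len)
        for c in range(n_cycles)
    ]


if __name__ == "__main__":
    print(flicker_frequency_hz(50))  # 100.0
    print(slots_per_flicker_cycle(2700, 50), slots_per_flicker_cycle(2700, 60))
    print(exposure_windows(slot=3, n_cycles=2, grid_hz=50))
```

At 2.7 kHz, a 10 ms flicker cycle holds 27 slots (22.5 on a 60 Hz grid), which is in the same range as the roughly 30 temporal samples the prototype acquires; how ACam actually schedules its DMD patterns may differ.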

Using a series of equations that account for the illuminance, frequency, flicker, color, and other attributes of various artificial sources, the researchers created a database of electric lights called DELIGHT. It covers a variety of light sources (including high-pressure sodium, metal halide, mercury, fluorescent, and LED varieties) and quantifies the bulb response function (BRF) of each. Because different source types have different BRFs, images of a lit scene can be separated into the contributions of the individual sources, showing how the scene would appear under each type of bulb or combination of bulbs, or enhanced for better nighttime or low-light imaging (see figure).
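
The separation step can be illustrated with a linear model, which is an assumption of this sketch rather than a description of the published method: each pixel's flicker over one cycle is treated as a weighted sum of known BRFs, one per source type, and the weights are recovered by least squares. Once the weights are known, the pixel can be re-rendered with any subset of sources switched off. The two BRF shapes are made-up cosines, not DELIGHT entries, and `sample_brf`, `unmix`, and `relight` are hypothetical names.

```python
import math


def sample_brf(amplitude: float, phase: float, n: int = 27):
    """Hypothetical BRF: mean-normalized intensity sampled at n points over one flicker cycle."""
    return [1.0 + amplitude * math.cos(2 * math.pi * t / n - phase) for t in range(n)]


def solve(a, y):
    """Solve the square system a x = y by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [y[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def unmix(pixel, brfs):
    """Least-squares weights w with pixel[t] ~ sum_s w[s] * brfs[s][t], via the normal equations."""
    k = len(brfs)
    ata = [[sum(p * q for p, q in zip(brfs[i], brfs[j])) for j in range(k)] for i in range(k)]
    aty = [sum(p * q for p, q in zip(brfs[i], pixel)) for i in range(k)]
    return solve(ata, aty)


def relight(weights, brfs, keep):
    """Re-render one flicker cycle of the pixel with only the sources flagged in `keep` on."""
    n = len(brfs[0])
    return [sum(w * b[t] for w, b, on in zip(weights, brfs, keep) if on) for t in range(n)]


if __name__ == "__main__":
    brf_a = sample_brf(0.1, 0.0)  # shallow flicker (hypothetical)
    brf_b = sample_brf(0.6, 1.0)  # deep, phase-shifted flicker (hypothetical)
    brfs = [brf_a, brf_b]
    pixel = [3.0 * p + 0.5 * q for p, q in zip(brf_a, brf_b)]
    w = unmix(pixel, brfs)
    print(w)
    print(relight(w, brfs, keep=[False, True])[:3])
```

Real BRFs are not pure cosines, and a practical pipeline would also have to account for each source's AC phase and for noise; the sketch only shows why distinct BRFs make per-source contributions separable.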

By keeping its shutter open for hundreds of AC cycles and optically blocking its sensor at all times except during the same brief interval in each cycle, ACam overcomes problems with low light levels, ultrabright lights, and noisy scenes. A digital micromirror device (DMD) performs the high-speed pixel masking for an off-the-shelf camera that was modified for passive AC-modulated imaging.
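
Why one long, gated exposure helps in the dark can be seen from a textbook sensor-noise model with shot noise and read noise. The model is an assumption of this sketch, not a figure from the ACam work: it compares accumulating the same brief cycle fraction over many cycles in a single readout, as described above, with capturing each cycle as its own short frame and summing the frames in software. Signals are in photoelectrons, and the function names are hypothetical.

```python
import math


def snr_gated_single_readout(signal_per_cycle: float, n_cycles: int, read_noise: float) -> float:
    """One long masked exposure: light from n_cycles gate windows, read out once."""
    s = signal_per_cycle * n_cycles
    # Shot-noise variance equals the signal (Poisson); read noise is added once.
    return s / math.sqrt(s + read_noise ** 2)


def snr_summed_frames(signal_per_cycle: float, n_cycles: int, read_noise: float) -> float:
    """n_cycles separate short frames summed afterwards: read noise is paid for every frame."""
    s = signal_per_cycle * n_cycles
    return s / math.sqrt(s + n_cycles * read_noise ** 2)


if __name__ == "__main__":
    print(snr_gated_single_readout(1.0, 1000, 5.0))
    print(snr_summed_frames(1.0, 1000, 5.0))
```

With 1 photoelectron per gate window, 1000 cycles, and 5 electrons of read noise, the single readout gives an SNR of about 31, versus about 6 for summed frames, because read noise is incurred once instead of 1000 times.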

The current ACam prototype is built around a Texas Instruments (Dallas, TX) DLP LightCrafter 3000 DMD and an Allied Vision (Exton, PA) Prosilica GT 2.8 Mpixel camera. The system acquires about 30 temporal samples of the flicker cycle, integrating each over anywhere from a single cycle (10 ms) to 30 s. The coded aperture exposes each pixel for a different number of cycles according to its brightness, which extends the dynamic range of the system by a factor of 48 relative to the native dynamic range of the Prosilica camera. "Better off-the-shelf DMDs exist. They can yield much denser spatiotemporal sampling of the flicker," says Technion lead researcher Mark Sheinin.
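
The brightness-dependent exposure can be sketched as follows. How ACam chooses each pixel's cycle count is not described here, so this sketch assumes each pixel's per-cycle signal is already known (for example, from a short preview capture) and simply gives dim pixels more cycles and bright pixels fewer, so that none saturates. Dividing each accumulated value by its cycle count puts all pixels back on a common scale. The function names and parameters, including `max_cycles=48` and the full-well value, are hypothetical and not the prototype's settings.

```python
def cycles_for_pixel(per_cycle_signal: float, full_well: float, max_cycles: int) -> int:
    """Expose a pixel for as many cycles as it can take without saturating (at least 1)."""
    if per_cycle_signal <= 0:
        return max_cycles
    return max(1, min(max_cycles, int(full_well // per_cycle_signal)))


def capture(per_cycle_signal: float, n_cycles: int, full_well: float) -> float:
    """Accumulated value after n_cycles, clipped at the sensor's full-well capacity."""
    return min(full_well, per_cycle_signal * n_cycles)


def reconstruct(value: float, n_cycles: int) -> float:
    """Per-cycle estimate: divide the accumulated value by the number of exposed cycles."""
    return value / n_cycles


if __name__ == "__main__":
    full_well, max_cycles = 1000.0, 48
    for signal in (2.0, 40.0, 900.0):
        n = cycles_for_pixel(signal, full_well, max_cycles)
        print(signal, n, reconstruct(capture(signal, n, full_well), n))
```

With per-pixel cycle counts ranging from 1 to N, the measurable range grows by roughly a factor of N over a single fixed exposure; the text does not say whether this is exactly how the reported factor of 48 arises.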

"The work has multidisciplinary significance," says Technion professor Yoav Schechner. "Passive imaging can help detect and diagnose electrical grid disturbances and loads in wide areas. This is a primary application. Moreover, our approach digitally calculates how the scene would appear if subsets of its light sources were turned off. Hence, 3D computer-vision methods that have used controlled lighting (photometric stereo, shape from shadows, for example) would work 'in the wild,' exploiting uncontrolled flickering lights that just happen to be around."

REFERENCE

1. See https://goo.gl/jDgDMx.

About the Author

Gail Overton

Senior Editor (2004-2020)

Gail has more than 30 years of engineering, marketing, product management, and editorial experience in the photonics and optical communications industry. Before joining the staff at Laser Focus World in 2004, she held many product management and product marketing roles in the fiber-optics industry, most notably at Hughes (El Segundo, CA), GTE Labs (Waltham, MA), Corning (Corning, NY), Photon Kinetics (Beaverton, OR), and Newport Corporation (Irvine, CA). During her marketing career, Gail published articles in WDM Solutions and Sensors magazine and traveled internationally to conduct product and sales training. Gail received her BS degree in physics, with an emphasis in optics, from San Diego State University in San Diego, CA, in May 1986.