LASER-SPECKLE IMAGING: Simple optical setup detects speech remotely

Some technically savvy people know that light can be used as a tool for eavesdropping: if a beam from one arm of an optical interferometer is reflected off a window, the interferometer can sense sounds—including human voices—that make the window vibrate. But not only is it hard to separate voices from other sounds sensed by the interferometer; the setup must also be very precise, and in many cases there is no window conveniently nearby.

Researchers from Bar-Ilan University (Ramat-Gan, Israel) and the Universitat de València (Burjassot, Spain) have developed a different way to sense sound remotely, one that relies on neither an interferometer nor a window. Instead, a single laser beam is shone on the object to be monitored (for example, a human or a cell phone) and the speckles that appear in an out-of-focus image of the object are tracked, producing information from which a spectrogram or temporal sound signal can be constructed [1].

Speckle-pattern translation

The setup is simple and versatile. A laser illuminates a small area on the object, which can be tens of meters away, and an ordinary digital camera captures the scene. The camera lens is defocused so that, when the object tilts as it vibrates, the speckle pattern shifts across the image rather than changing randomly; in other words, the arrangement of random spots in the speckle pattern stays approximately the same (a phenomenon familiar to many who work in laser labs). The camera images are processed with a correlation that calculates the shift of the speckle pattern from frame to frame. The sound emitted by the object is then recovered as the variation over time in the position of the correlation peak.
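The correlation step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the researchers' implementation: it uses NumPy, an FFT-based circular cross-correlation with integer-pixel peak detection, and a synthetic random pattern standing in for real speckle. The function name `speckle_shift` and the 200 Hz test tone are hypothetical choices made for the example.

```python
import numpy as np

def speckle_shift(prev, cur):
    """Integer (dy, dx) shift of `cur` relative to `prev`, taken from the
    peak of their circular cross-correlation (illustrative sketch only)."""
    prev = prev - prev.mean()
    cur = cur - cur.mean()
    # Correlation theorem: cross-correlation computed through the FFT.
    corr = np.fft.ifft2(np.fft.fft2(cur) * np.conj(np.fft.fft2(prev))).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peak indices past the midpoint correspond to negative shifts.
    return tuple(int(p) if p <= n // 2 else int(p) - n
                 for p, n in zip(peak, corr.shape))

# Synthetic stand-in for speckle: a fixed random 64 x 64 pattern that is
# translated horizontally by a 200 Hz tone (assumed test signal).
rng = np.random.default_rng(0)
base = rng.random((64, 64))
frame_rate = 2480                      # frames/s, as quoted in the article
t = np.arange(256) / frame_rate
true_dx = np.round(3 * np.sin(2 * np.pi * 200 * t)).astype(int)
frames = [np.roll(base, (0, int(d)), axis=(0, 1)) for d in true_dx]

# Frame-to-frame shifts, accumulated into a peak-position track.
steps = np.array([speckle_shift(a, b) for a, b in zip(frames[:-1], frames[1:])])
position = np.vstack([[0, 0], np.cumsum(steps, axis=0)])

# The horizontal track reproduces the motion that was applied.
print(np.array_equal(position[:, 1], true_dx))
```

In this sketch the column `position[:, 1]` plays the role of the temporal sound signal. A practical system would likely need subpixel peak interpolation and some handling of noise and decorrelation, none of which is shown here.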

A CCD camera was used; the illumination source for most of the tests was a frequency-doubled Nd:YAG laser emitting at 532 nm. After first lab-testing the setup on a loudspeaker and on a person's throat to pick up vocalizations, the researchers moved on to full outdoor testing. All tests were performed at midday in summer, in the midst of strong air turbulence, and with no post-processing for noise removal.

In a typical setup for remote measurement, the camera was combined with a zoom lens (300 mm maximum focal length) or a telescope. A 64 × 64-pixel region of interest was imaged at a rate of 2480 frames/s.
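As a rough worked example of what these figures imply, the short Python sketch below computes the highest vibration frequency that a 2480 frames/s sequence can represent without aliasing (the Nyquist frequency, half the frame rate) and the number of pixels read out per second for the 64 × 64 region. The variable names are illustrative and not taken from the paper.

```python
frame_rate_hz = 2480        # frames per second, from the article
roi_pixels = 64 * 64        # region of interest, from the article

# Nyquist limit: frequencies above half the frame rate would alias.
nyquist_hz = frame_rate_hz / 2

# Pixel throughput the camera and processing chain must sustain.
pixels_per_second = roi_pixels * frame_rate_hz

print(nyquist_hz)           # 1240.0
print(pixels_per_second)    # 10158080
```

A 1240 Hz limit covers the fundamental frequencies of the human voice, which are on the order of 100 to 250 Hz, but not the full audio band; the small region of interest is what keeps the readout rate manageable at this frame rate.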

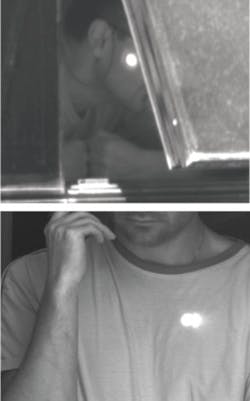

A cell phone was monitored from 60 m away and the audio signal reconstructed (the Optics Express paper contains audio files of some of the resulting sound tracks). Next, a spot on the back of a subject's head was monitored from 30 m away and the subject's speech captured. Another subject's words were captured from 100 m away across a noisy construction site by measuring a spot on the person's face (see figure).

Optical cardiogram

In a different sort of measurement, which produces a signal the researchers call an optical cardiogram, the side of a subject's neck was illuminated from 60 m away, allowing the subject's heartbeat to be measured. Such information can be used to measure the physical stress of the subject, and can also be used to distinguish one individual from another, as the heartbeat of each subject has its own characteristics (tests of both of these uses were carried out successfully by measuring a spot on a subject's wrist from about 1 m away at lower frame rates).
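One simple way to turn such a displacement track into a pulse rate is to look for the strongest spectral peak in a plausible heart-rate band. The Python sketch below is a hedged illustration rather than the method used in the paper: the 100 frames/s rate, the 30 s duration, the sinusoidal pulse model, the noise level, and the 0.7 to 3.0 Hz search band are all assumptions made for the example.

```python
import numpy as np

def pulse_rate_bpm(signal, frame_rate, band=(0.7, 3.0)):
    """Estimate beats per minute from the largest spectral peak inside
    `band` (in Hz). The band limits are an assumed plausible range."""
    signal = signal - np.mean(signal)
    spectrum = np.abs(np.fft.rfft(signal))
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / frame_rate)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    peak_freq = freqs[in_band][np.argmax(spectrum[in_band])]
    return 60.0 * peak_freq

# Synthetic stand-in for a correlation-peak track: a 1.2 Hz (72 beats/min)
# sinusoid plus noise, sampled at an assumed 100 frames/s for 30 s.
frame_rate = 100
t = np.arange(30 * frame_rate) / frame_rate
rng = np.random.default_rng(1)
track = np.sin(2 * np.pi * 1.2 * t) + 0.5 * rng.standard_normal(t.size)

bpm = pulse_rate_bpm(track, frame_rate)
print(round(bpm, 1))
```

A real heartbeat waveform is not sinusoidal, and its detailed shape is what would carry the individual characteristics mentioned above; this sketch recovers only the average rate.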

A separate test in the lab captured a subject's speech from 3 m away, with measurements done from the forehead. This test was distinguished by the wavelength of the illumination source—an IR laser emitting at 915 nm, which is invisible to the human eye.

REFERENCE

1. Z. Zalevsky et al., Optics Express 17(24), p. 21566 (Nov. 23, 2009).

About the Author

John Wallace

Senior Technical Editor (1998-2022)

John Wallace was with Laser Focus World for nearly 25 years, retiring in late June 2022. He obtained a bachelor's degree in mechanical engineering and physics at Rutgers University and a master's in optical engineering at the University of Rochester. Before becoming an editor, John worked as an engineer at RCA, Exxon, Eastman Kodak, and GCA Corporation.