Neuroscience/Optogenetics: Optogenetics a cornerstone of DARPA's neural interface program

The Neural Engineering System Design (NESD) program, launched in 2016 to facilitate precision communication between the brain and the digital world, has taken a giant step: In July 2017, its founding agency, the Defense Advanced Research Projects Agency (DARPA), awarded $65 million to help realize this idea over the next four years. Of the six teams chosen to help create and demonstrate the high-resolution neural interface, three are pursuing optical technologies as part of an implantable system that promises a foundation for future therapies to compensate for sensory deficits. Optogenetics features prominently in each of the three.

DARPA, the U.S. Department of Defense agency responsible for emerging technologies to aid the military, aims for an interface that would significantly boost the conversion of neurons' electrochemical signaling into binary code. The awards cover both fundamental research (to deepen understanding of how the brain simultaneously processes hearing, speech, and vision) and biocompatible technologies to efficiently interpret neuronal activity.

Reading, writing, and neurons

In one project, a team led by the University of California, Berkeley's Ehud Isacoff received $21.6 million to develop a novel "light field" holographic microscope that will detect neuronal activity in the cerebral cortex, and use light to modulate neurons by the thousands, or even millions, with single-cell accuracy. The ultimate goal is to enable physicians to "read from" and "write to" the brain. In the meantime, the team is working on quantitative encoding models to predict neuronal response to external stimuli, both visual and tactile. The researchers will use these predictions to structure light-stimulation patterns designed to elicit specific responses. Encoding perceptions into the cortex could enable those with blindness to see or those with paralysis to feel touch, and promises the closed-loop input-output needed for complex control of artificial limbs, for instance.
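
As a rough illustration of what an encoding model does, the sketch below fits the simplest possible version: a straight line relating stimulus strength to a single neuron's firing rate, which is then inverted to choose the stimulus expected to evoke a target response. This is not the Berkeley team's model. The function names and the calibration numbers are hypothetical, and real encoding models involve many neurons and nonlinear responses.

```python
# Hypothetical sketch of a one-neuron linear encoding model.
# All names and data are illustrative assumptions, not the NESD team's method.

def fit_linear_encoding(stimuli, responses):
    """Least-squares fit of: response ~= gain * stimulus + baseline."""
    n = len(stimuli)
    mean_s = sum(stimuli) / n
    mean_r = sum(responses) / n
    cov = sum((s - mean_s) * (r - mean_r) for s, r in zip(stimuli, responses))
    var = sum((s - mean_s) ** 2 for s in stimuli)
    gain = cov / var
    baseline = mean_r - gain * mean_s
    return gain, baseline


def predict_response(stimulus, gain, baseline):
    """Predicted firing rate for a given stimulus strength."""
    return gain * stimulus + baseline


def stimulus_for_target(target_response, gain, baseline):
    """Invert the model: which stimulus should evoke the target response?"""
    return (target_response - baseline) / gain


# Made-up calibration data: stimulus contrast vs. firing rate (spikes/s).
contrasts = [0.0, 0.25, 0.5, 0.75, 1.0]
rates = [2.0, 7.0, 12.0, 17.0, 22.0]

gain, baseline = fit_linear_encoding(contrasts, rates)
needed_contrast = stimulus_for_target(12.0, gain, baseline)
```

The same two-step logic, predicting a response and then working backward to a stimulus, is what would let a model of this kind shape a light-stimulation pattern, although at vastly larger scale.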

To enable communication with the brain, the team will genetically modify neurons to fluoresce when they fire an action potential, and to respond to light pulses.

To read these neurons, the researchers are creating a device that mounts to an opening in the skull. The device is a miniaturized version of a microscope Isacoff has used to read from and write to thousands of neurons in the brain of a larval zebrafish. In developing it, the researchers will leverage compressed light-field microscopy (based on work by Ren Ng, and developed in the labs of Laura Waller and Hillel Adesnik) to capture images using a flat sheet of lenses that allows focusing at all depths. Because images can be reconstructed computationally at any focal depth, the device can simultaneously visualize and monitor the activity of up to a million neurons at various depths.
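
To give a feel for how focus can be chosen after capture, here is a toy version of classic shift-and-add light-field refocusing, reduced to one dimension. It is a textbook simplification and not the compressed light-field method the team is developing. Each lens in the sheet is assumed to see a point source at a slightly different position, with the offset (the disparity) depending on depth; all names and values below are hypothetical.

```python
# Toy 1D shift-and-add refocusing. Illustrative assumption only;
# not the compressed light-field microscopy used in the project.

def shift(row, k):
    """Circularly shift a 1D list to the right by k samples."""
    n = len(row)
    return [row[(i - k) % n] for i in range(n)]


def make_views(position, disparity, width=9, offsets=(-1, 0, 1)):
    """Synthetic light field: one point source seen from several viewpoints.

    In the view with offset u, the point appears at position + u * disparity.
    """
    views = {}
    for u in offsets:
        row = [0.0] * width
        row[(position + u * disparity) % width] = 1.0
        views[u] = row
    return views


def refocus(views, disparity):
    """Undo the per-view shift for the chosen disparity, then average."""
    width = len(next(iter(views.values())))
    out = [0.0] * width
    for u, row in views.items():
        aligned = shift(row, -u * disparity)
        for i, value in enumerate(aligned):
            out[i] += value / len(views)
    return out


views = make_views(position=4, disparity=2)
sharp = refocus(views, 2)    # focused at the source's depth
blurred = refocus(views, 0)  # focused at the wrong depth
```

Refocusing at the correct disparity stacks all views onto one sample, while the wrong disparity spreads the same light across several samples. Sweeping the disparity is what lets a single capture be examined at many depths.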

For the writing component, the team is developing a means to project light patterns using three-dimensional holograms, stimulating large numbers of neurons at multiple depths under the cortical surface in a way that reflects normal brain activity. They will use a spatial light modulator developed by Valentina Emiliani at Paris Descartes University.

The microscope and modulator will fit inside a cube small enough (at 1 cm) to be carried comfortably on the skull. The device will direct light with precision sufficient to hit one cell at a time, and "drive patterns of activity at the same rate that they normally occur," Isacoff says.

In addition to UC Berkeley professors, the work involves researchers from Lawrence Berkeley National Laboratory, Argonne National Laboratory, Bionics Institute, Boston Micromachines Corporation, the Allen Institute for Brain Science, and other subcontractors that will help the project by providing funds for design assistance, prototyping, and fabrication.

Translating imagery direct to the brain

Roughly $25 million of the NESD funding goes to CorticalSight, an international consortium led by Jose-Alain Sahel and Serge Picaud of France's Institut de la Vision, to develop a system able to restore vision by optogenetic stimulation of the visual cortex. With the CorticalSight system, implanted electronics and micro-LED optical technology will facilitate communication between a camera and neurons in the higher areas of the brain, and thus induce visual perception while bypassing damaged retinal ganglion cells.

The camera attaches to eyeglasses and serves as an artificial retina, filming the live environment in high resolution. The brain implant will use algorithms to transform this visual information into light signals that can be interpreted by neurons in the visual cortex made light-sensitive by expression of a microbial opsin. The brain then does its usual work of translating the visual perceptions into mental images.
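
The details of CorticalSight's algorithms are not described here, but the basic idea of turning a camera frame into a light-stimulation pattern can be sketched in a few lines. The example below simply averages blocks of a grayscale frame down to a much smaller grid of emitters and scales the result to a drive level. The function name, the 0 to 100 drive scale, and the tiny frame are all assumptions made for illustration; a real system would add far more processing.

```python
# Hypothetical sketch: map a grayscale camera frame onto a coarse LED grid.
# Not CorticalSight's algorithm; names and scales are illustrative assumptions.

def frame_to_led_pattern(frame, led_rows, led_cols, max_level=100):
    """Block-average a frame (values in 0..1) to LED drive levels (0..max_level).

    Assumes the frame dimensions are exact multiples of the LED grid size.
    """
    rows, cols = len(frame), len(frame[0])
    block_h, block_w = rows // led_rows, cols // led_cols
    pattern = []
    for r in range(led_rows):
        led_row = []
        for c in range(led_cols):
            block = [
                frame[y][x]
                for y in range(r * block_h, (r + 1) * block_h)
                for x in range(c * block_w, (c + 1) * block_w)
            ]
            mean_brightness = sum(block) / len(block)
            led_row.append(round(mean_brightness * max_level))
        pattern.append(led_row)
    return pattern


# A made-up 4x4 frame: bright upper right, mid-gray lower left.
frame = [
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
    [0.5, 0.5, 0.0, 0.0],
    [0.5, 0.5, 0.0, 0.0],
]
pattern = frame_to_led_pattern(frame, led_rows=2, led_cols=2)
```

Each entry in the resulting grid stands for how strongly one emitter would be driven, so brighter regions of the scene become stronger light signals at the corresponding location.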

The CorticalSight consortium also involves the University of Pittsburgh School of Medicine, Stanford University, Friedrich Miescher Institute for Biomedical Research, the French Alternative Energies and Atomic Energy Commission-Leti, and companies GenSight Biologics, Chronocam, and Inscopix.

A prosthetic visual cortex

Finally, Vincent Pieribone of the Yale University-affiliated John B. Pierce Laboratory (New Haven, CT) will lead a project to study vision and to develop an interface system in which neurons, modified to respond to light stimulus and capable of bioluminescence, communicate with an all-optical prosthesis for the visual cortex.

As part of this effort, a team from Rice University (Houston, TX) will receive $4 million over four years to create an optical hardware and software interface that will detect signals from neurons genetically modified to produce light when active. Caleb Kemere, Assistant Professor in the departments of Electrical and Computer Engineering and Bioengineering, explains that some NESD-funded teams are investigating devices with thousands of electrodes to address individual neurons, but that his group is "taking an all-optical approach where the microscope might be able to visualize a million neurons."

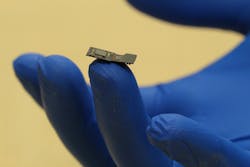

To make the neurons visible, the Pierce Lab is working to program neurons with proteins that release a photon when triggered. The Rice team, meanwhile, is developing a microscope (FlatScope) small enough to fit comfortably between the skull and cortex, yet capable of capturing three-dimensional images (see figure). It will transfer signals between a computer and up to millions of neurons, while software algorithms handle decoding and triggering.

About the Author

Barbara Gefvert

Editor-in-Chief, BioOptics World (2008-2020)

Barbara G. Gefvert has been a science and technology editor and writer since 1987, and served as editor in chief on multiple publications, including Sensors magazine for nearly a decade.