Modern ray-tracing software simplifies virtual prototyping

Starting with an optical-design prescription, engineers can quickly build a virtual prototype of an optical system that simulates the actual hardware. Unfortunately, many designers put off building a software model during the concept phase, some because they perceive models as complicated to construct and others because of poor results from previous attempts at virtual prototyping.

The former concern may have been valid with older, script-based software. But modern optical-engineering software has been strongly influenced by CAD software and is consequently more visual and intuitive to use, which shortens model development. Several optical-software packages can generate nearly photorealistic renderings of a system, which can be very helpful in explaining it to others such as investors and clients.

As for the concern about poor results from previous prototyping attempts, our experience is that careful modeling with reasonably accurate surface and source properties yields good agreement with the performance of the actual hardware. Nevertheless, the power of modern optical-design software is both a blessing and a curse. A professional optical designer with years of experience will quickly recognize when something is amiss, but a less skilled designer is inclined to "push the button" and trust wholeheartedly in a model that may have been constructed incorrectly.

Assigning surface properties

The most critical part of the virtual prototyping process occurs after the optical and mechanical designs have been integrated, when an engineer must assign specular and scattering surface properties to every component in the system. This is generally the most error-prone step, for several reasons. First, often no one has considered the surface properties beyond the simple statement "let's paint it black." Black paints fall into two general categories, specular (glossy) and diffuse (matte), and a variety of surface treatments (textures) are in common use, such as anodizing, bead blasting, and roughening. Because computer models simulate the surface finish with a scatter function, the engineer must locate the relevant scatter data for each surface treatment he or she intends to use.
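To make "scatter function" concrete, the sketch below uses the ABg model, a parametric BSDF supported by several nonsequential ray tracers: BSDF = A / (B + |β − β0|^g), where β and β0 are the projections of the scattered and specular directions onto the surface plane. The function names and coefficients are hypothetical and not measured data for any real paint; in practice A, B, and g come from fitting measured scatter data. The second function integrates the BSDF over the hemisphere to give the total integrated scatter (TIS), a quick sanity check on any set of coefficients.

```python
import math


def abg_bsdf(A, B, g, beta, beta0):
    """ABg scatter model, BSDF = A / (B + |beta - beta0|**g), in 1/sr.

    beta and beta0 are (x, y) projections onto the surface plane of the
    scattered and specular ray directions (direction cosines). B > 0 keeps
    the value finite at the specular direction when g > 0.
    """
    dist = math.hypot(beta[0] - beta0[0], beta[1] - beta0[1])
    return A / (B + dist ** g)


def total_integrated_scatter(A, B, g, theta_inc_deg, n=400):
    """Integrate BSDF * cos(theta_s) dOmega over the reflected hemisphere.

    In direction-cosine space cos(theta_s) dOmega = d(beta_x) d(beta_y),
    so the integral is a plain midpoint sum over the unit disk.
    """
    beta0 = (math.sin(math.radians(theta_inc_deg)), 0.0)  # specular direction, taken along +x
    step = 2.0 / n
    total = 0.0
    for i in range(n):
        bx = -1.0 + (i + 0.5) * step
        for j in range(n):
            by = -1.0 + (j + 0.5) * step
            if bx * bx + by * by <= 1.0:  # only propagating directions
                total += abg_bsdf(A, B, g, (bx, by), beta0) * step * step
    return total


# Sanity check: g = 0 makes the BSDF constant (Lambertian), so TIS = pi * A / (B + 1).
print(total_integrated_scatter(0.1 / math.pi, 0.0, 0.0, 0.0))
```

Setting g = 0 reproduces a Lambertian (matte) surface, while a small B and a larger g concentrate scatter near the specular direction, as with a glossy finish.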

Second, coating vendors are notorious for not revealing the prescriptions of their coating designs. Consequently, engineers frequently have to work with predicted or measured data supplied by the vendor, if any is available. If the vendor can provide data for operating conditions that overlap with how the coating will be used, everything is fine. But this doesn't always happen: we have seen instances in which the coating vendor provided reflectivity data at normal incidence when the engineer intended to use the coating at 45° incidence, where reflectance differs and depends on polarization. On some occasions, however, a "best guess" is the only option.
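Why normal-incidence data cannot simply be reused at 45° is easy to see even without a coating. The sketch below applies the textbook Fresnel equations to a single bare interface; it is a stand-in to show the angle and polarization dependence, not a model of any vendor's multilayer coating, and the function name is our own.

```python
import math


def fresnel_reflectance(n1, n2, theta_deg):
    """Return (Rs, Rp) power reflectances of a bare interface, light going
    from index n1 into index n2 at incidence angle theta_deg."""
    ti = math.radians(theta_deg)
    sin_t = n1 * math.sin(ti) / n2          # Snell's law
    if sin_t >= 1.0:
        return 1.0, 1.0                     # total internal reflection
    ci, ct = math.cos(ti), math.sqrt(1.0 - sin_t ** 2)
    rs = (n1 * ci - n2 * ct) / (n1 * ci + n2 * ct)   # s-polarized amplitude
    rp = (n2 * ci - n1 * ct) / (n2 * ci + n1 * ct)   # p-polarized amplitude
    return rs * rs, rp * rp


for angle in (0, 45):
    rs, rp = fresnel_reflectance(1.0, 1.5, angle)
    print(f"{angle:2d} deg: Rs={rs:.4f}  Rp={rp:.4f}  mean={(rs + rp) / 2:.4f}")
```

For n = 1.5 glass, both polarizations reflect 4% at normal incidence; at 45°, Rs rises to about 9% while Rp drops below 1%, so a single normal-incidence number hides a large polarization split.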

The bottom line is that, for accurate modeling, an engineer must have measured data for the particular surface treatments and coatings selected.

Creating the light-source model

Next to surface properties, characterizing the light sources is the other significant error-prone step, because sources are often poorly characterized, if at all. Many source vendors publish only a cursory description of the radiant properties of their products; this is particularly true of filament-source manufacturers. Furthermore, sources can show lot-to-lot variations that can frustrate even the cautious engineer who insists on having a particular source characterized by a commercial measurement house. Even mounting and orientation can have an effect: gravity, for example, can deform a filament and shift its location.

Our experience is that a physical model of the source, including the glass envelope, support stalk(s), anode and cathode, and so on, yields the best results because it captures light/structure interactions such as obstruction by the stalks or refraction by the glass envelope. We've been less impressed with results based solely on measured source radiometry (frequently distributed as ray data), because such a source has no geometry for light to interact with.
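As a minimal sketch of one such interaction, obstruction, the code below emits isotropic rays from a point "filament" and tests each against an opaque sphere standing in for part of the lamp structure. The geometry, names, and dimensions are hypothetical, and a real source model would use the actual envelope and stalk shapes. A list of rays with no accompanying geometry has nothing to run this test against.

```python
import math
import random


def random_unit_vector(rng):
    """Isotropic direction: uniform z in [-1, 1] and uniform azimuth."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    s = math.sqrt(1.0 - z * z)
    return (s * math.cos(phi), s * math.sin(phi), z)


def hits_sphere(u, center, radius):
    """True if the ray from the origin along unit vector u meets the sphere."""
    b = sum(ui * ci for ui, ci in zip(u, center))      # u . c
    c2 = sum(ci * ci for ci in center) - radius ** 2   # |c|^2 - r^2 (> 0: source outside)
    # Roots of t^2 - 2 b t + c2 = 0 share the sign of b, so a hit in front of
    # the source needs b > 0 and a non-negative discriminant.
    return b > 0.0 and b * b - c2 >= 0.0


def blocked_fraction(center, radius, n_rays, seed=1):
    """Monte Carlo estimate of the fraction of emitted rays the sphere blocks."""
    rng = random.Random(seed)
    blocked = sum(hits_sphere(random_unit_vector(rng), center, radius)
                  for _ in range(n_rays))
    return blocked / n_rays


# Hypothetical geometry: a 1 mm-radius obstruction centered 5 mm from the filament.
center, radius = (0.0, 0.0, 5.0), 1.0
mc = blocked_fraction(center, radius, 200_000)
exact = (1.0 - math.sqrt(1.0 - (radius / 5.0) ** 2)) / 2.0  # solid-angle fraction of the shadow cone
print(f"Monte Carlo {mc:.4f}   analytic {exact:.4f}")
```

The closed-form value, (1 − cos α)/2 with sin α = r/d, checks the ray test; in a full tracer the blocked rays would go on to be absorbed, reflected, or scattered by the structure.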

Verifying performance

After the model is complete and has been validated, the analysis portion of the job is almost anticlimactic. Rays from the source are traced nonsequentially through the optomechanical system and accumulated at the detector(s), where the appropriate analyses are performed. Nonsequential ray tracing is important here because rays are free to strike surfaces in any order, not just the order the designer intended, so the trace can uncover unintended or "sneak" paths.
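The essential difference from a sequential trace fits in a few lines. In this 2-D sketch, every step tests the ray against all surfaces and keeps the nearest hit, so a ray can visit surfaces in an order nobody specified. The flat segments, the three surface kinds, and the example layout (a shiny mount above the optical axis) are hypothetical simplifications.

```python
import math


def intersect(origin, d, seg, eps=1e-9):
    """Distance t along the ray to segment seg = (p, q), or None if it misses."""
    (px, py), (qx, qy) = seg
    ex, ey = qx - px, qy - py
    denom = d[0] * ey - d[1] * ex            # 2-D cross product d x e
    if abs(denom) < eps:
        return None                           # ray parallel to the segment
    wx, wy = px - origin[0], py - origin[1]
    t = (wx * ey - wy * ex) / denom           # distance along the ray
    s = (wx * d[1] - wy * d[0]) / denom       # fractional position along the segment
    return t if t > eps and 0.0 <= s <= 1.0 else None


def trace(origin, d, surfaces, max_events=20):
    """Nonsequential trace: at each step test ALL surfaces, take the nearest hit.
    surfaces: list of (name, kind, segment), kind in {'mirror', 'detector', 'absorber'}.
    Returns the names of the surfaces the ray visited, in order."""
    path = []
    for _ in range(max_events):
        nearest = None
        for name, kind, seg in surfaces:
            t = intersect(origin, d, seg)
            if t is not None and (nearest is None or t < nearest[0]):
                nearest = (t, name, kind, seg)
        if nearest is None:
            break                             # ray leaves the system
        t, name, kind, seg = nearest
        path.append(name)
        origin = (origin[0] + t * d[0], origin[1] + t * d[1])
        if kind != "mirror":
            break                             # detector or absorber ends the ray
        (px, py), (qx, qy) = seg
        length = math.hypot(qx - px, qy - py)
        nx, ny = -(qy - py) / length, (qx - px) / length   # unit surface normal
        dot = d[0] * nx + d[1] * ny
        d = (d[0] - 2.0 * dot * nx, d[1] - 2.0 * dot * ny)  # specular reflection
    return path


surfaces = [("mount", "mirror", ((0.0, 2.0), (10.0, 2.0))),
            ("detector", "detector", ((10.0, -1.0), (10.0, 1.0)))]
print(trace((0.0, 0.0), (1.0, 0.0), surfaces))             # direct path
n = math.hypot(5.0, 2.0)
print(trace((0.0, 0.0), (5.0 / n, 2.0 / n), surfaces))     # sneak path off the mount
```

The second ray reaches the detector only by bouncing off the mount, exactly the kind of path a sequential trace through the lens surfaces would never report.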

While lens designers pride themselves on the efficiency of their merit functions (using as few rays as possible), system simulations are frequently conducted using "brute-force" algorithms in which millions of rays are traced, particularly when an irradiance or intensity uniformity calculation is required. Optical-engineering software computes irradiance and intensity distributions by dividing the detector(s) into differential areas (pixels) and accumulating the flux carried by each ray that intersects a given area. Because each pixel receives only a small fraction of the traced rays, the result contains statistical noise (random variations in power across the detector), and many rays must be traced to reduce it.
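The binning described above, and the noise it produces, can be sketched with a perfectly uniform illumination on a hypothetical 1-D detector of 100 pixels. Because the true irradiance is flat, any structure in the map is pure statistical noise, which falls roughly as one over the square root of the number of rays per pixel.

```python
import math
import random


def irradiance_map(n_rays, n_bins, seed=0):
    """Drop n_rays of equal flux uniformly on a unit-width 1-D detector and
    accumulate each ray's flux into the bin (pixel) where it lands."""
    rng = random.Random(seed)
    flux_per_ray = 1.0 / n_rays              # total source power = 1 (arbitrary units)
    bins = [0.0] * n_bins
    for _ in range(n_rays):
        x = rng.random()                     # hit position, 0 <= x < 1
        bins[int(x * n_bins)] += flux_per_ray
    return bins


def relative_noise(bins):
    """Standard deviation of the bin fluxes divided by their mean."""
    mean = sum(bins) / len(bins)
    var = sum((b - mean) ** 2 for b in bins) / len(bins)
    return math.sqrt(var) / mean


for n in (1_000, 100_000):
    print(f"{n:>7} rays: relative noise {relative_noise(irradiance_map(n, 100)):.3f}")
```

Tracing 100 times more rays cuts the pixel-to-pixel noise by roughly a factor of 10, which is why uniformity calculations quickly climb into the millions of rays.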

If, however, the user is interested only in the amount of power incident on the detector, far fewer rays need to be traced, because a power calculation converges much faster than a uniformity calculation: the power estimate draws on every ray that reaches the detector, whereas each pixel of an irradiance map receives only a small share of them. Depending on the specifics of a system, one can compute the amount of power reaching the detector with a few thousand rays, while computing the irradiance uniformity can require several orders of magnitude more rays.
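The gap can be estimated with binomial statistics. If each ray has probability p of contributing to a quantity, the estimate from N rays has a relative standard error of sqrt((1 − p)/(N p)), so N = (1 − p)/(p ε²) for a target error ε. The sketch below assumes, hypothetically, that half the rays reach the detector, that the detector is mapped onto 100 × 100 pixels, and that the illumination is flat so every pixel gets an equal share.

```python
import math


def rays_for_relative_error(prob, rel_err):
    """Rays needed so that an event with per-ray probability `prob`
    (reaching the detector, or landing in one pixel) is estimated to
    within `rel_err` (one standard deviation, binomial statistics)."""
    return math.ceil((1.0 - prob) / (prob * rel_err ** 2))


p_detector = 0.5       # hypothetical: half the emitted rays reach the detector
n_pixels = 100 * 100   # hypothetical 100 x 100 irradiance map
target = 0.01          # 1% relative error

n_power = rays_for_relative_error(p_detector, target)
n_uniform = rays_for_relative_error(p_detector / n_pixels, target)  # per-pixel, flat illumination
print(n_power, n_uniform, round(n_uniform / n_power))
```

Under these assumptions the power estimate needs on the order of 10^4 rays, while a 1% per-pixel map needs on the order of 10^8, roughly four orders of magnitude more. Relaxing the target to a few percent brings the power calculation down to a few thousand rays.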

Unexpected results

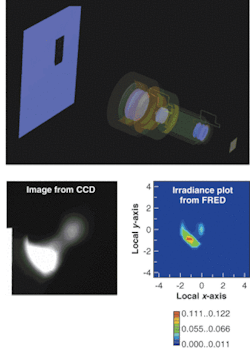

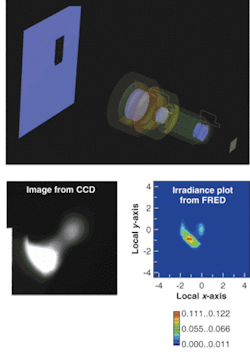

If the system does not perform as expected, today's software still comes to the rescue with a variety of diagnostic tools designed to track down offending rays and their effect on the desired irradiance/intensity distribution (see figure). These tools include irradiance/intensity plots and ray-path tables. The former are far superior to traditional spot diagrams, which convey no information about the actual amount of energy represented by the distribution of rays. Spot diagrams are useful only when each plotted ray represents an equal amount of power, so that the density of rays is proportional to irradiance/intensity. Virtually all refractive systems, for example, suffer from ghost reflections between surfaces, but the energy contained in the ghost image is often very small relative to the intended specular image. Qualifying a design with a traditional spot diagram would almost certainly overstate the significance of the irradiance produced by ghost rays.
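A short sketch shows the distortion. Assume, hypothetically, a trace with ray splitting in which 1,000 primary rays and 1,000 ghost rays reach the detector, and each ghost has undergone two reflections at uncoated surfaces of about 4% each. A spot diagram counts rays; an irradiance map sums the flux they carry.

```python
# Hypothetical detector hits: (ray type, flux relative to one primary ray).
# Each ghost has been reflected twice at ~4% uncoated surfaces: 0.04 * 0.04.
rays = [("primary", 1.0)] * 1000 + [("ghost", 0.04 ** 2)] * 1000


def shares(rays, label):
    """Return (fraction of plotted rays, fraction of detected flux) for one ray type."""
    count_frac = sum(1 for kind, _ in rays if kind == label) / len(rays)
    total_flux = sum(flux for _, flux in rays)
    flux_frac = sum(flux for kind, flux in rays if kind == label) / total_flux
    return count_frac, flux_frac


count_frac, flux_frac = shares(rays, "ghost")
print(f"ghost share of plotted rays:  {count_frac:.1%}")   # what a spot diagram conveys
print(f"ghost share of detected flux: {flux_frac:.2%}")    # what an irradiance map conveys
```

Half the dots on the spot diagram would be ghosts, yet they carry well under 1% of the detected power.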

Ray-path tables (spreadsheets in some software) answer the important diagnostic question: How did energy reach the detector? While capabilities vary, most software can identify which objects were intersected prior to reaching the detector, the number of unique paths to the detector, on which objects ghost and/or scattered rays were generated, and so on. In some cases, the actual ray trajectory can be overlaid on the view of the system to illustrate precisely how light reached the detector.
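The bookkeeping behind a ray-path table can be sketched simply: record, for every ray that reaches the detector, the ordered sequence of objects it intersected and the flux it carried, then group by that sequence. The object names and flux values below are hypothetical, and commercial packages track considerably more per path (for example, where ghost and scattered rays were generated).

```python
from collections import defaultdict


def ray_path_table(hits):
    """Group detector hits by the ordered sequence of objects each ray intersected.
    Returns rows (path, ray_count, flux, flux_fraction), largest flux first."""
    counts = defaultdict(int)
    flux = defaultdict(float)
    for path, f in hits:
        counts[path] += 1
        flux[path] += f
    total = sum(flux.values())
    rows = [(p, counts[p], flux[p], flux[p] / total) for p in flux]
    return sorted(rows, key=lambda row: row[2], reverse=True)


# Hypothetical detector hits: (objects intersected, in order; flux carried by the ray).
hits = [
    (("source", "lens_front", "lens_back", "detector"), 0.9),
    (("source", "lens_front", "lens_back", "detector"), 0.9),
    (("source", "barrel_wall", "lens_back", "detector"), 0.05),      # scatter off the barrel
    (("source", "lens_front", "lens_back", "lens_front", "lens_back", "detector"), 0.002),  # ghost
]
for path, n, f, frac in ray_path_table(hits):
    print(f"{frac:7.2%}  {n:3d} rays  " + " -> ".join(path))
```

Sorting by flux rather than ray count puts the paths that actually matter at the top of the table.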

Acknowledgment

The authors would like to thank Jim Michalski of Edmund Industrial Optics for his support.

RICHARD PFISTERER is president of Photon Engineering, P.O. Box 31316, Tucson, AZ 85751. DANIEL DILLON is a development engineer at Edmund Industrial Optics, 6400 East Grant Road, Suite 100, Tucson, AZ 85715.