Miniature monocentric camera records details of scene while maintaining extremely wide field of view

San Diego, CA--A monocentric camera lens developed by researchers at the University of California, San Diego (UCSD) achieves the optical performance of a full-size wide-angle lens in a device less than one-tenth of the volume. In a monocentric lens, all lens surfaces have a common center of curvature, giving the lens spherical rotational symmetry: this means that there is no particular optical axis, the image field is spherical, and, barring lens misalignments and tolerance errors, all points in the field have exactly the same point-spread function (PSF).

If the lens is well designed with a good PSF, the whole field will automatically be imaged well. Several approaches exist for dealing with the curved image field: 1) accept it, 2) add a final element that is not spherically symmetric to flatten the field, which also sacrifices the monocentric properties, or 3) capture the image on a curved surface, for example with a fiber-optic bundle.

One pre-existing example of a monocentric lens is the "Gigagon" lens in the Gigapixel camera developed at Duke University (Durham, NC).

Pan and zoom with no moving parts

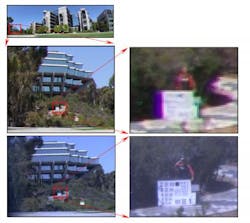

The new UCSD device can bring anything between 0.5 and 500 m away into focus and has a 0.2 mrad resolution, equivalent to 20/10 human vision. Such a system could enable high-resolution imaging in micro-unmanned aerial vehicles (micro UAVs), or smartphone photos more comparable to those from a full-size single-lens reflex (SLR) camera, say the researchers. Such cameras could pan and zoom with no moving parts.
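To put the 0.2 mrad figure in concrete terms, the short Python sketch below converts it into the smallest feature that could be resolved at a given distance, using the small-angle approximation (feature size is roughly distance times angle). The function name and the sample distances are illustrative choices, not anything from the researchers, and the estimate ignores atmospheric and sensor effects.

```python
import math

# Angular resolution quoted in the article: 0.2 milliradian.
ANGULAR_RESOLUTION_RAD = 0.2e-3


def smallest_resolvable_feature(distance_m, angular_resolution_rad=ANGULAR_RESOLUTION_RAD):
    """Return the approximate smallest resolvable feature size in meters.

    Uses the small-angle approximation: size ~ distance * angle.
    This is a back-of-envelope estimate, not a model of the actual camera.
    """
    return distance_m * angular_resolution_rad


if __name__ == "__main__":
    # The near and far limits (0.5 m and 500 m) come from the article;
    # 50 m is an arbitrary intermediate distance added for illustration.
    for distance in (0.5, 50.0, 500.0):
        size_mm = smallest_resolvable_feature(distance) * 1000.0
        print(f"{distance:6.1f} m -> about {size_mm:.2f} mm")
```

Under these assumptions, the resolvable feature size scales linearly with distance, from about a tenth of a millimeter at the 0.5 m near limit to about 10 cm at 500 m.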

"The major commercial application may be compact wide-angle imagers with so much resolution that they'll provide wide-field pan and 'zoom' imaging with no moving parts," says project leader Joseph Ford, a professor in the Jacobs School of Engineering at UCSD.

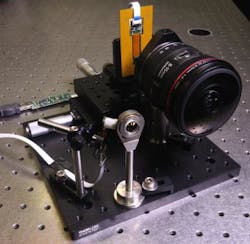

The UCSD researchers will describe their novel device at The Optical Society's (OSA) Annual Meeting, Frontiers in Optics (FiO) 2013, taking place Oct. 6-10 in Orlando, FL.

Although researchers have tried to use monocentric lenses for high-resolution wide-angle viewing in the past, they ran into two main problems. First, they had trouble conveying the rich information collected by the lens to electronic sensors that could record the image. Ford's team addressed this problem using a dense array of glass optical fiber bundles that are polished to a concave spherical shape on one side so that they perfectly align with the lens elements' common center of curvature.

A second problem involved focusing. Researchers had expected that the fibers would have to move in and out to focus at different distances, or that the lens would provide perfect focus in only a single direction. Ford's team showed that changes in the axial distance between the fibers and the lens did not distort the image.

In his talk, Ford will describe a prototype system that pairs a monocentric lens of 12 mm focal length, making it ultra-wide-angle, with a single imaging fiber bundle connected to a 5 megapixel image sensor. Ford and his colleagues at UCSD and Distant Focus Corporation (Champaign, IL) are currently assembling a 30 megapixel prototype and plan to go even bigger in the future. "Next year, we'll build an 85 megapixel imager with a 120° field of view, more than a dozen sensors, and an f/2 lens -- all in a volume 'roughly the size of a walnut,'" he says.
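As a rough plausibility check on these numbers, the Python sketch below counts how many 0.2 mrad resolution elements fit into a 120° field, and how large one such element is on the spherical image surface of a 12 mm focal length monocentric lens. It rests on several assumptions that the article does not state: the field is treated as a circular cone, the 0.2 mrad resolution is assumed to hold for the planned imager, the image sphere radius is taken to equal the focal length (object at infinity), and one resolution element is counted as one pixel. The function names are illustrative.

```python
import math

ANGULAR_RESOLUTION_RAD = 0.2e-3  # 0.2 mrad, quoted in the article
FIELD_OF_VIEW_DEG = 120.0        # planned imager, quoted in the article
FOCAL_LENGTH_M = 12e-3           # current prototype lens, quoted in the article


def cone_solid_angle(full_angle_deg):
    """Solid angle in steradians of a circular cone with the given full apex angle."""
    half_angle = math.radians(full_angle_deg / 2.0)
    return 2.0 * math.pi * (1.0 - math.cos(half_angle))


def resolvable_spots(full_angle_deg, angular_resolution_rad):
    """Approximate count of resolution elements: solid angle / (resolution squared)."""
    return cone_solid_angle(full_angle_deg) / angular_resolution_rad ** 2


def spot_size_on_image_sphere(focal_length_m, angular_resolution_rad):
    """Arc length in meters subtended by one resolution element on a sphere of radius f."""
    return focal_length_m * angular_resolution_rad


if __name__ == "__main__":
    spots = resolvable_spots(FIELD_OF_VIEW_DEG, ANGULAR_RESOLUTION_RAD)
    spot_um = spot_size_on_image_sphere(FOCAL_LENGTH_M, ANGULAR_RESOLUTION_RAD) * 1e6
    print(f"Resolution elements in the field: about {spots / 1e6:.1f} million")
    print(f"Size of one element on the image surface: about {spot_um:.1f} micrometers")
```

Under these assumptions the estimate comes to roughly 79 million resolution elements, the same order as the 85 megapixel target, with each element about 2.4 micrometers across on the image surface. This is only a consistency check; a real design would also have to account for sampling, sensor tiling, and the actual shape of the field.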

The project is part of the DARPA-funded "SCENICC" (Soldier Centric Imaging via Computational Cameras) program.

About the Author

John Wallace

Senior Technical Editor (1998-2022)

John Wallace was with Laser Focus World for nearly 25 years, retiring in late June 2022. He obtained a bachelor's degree in mechanical engineering and physics at Rutgers University and a master's in optical engineering at the University of Rochester. Before becoming an editor, John worked as an engineer at RCA, Exxon, Eastman Kodak, and GCA Corporation.