Programmable optics turn sensors into perception platforms for LiDAR

Physical AI is no longer a futuristic concept—it’s rapidly becoming part of our reality. Robots are moving out of structured environments and into warehouses, streets, and shared human spaces. Autonomous systems are expected not only to perform simple tasks, but to operate safely within dynamic and unpredictable conditions. This shift is exposing a limitation in how sensing systems are designed.

For decades, LiDAR development focused on improving fixed specifications: more range, wider field of view, higher resolution, faster frame rates. These improvements were largely applied uniformly across the sensor’s field of view. That is no longer good enough. The real world isn’t uniform, and neither are the sensing requirements of physical AI systems.

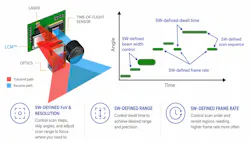

An autonomous mobile robot navigating close to the ground needs rapid updates near the floor, but not necessarily long-range performance in every direction. A robotaxi must maintain long-range awareness near the horizon while simultaneously detecting objects close to the vehicle. A humanoid robot alternates between locomotion, manipulation, and interaction—and each requires a different sensing profile. Physical AI needs not just better sensors but more adaptable ones (see Fig. 1).

A shift from fixed performance to software-defined LiDAR

Traditional LiDAR systems are fundamentally constrained by hardware-defined behavior. Every part of the scene is treated equally, whether it requires long-range precision or merely short-range awareness. This approach is inherently inefficient.

Instead of treating the scene uniformly, perception can be dynamically allocated by adjusting range, update rate, and signal density across the field of view. In practical terms: Near-field regions can be updated rapidly with lower range requirements, far-field regions can be prioritized for range and signal strength, and critical regions can be allocated higher precision and update frequency.

This is the essence of software-defined LiDAR: A sensing system in which performance isn’t fixed in hardware but configured in software based on context.

Changes at the optical layer

This level of adaptability requires a fundamentally different way of controlling light (see Fig. 2).

Light control metasurfaces (LCM) enable fully electronic beam steering without mechanical motion. Unlike traditional scanning approaches, beam steering with LCM is nonsequential. The system doesn't need to sweep through intermediate angles to reach a target direction. It can jump directly from one angle to another, skip regions entirely, or dwell on a specific direction as needed. There is no inertia, no mechanical latency, and no need to follow a fixed scan pattern.
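The difference between a sequential sweep and a nonsequential pattern can be illustrated with a small scheduling sketch. It is not taken from any vendor API: the function names, the 17 steering angles, and the choice of 0° as the priority direction are all assumptions made for illustration. The sketch compares how many steering steps pass between consecutive visits to the priority direction under each pattern.

```python
from itertools import cycle, islice

def sequential_sweep(angles):
    """Fixed scan pattern: visit every angle in order, then repeat."""
    return cycle(angles)

def interleaved_schedule(angles, priority_angle):
    """Nonsequential pattern: jump back to priority_angle between
    visits to each of the other angles (hypothetical policy)."""
    others = [a for a in angles if a != priority_angle]
    def generate():
        for angle in cycle(others):
            yield priority_angle
            yield angle
    return generate()

def revisit_interval(schedule, angle, steps=1000):
    """Largest number of steps between consecutive visits to `angle`."""
    visits = [i for i, a in enumerate(islice(schedule, steps)) if a == angle]
    return max(later - earlier for earlier, later in zip(visits, visits[1:]))

# 17 hypothetical steering angles, in degrees (illustrative values only)
angles = list(range(-80, 81, 10))

sweep_gap = revisit_interval(sequential_sweep(angles), 0)
jump_gap = revisit_interval(interleaved_schedule(angles, 0), 0)
print(f"sequential sweep revisits 0 deg every {sweep_gap} steps")
print(f"interleaved schedule revisits 0 deg every {jump_gap} steps")
```

In this toy model the sweep returns to the priority direction only once per full cycle, while the interleaved pattern returns after every other step. The other angles are revisited less often as a result.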

The ability to control beam width is equally important. A narrow beam concentrates energy into fewer receiver pixels, which maximizes signal per pixel and enables longer-range detection. A broader beam distributes this energy across more pixels to enable faster readout for regions where range is less critical.
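A rough numerical sketch of this trade-off follows. It rests on simplifying assumptions that do not come from the article: the return signal is split evenly across the illuminated pixels, the per-pixel return falls off with the square of distance (a diffuse target that fills the beam), the detection threshold is fixed, and ambient noise is ignored. The function names are hypothetical.

```python
import math

def signal_per_pixel(total_signal, pixels_illuminated):
    """Split a fixed return-signal budget evenly across illuminated pixels."""
    return total_signal / pixels_illuminated

def relative_range(pixels_illuminated):
    """Detection range relative to a beam that illuminates one pixel.

    Assumes per-pixel signal scales as 1/R^2 and a fixed detection
    threshold, so range scales as 1/sqrt(pixels_illuminated).
    """
    return 1.0 / math.sqrt(pixels_illuminated)

for pixels in (1, 4, 16):
    print(
        f"{pixels:>2} pixels: "
        f"signal per pixel = {signal_per_pixel(1.0, pixels):.4f}, "
        f"relative range = {relative_range(pixels):.2f}"
    )
```

Under these assumptions, a beam that is four times wider delivers a quarter of the signal to each pixel and reaches about half the range, but it covers four times the area per step. A real link budget would depend on the detector, the optics, and the noise environment.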

In effect, the LCM directly controls how optical energy, time, and resolution are distributed across the scene, which enables true programmability at the sensing level.

Rethinking field of view: Why 180° matters

At a surface level, the value of a 180° field of view is straightforward. Wider horizontal coverage reduces blind spots, improves situational awareness, and enables surround-view perception with fewer sensors. For mobile platforms, whether autonomous vehicles, industrial equipment, or robots operating in shared environments, this translates directly into safer operation and simpler system design.

The real challenge is achieving this within a solid-state architecture in a way that preserves performance.

LCMs enable wide-angle beam steering of roughly 160°, which can be extended to 180° and beyond using relatively simple optics. Unlike in traditional approaches, field of view isn’t tightly coupled to optical power, so systems can expand coverage without inherently sacrificing performance.

The achievable field of view is also shaped by the receiver architecture. LCM-based systems align scanning with sensor readout to maintain efficient mapping between transmitted illumination and receiver pixels even at wide angles.

Mechanical systems can achieve broad coverage but introduce latency and inefficiency, and often revisit the same point only after a full scan cycle. Flash-based systems, on the other hand, distribute fixed optical power across the entire scene and reduce signal per pixel as field of view increases.

LCM-based systems avoid both constraints and maintain wide coverage while directing optical energy where it is needed. A practical example is a side-mounted sensor on a vehicle, where the field of view can be segmented into a near-field ground region for curbs and close obstacles, a horizon-aligned region for long-range detection, and an upper region for overhead structures. Each region can be sensed differently, so beam width, dwell time, and update rate can be allocated accordingly.
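The side-mounted example can be sketched as a small configuration table. Everything in it is hypothetical: the `RegionProfile` type, the elevation boundaries, and the beam width, dwell time, and update rate values are placeholders chosen for illustration, not specifications of any real sensor.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class RegionProfile:
    name: str
    elev_min_deg: float    # lower elevation bound (inclusive)
    elev_max_deg: float    # upper elevation bound (exclusive)
    beam_width_deg: float  # wider beam: faster coverage, shorter range
    dwell_us: float        # time spent at each steering angle
    update_hz: float       # how often the region is revisited

# Placeholder values for a side-mounted sensor (illustrative only)
SIDE_SENSOR_PROFILES = [
    RegionProfile("ground", -45.0, -5.0, beam_width_deg=4.0, dwell_us=20.0, update_hz=30.0),
    RegionProfile("horizon", -5.0, 5.0, beam_width_deg=0.5, dwell_us=100.0, update_hz=10.0),
    RegionProfile("overhead", 5.0, 45.0, beam_width_deg=2.0, dwell_us=40.0, update_hz=5.0),
]

def profile_for(elevation_deg: float,
                profiles=SIDE_SENSOR_PROFILES) -> Optional[RegionProfile]:
    """Return the profile whose elevation band contains the angle, if any."""
    for profile in profiles:
        if profile.elev_min_deg <= elevation_deg < profile.elev_max_deg:
            return profile
    return None

for elevation in (-20.0, 0.0, 30.0):
    profile = profile_for(elevation)
    print(f"{elevation:+.0f} deg -> {profile.name}: "
          f"{profile.beam_width_deg} deg beam, {profile.update_hz} Hz")
```

Keeping the regions in a plain data structure reflects the software-defined idea: changing how a region is sensed means editing a table entry rather than changing hardware.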

Another advantage comes from scanning in the vertical direction, which allows the system to observe the entire horizon simultaneously rather than sequentially. This improves tracking continuity and reduces the likelihood of missing fast-moving objects.

Frame rate is a resource, not a constraint

Frame rate is typically treated as a fixed system limit. In practice, it should be a controllable resource.

With LCM-based LiDAR, this is achieved by dynamically controlling how the scene is illuminated. Widening the beam illuminates multiple receiver rows simultaneously, which reduces scan time and enables higher effective frame rates.
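As a back-of-the-envelope sketch, the effect of beam width on frame rate can be modeled by counting steering steps per frame. The model is an assumption made for illustration: it uses a hypothetical 64-row receiver and a 1 ms dwell per step, and it ignores steering settle time and readout overhead.

```python
import math

def frame_rate_hz(total_rows, rows_per_step, dwell_s):
    """Effective frame rate when each steering step illuminates
    `rows_per_step` receiver rows for `dwell_s` seconds."""
    steps_per_frame = math.ceil(total_rows / rows_per_step)
    return 1.0 / (steps_per_frame * dwell_s)

TOTAL_ROWS = 64   # hypothetical receiver height
DWELL_S = 1e-3    # hypothetical dwell time per step

for rows_per_step in (1, 4, 16):
    rate = frame_rate_hz(TOTAL_ROWS, rows_per_step, DWELL_S)
    print(f"{rows_per_step:>2} rows per step -> {rate:.1f} frames/s")
```

In this simplified model, frame rate scales with the number of rows illuminated per step. The cost is lower signal per pixel, and therefore shorter range.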

At the same time, the system can control three key parameters: Beam width (how much area is illuminated at once), dwell time (how long the system remains at a given angle), and scan frequency (how often a region is revisited).

Wide beams enable higher update rates with lower range, while narrow beams concentrate energy for long-range detection. These modes can be applied simultaneously across different regions of the field of view. In this model, frame rate is no longer a fixed constraint; the system can allocate it intelligently based on the needs of the scene.
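One way to reason about mixing these modes is to treat scan time as a shared budget. The sketch below is illustrative, not a description of any real scheduler: it assumes each region consumes a fraction of every second equal to its steps per pass, times its dwell time, times its revisit rate. The region names and all numbers are hypothetical.

```python
import math

def region_load(rows, rows_per_step, dwell_s, update_hz):
    """Fraction of each second spent scanning one region."""
    steps_per_pass = math.ceil(rows / rows_per_step)
    return steps_per_pass * dwell_s * update_hz

def total_load(regions):
    """Sum of per-region loads; the mix is schedulable only if <= 1.0."""
    return sum(region_load(**params) for params in regions.values())

# Hypothetical mix: a fast wide-beam near field plus a slower narrow-beam far field
regions = {
    "near_field": {"rows": 32, "rows_per_step": 8, "dwell_s": 50e-6, "update_hz": 60},
    "far_field": {"rows": 16, "rows_per_step": 1, "dwell_s": 500e-6, "update_hz": 20},
}

load = total_load(regions)
fits = load <= 1.0
print(f"scan time used: {load:.1%}, fits in budget: {fits}")
```

Raising a region's update rate or narrowing its beam increases its share of the budget, which makes the trade-offs between regions explicit.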

These capabilities represent a shift from fixed-function sensors to adaptive sensing platforms. Instead of designing around hardware limitations, systems can now shape perception in real time to align sensing behavior with the demands of autonomy. This will allow LiDAR to evolve from a passive input into an active, responsive component of physical AI.

About the Author

Apurva Jain

Apurva Jain is senior vice president of product for Lumotive (Seattle, WA).