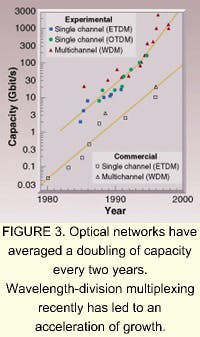

It is estimated that, worldwide, optical fiber is being installed at the rate of 2000 miles per hour. Transmission bandwidths exceeding 1 Tbit/s have been demonstrated in the laboratory, and systems with an aggregate bandwidth of 400 Gbit/s are being installed. While early developers of laser diodes had the foresight to predict their enormous potential for communications, the pace of development in the last decade, in particular, has outpaced the most optimistic forecasts.

The story of optical communications is in large part the story of the development of fiberoptics. It was by no means clear at the outset that fiberoptics would become the backbone of optical communications. Numerous early tests of communication using lasers were simply line-of-sight. However, “free space” as a communications medium at visible wavelengths suffers from the phenomena that obscure ordinary vision—rain, smoke, and the like. Developers turned to enclosed systems to guarantee transmission.

A diet high in fiber

At the time that laser diodes were being invented, fiberoptics had been in use for more than a decade in medical applications. These medical probes provided the foundation for modern fiber design (see Fig. 1). The first optical probes used to view the interior of the stomach were inspired by a demonstration of sword swallowing, and were just about as safe. Flexible bundles of optical fibers arose from demands by doctors for safety and comfort, but, to preserve the transmission of light by total internal reflection, the individual fibers in the bundle needed to have cladding. Cladding the fibers prevented light from leaking out where the bundled fibers touched one another. The idea of wrapping each fiber with glass of a slightly lower index of refraction than the fiber core was the inspiration of Brian O'Brien at the University of Rochester (NY) in the early 1950s and derived from his studies of the way rods and cones in the eye transmit light back to the retina. With flexible optical cables in hand, endoscopy became a commonplace medical tool in the 1960s.

Optical fiber has significant advantages as a medium, aside from the increased bandwidth that it carries. Optical cable is at least ten times smaller and lighter than copper and coaxial cable of similar capacity. It does not corrode, is unaffected by electrical interference, and, at least with current technology, is immune to security breaches (“bugging”).

A challenge met

However, fibers that were satisfactory for medical use, where an image travels a few meters, were far from useful for communication over kilometers. The clearest fibers available in 1965 had a loss of 1000 dB/km, or 1 dB per meter, so that only 0.1% of the light survived a 30-meter run. Even if laser light of sufficient intensity had been available to overcome these losses, input power in optical cable is limited to a few milliwatts by nonlinear effects in the fiber.
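The decibel arithmetic behind this comparison is simple: the transmitted fraction is 10 raised to the power of minus the total loss in decibels divided by 10. The short Python sketch below applies it to the 1000-dB/km figure over a few illustrative lengths. The function name and the chosen lengths are assumptions made for the example, not values from any particular system.

```python
def transmitted_fraction(loss_db_per_km: float, length_km: float) -> float:
    """Fraction of the input optical power remaining after length_km of fiber."""
    total_loss_db = loss_db_per_km * length_km
    return 10 ** (-total_loss_db / 10)


if __name__ == "__main__":
    loss_1965 = 1000.0  # dB/km, the 1965 figure quoted in the text
    # Illustrative lengths: a short medical probe, a 30-m run, a 1-km link.
    for length_m in (3, 30, 1000):
        fraction = transmitted_fraction(loss_1965, length_m / 1000)
        print(f"{length_m:5d} m -> transmitted fraction {fraction:.3g}")
```

Over a few meters about half the light gets through, which is workable for an endoscope. Over a full kilometer the surviving fraction is vanishingly small, which is why such fiber was useless for communication.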

Network developers turned to alternatives that in hindsight seem unpromising, such as hollow tubes containing numerous focusing lenses made of gas, or abandoning optical frequencies altogether and using microwaves in waveguides. Picturephone, a video telephone system that AT&T demonstrated at the New York World’s Fair in 1964, relied on this latter technology and was described as nearly ready for commercial release.

But improvements in fiberoptics would soon render competing technologies obsolete. Much of the credit for these developments belongs to Charles Kao, a researcher who predicted in 1966 that fiber losses would be reduced to less than 20 dB/km, a value he calculated as the threshold for successful data transmission. Kao’s tireless work at Standard Telecommunication Laboratories in England, together with his evangelical zeal, prodded researchers worldwide.

Kao's vision was realized in 1970 by a team at Corning Glass Works (Corning, NY) that produced 200 meters of fiber with a loss of 16 dB/km, an achievement that many contemporaries had said was impossible. Coincidentally, this was the same year that the first continuous-wave, room-temperature diode laser was made at the Ioffe Institute in St. Petersburg. The pairing of these breakthroughs pushed optical-fiber-based networks to the forefront of communications research.

Overview of optical fiber

Fibers are formed from pure silicon dioxide doped to control the refractive index, typically with germanium or boron. The fibers are drawn from a preform of the core and cladding together, made in a rotating high-temperature furnace. A recent development in fiber manufacturing is the use of “sol-gel” technology. Known for more than 100 years as a means of forming glass without the use of high temperatures, sol-gel takes its name from the gelling of a colloidal mix (the sol) into a vitreous system. Today it is used as a means of precisely controlling minute levels of dopants in the fiber preform.

A modern fiber is 125 µm in diameter, but most of this is cladding, made thicker than optically necessary for ease of handling and protection of the core. The core itself is either 50 µm for multimode fiber or about 9 µm for single-mode fiber. The refractive index for multimode fiber is usually graded across the core diameter to reduce mode dispersion.

The cladding on the fiber core does more than maintain its total internal reflection. Unclad fiber would need to be no more than about 0.2 µm in diameter to constrain light to a single mode. By altering the boundary conditions of the optical waveguide, the cladding allows the use of a much larger fiber for single-mode transmission than would otherwise be possible. Present-day single-mode fiber cores, surrounded by a cladding layer about 1% lower in refractive index, are more than 40 times thicker than the microscopic unclad diameter.
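The way cladding relaxes the single-mode size limit can be sketched with the standard step-index condition, in which a fiber carries one mode when its normalized frequency V = (π d / λ) · sqrt(n_core² − n_clad²) stays below about 2.405. The Python sketch below compares a bare core in air with a core clad in glass of slightly lower index. The core index of 1.45, the 1.3-µm wavelength, and the function name are illustrative assumptions, not figures from this article. The exact diameters depend strongly on those choices, so the output shows the trend rather than checking the numbers quoted above.

```python
import math

V_CUTOFF = 2.405  # step-index fibers are single-mode when V is below this value


def single_mode_max_core_diameter_um(n_core: float, n_clad: float,
                                     wavelength_um: float) -> float:
    """Largest core diameter (in µm) that still satisfies V < V_CUTOFF."""
    numerical_aperture = math.sqrt(n_core**2 - n_clad**2)
    return V_CUTOFF * wavelength_um / (math.pi * numerical_aperture)


if __name__ == "__main__":
    n_core = 1.45         # assumed index of doped silica
    wavelength_um = 1.3   # assumed operating wavelength

    # Bare core surrounded by air (index 1.0): large index step, tiny core.
    unclad = single_mode_max_core_diameter_um(n_core, 1.0, wavelength_um)
    # Core surrounded by glass about 1% lower in index: small step, larger core.
    clad = single_mode_max_core_diameter_um(n_core, 0.99 * n_core, wavelength_um)

    print(f"unclad limit: {unclad:.2f} µm")
    print(f"clad limit:   {clad:.2f} µm")
    print(f"ratio:        {clad / unclad:.1f}x")
```

Because the limiting diameter scales inversely with the square root of the index difference term, shrinking the index step between core and surroundings is what allows a core large enough to handle.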

But in the 1970s coupling light into a fiber 10 µm in diameter was not a practical option for commercial installation. Multimode transmission provided sufficient bandwidth for early applications, and the 50-µm fibers were large enough to couple light from the source laser. Multimode fibers were combined with the greatly improved 850-nm laser diodes to form the first optical networks. The first public optical network was installed in Long Beach, CA, by GTE in 1977.

Submarine leads the way

In the 1970s, companies whose business was providing long-distance communication via submarine coaxial cables began losing revenue to new satellite-based providers. Desperate to keep up, they turned to fiberoptic cable to regain the edge in system performance. Underwater cable, stretching over thousands of kilometers and expensive to install and repair, is a demanding application that has led to major improvements in optical network technology (see photo at top of this page).

The length of the fiber needed for submarine application requires single-mode transmission. Multimode pulses broaden in fiber due to the frequency dispersion of the pulse spectra by the fiber glass. Over a long distance, the data pulses will overlap, with resulting loss of information unless transmission speeds are limited. Single-mode transmission nearly eliminates this restriction.

The difficulties in coupling and connecting single-mode fiber were less of a consideration for submarine cable, which is preassembled before going shipboard. In addition to single-mode fiber, early submarine installations also made use of the newly developed indium gallium arsenide phosphide (InGaAsP) laser diodes at 1300 nm, made specifically to take advantage of the minimal dispersion and 0.5-dB/km loss of silica at that wavelength (see Fig. 2). At this level, glass a kilometer thick is as clear as a window. The new lasers reduced the number of expensive repeaters required to boost signals across the ocean.

Sharks and hydrogen

After years of extensive tests, numerous submarine fiber cables were installed during the 1980s. The underwater cables were not without their own peculiar problems, however. Sharks, attracted by the current fed to the repeaters, bit into the cables. More of a concern was an early and mysterious deterioration of the fiber, which turned out to be the result of hydrogen generated from the electrolysis of seawater interacting with phosphorus in the fiber glass. Removing the phosphorus eliminated the problem.

The demands of the submarine application led to still another generation of optical networking with the installation of 1550-nm systems. Silica loss is lowest at this wavelength, typically around 0.2 dB/km, and the dispersion minimum at 1300 nm can be shifted to the longer wavelength by doping the fiber. New laser diodes were again developed specifically for this fiber window, which also coincided precisely with the technologies that made wavelength-division multiplexing (WDM) possible.
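The link between lower loss and fewer repeaters can be sketched with a simple loss budget: the distance a signal can travel before regeneration is roughly the allowable loss divided by the fiber attenuation. The Python example below uses the 0.5- and 0.2-dB/km figures from the text. The 30-dB budget is a hypothetical round number chosen for illustration, and the sketch ignores dispersion, splices, and connectors.

```python
def max_span_km(loss_budget_db: float, fiber_loss_db_per_km: float) -> float:
    """Longest fiber run whose attenuation stays within the loss budget."""
    if fiber_loss_db_per_km <= 0:
        raise ValueError("fiber loss must be positive")
    return loss_budget_db / fiber_loss_db_per_km


if __name__ == "__main__":
    LOSS_BUDGET_DB = 30.0  # hypothetical budget between repeaters (assumption)

    # Attenuation values quoted in the text for the two silica windows.
    for wavelength_nm, loss in ((1300, 0.5), (1550, 0.2)):
        span = max_span_km(LOSS_BUDGET_DB, loss)
        print(f"{wavelength_nm} nm at {loss} dB/km -> about {span:.0f} km per span")
```

Under this assumed budget, moving from 1300 nm to 1550 nm stretches each span by a factor of 2.5, which is the kind of saving that matters when every repeater sits on the ocean floor.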

Driving the next generation

The development of high-performance PCs, including systems that were readily adapted as network servers, combined with the explosive growth of the Internet in the 1990s to cause an exponential increase in telecom traffic.

Network capacity would have been overwhelmed but for the timely development of WDM and its associated technologies, such as optical amplifiers (see Fig. 3).

Wavelength-division multiplexing is evolving from a means of increasing the capacity of an installed fiber to the basis for future all-optical networks. Current networks use numerous subsystems that must convert light to voltage for such basic functions as routing traffic. These points of conversion are the main bottleneck for the infrastructure, for example where the network finally reaches into the home. The next-generation network will use optical components instead, with an anticipated increase in data flow that has led some observers to predict that the communications revolution is only just beginning.

ACKNOWLEDGMENTS

We would like to thank the following individuals and organizations for providing images used in the Semiconductor Lasers 2000 series timeline: Michael W. Davidson, Florida State University; GE Research and Development Center; Zhores I. Alferov; Nick Holonyak, University of Illinois; Dan Botez, University of Wisconsin-Madison; Lucent Technologies, Bell Labs; Connie Chang-Hasnain, University of California Berkeley; Nichia Chemical Corporation; Jack Jewell, Picolight Corp. (Ed.)

Many of the historical details of this article are drawn from City of Light by Jeff Hecht, Oxford University Press.

Next month the series will describe how WDM and associated technologies allowed optical networks to keep pace with the growth of the Internet.

About the Author

Stephen J. Matthews

Contributing Editor

Stephen J. Matthews was a Contributing Editor for Laser Focus World.