Optical fiber-based TuLIPSS captures ‘snapshot’ hyperspectral images

Conventional hyperspectral imaging systems use a “whiskbroom” (point-by-point) or “push-broom” (line-by-line) scanning technique. However, many “snapshot” systems have been developed using parallel acquisition to obtain spatial and spectral data simultaneously. Compared to scanning methods, snapshot imagers can minimize motion artifacts and record fast-changing events using high-speed data analysis.

Despite the popularity of snapshot hyperspectral imagers, these systems often use filter-based optics that divide the illumination of the entire scene, reducing signal-to-noise ratio and imaging resolution. To combat this drawback, scientists at Rice University (Houston, TX) developed a hyperspectral snapshot imager with the highest optical fiber count to date, consisting of a custom fiber configuration with a 6 × 6 mm input area, roughly 25 × 13 mm output dimension, and 100 mm length.¹ Dubbed TuLIPSS (tunable light-guide image processing snapshot spectrometer), the device can capture data across the visible and near-infrared spectrum.

Distributing spatial information

The input to the hyperspectral imager consists of 90 × 100 multicore fibers (36 cores in each fiber arranged in a 6 × 6 block) that are organized to have 45 × 200 fibers at the output with 500 µm gaps between adjacent rows. Overlapping of the cores in the multicore fiber bundles is avoided by coupling a subset of the cores in the fiber bundle with a lenslet array or photomask.
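The fiber counts quoted above can be checked with a few lines of arithmetic. The sketch below uses only the article's figures; the `output_position` function is a hypothetical row-major remap added for illustration, since the article does not say which input fiber lands at which output position.

```python
# Figures quoted in the article.
INPUT_ROWS, INPUT_COLS = 90, 100
OUTPUT_ROWS, OUTPUT_COLS = 45, 200
CORES_PER_FIBER = 6 * 6  # each multicore fiber holds a 6 x 6 block of cores


def output_position(in_row, in_col):
    """Hypothetical row-major remap from the input grid to the output rows.

    This ordering is an assumption made for illustration only.
    """
    flat_index = in_row * INPUT_COLS + in_col
    return divmod(flat_index, OUTPUT_COLS)  # (output row, position in row)


input_fibers = INPUT_ROWS * INPUT_COLS      # 9,000 fibers at the input
output_fibers = OUTPUT_ROWS * OUTPUT_COLS   # 9,000 fibers at the output
total_cores = input_fibers * CORES_PER_FIBER

print(input_fibers, output_fibers, total_cores)
print(output_position(89, 99))  # last input fiber lands at the end of the last row
```

Rearranging the fibers conserves their number. Only the layout changes, from a dense square at the input to spaced rows at the output.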

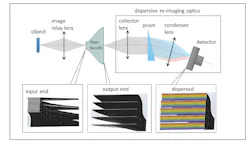

In the imaging setup, the scene is sampled at the input end of the densely packed fiber bundle (see figure). At the output, however, the fibers are realigned into rows with spaces between them (unlike conventional fiber-based systems that use a single row). This output is then imaged such that the detector captures both spectral and spatial content, with the size of the acquired datacube limited only by the number of pixels on the detector. Adjusting the gap between the fiber rows at the output allows a tradeoff between spectral and spatial sampling in the 3D datacube.
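The gap tradeoff can be pictured with a toy model. It assumes that each fiber row's spectrum is dispersed into the gap beside it, so a wider gap leaves more detector pixels per spectrum but lets fewer fiber rows fit on the detector. The detector height, fiber image height, and gap sizes below are hypothetical values, not figures from the Rice system.

```python
def sampling_tradeoff(detector_rows, fiber_px, gap_px):
    """Toy model: fiber rows that fit on the detector and pixels left per spectrum.

    detector_rows: detector height in pixels (hypothetical)
    fiber_px: height of one imaged fiber row in pixels (hypothetical)
    gap_px: imaged gap between adjacent fiber rows in pixels (hypothetical)
    """
    pitch = fiber_px + gap_px            # detector pixels used per fiber row
    rows_that_fit = detector_rows // pitch
    spectral_pixels = gap_px             # room for dispersion within the gap
    return rows_that_fit, spectral_pixels


wide_gap = sampling_tradeoff(detector_rows=2048, fiber_px=4, gap_px=60)
narrow_gap = sampling_tradeoff(detector_rows=2048, fiber_px=4, gap_px=28)
print(wide_gap)    # fewer rows, more pixels per spectrum
print(narrow_gap)  # more rows, fewer pixels per spectrum
```

In this simplified picture, halving the row pitch doubles the number of spatial rows at the cost of roughly half the spectral sampling.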

The fiber bundle collects more than 30,000 spatial samples, and prisms split the light from each sample into 61 spectral channels in the 450–750 nm range before it reaches the detector. Software then recombines the data into desired images or spectra.
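As a rough sense of scale, the quoted numbers imply a channel spacing and a minimum datacube size. The sketch below assumes the 61 channels are evenly spaced across the range. That is a simplification, because prism dispersion is not linear in wavelength and the actual channel centers may differ.

```python
LAMBDA_MIN_NM = 450.0
LAMBDA_MAX_NM = 750.0
N_CHANNELS = 61
SPATIAL_SAMPLES = 30_000  # the article says "more than 30,000", so a lower bound

# Assumption: channels are evenly spaced, endpoints included.
step_nm = (LAMBDA_MAX_NM - LAMBDA_MIN_NM) / (N_CHANNELS - 1)
wavelengths_nm = [LAMBDA_MIN_NM + i * step_nm for i in range(N_CHANNELS)]

# Lower bound on the number of values in one snapshot datacube.
min_cube_values = SPATIAL_SAMPLES * N_CHANNELS

print(step_nm, wavelengths_nm[0], wavelengths_nm[-1], min_cube_values)
```

Under that assumption, the channels sit about 5 nm apart and each snapshot holds at least 1.83 million spectral samples.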

Spatial calibration of the system was achieved using a technique that originated from phase-shift interferometry, while spectral calibration was accomplished using a series of wavelength-specific filters and a lookup table to correlate the imaging fibers to their spatial pixel location.
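A lookup table of this kind might be used as sketched below to turn a raw detector frame into per-sample spectra. This is a minimal illustration, not the Rice software. The function name `rebuild_cube`, the table layout, the one-pixel-per-channel sampling, and dispersion running down the detector columns are all assumptions.

```python
def rebuild_cube(frame, lookup, n_channels):
    """Gather one spectrum per spatial sample from a raw detector frame.

    frame: 2D list of detector counts, indexed as frame[row][col]
    lookup: hypothetical calibration table mapping an image position (x, y)
            to the detector pixel (row, col) where that fiber's spectrum starts
    n_channels: number of spectral channels, assumed one detector pixel each
    """
    cube = {}
    for (x, y), (det_row, det_col) in lookup.items():
        # Assumption: the spectrum runs down the column into the row gap.
        cube[(x, y)] = [frame[det_row + k][det_col] for k in range(n_channels)]
    return cube


# Tiny synthetic frame: the value encodes its own (row, col) as row * 10 + col.
frame = [[row * 10 + col for col in range(3)] for row in range(4)]
lookup = {(0, 0): (0, 1), (1, 0): (1, 2)}
cube = rebuild_cube(frame, lookup, n_channels=3)
print(cube[(0, 0)], cube[(1, 0)])
```

Keeping the mapping in a table rather than in code means a bundle with different dimensions or fiber counts would need only a new calibration, not new reconstruction logic.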

For proof of concept, the Rice scientists analyzed spectral images of campus trees to identify their species and also assessed the health of various plants through their spectral signatures. In addition, the snapshot imager obtained continuous-capture images of traffic in Houston and easily identified moving vehicles and changing traffic lights against the stable background, with negligible image blur. Imaging resolution and frame rate were comparable to commercial spectrometers, but with an output imaging dimension of only 25.3 × 12.5 mm that is easily reconfigured to different dimensions and fiber counts according to the desired application.

REFERENCE

1. Y. Wang et al., Opt. Express, 27, 11, 15701–15725 (2019).

About the Author

Gail Overton

Senior Editor (2004-2020)

Gail has more than 30 years of engineering, marketing, product management, and editorial experience in the photonics and optical communications industry. Before joining the staff at Laser Focus World in 2004, she held many product management and product marketing roles in the fiber-optics industry, most notably at Hughes (El Segundo, CA), GTE Labs (Waltham, MA), Corning (Corning, NY), Photon Kinetics (Beaverton, OR), and Newport Corporation (Irvine, CA). During her marketing career, Gail published articles in WDM Solutions and Sensors magazine and traveled internationally to conduct product and sales training. Gail received her BS degree in physics, with an emphasis in optics, from San Diego State University in San Diego, CA in May 1986.