Imaging colorimetry keeps color correct

Camera-based colorimeter maintains color and brightness uniformity of large LED video displays.

J. Scott Harris, Kevin Chittim, and Bassam D. Jalbout

Large video screens based on light-emitting diodes (LEDs) are now a common sight at many sports and concert venues. However, producing these displays presents several challenges. One significant issue is that unit-to-unit variations in color and brightness of individual LEDs make it difficult to construct a large-area display that exhibits uniform output. In addition, the aging characteristics of LEDs lead to changes in display performance over time. Imaging colorimetry can be used to automatically correct display output during construction and subsequent field maintenance.

Display construction

The advent of high-brightness red-, green-, and blue-emitting LEDs has made them an attractive source for large displays. Furthermore, the cost and performance characteristics of LEDs continue to improve with intensive ongoing development. LSI SACO Technologies has been producing its Smartvision line of large LED video displays since 1995. Notable emplacements include the NASDAQ MarketSite in Times Square, NY (the largest video screen in the world), and numerous sports facilities, including those of the Cincinnati Reds, Baltimore Ravens, and Edmonton Oilers.

The Smartvision LED screens are composed of a mosaic of modules, called “blocks.” These blocks consist of a plastic substrate onto which individual LED units are mounted. Typically, each display pixel comprises a red, green, and blue LED (see Fig. 1). Blocks having a variety of pixel layouts are produced to support different configurations. Common block pixel dimensions are 16 × 16, 8 × 16, and 12 × 48. Blocks having different pixel spacings are also produced to match the needs of a specific venue. Specifically, pixel pitch is usually chosen so that the angular separation between adjacent pixels is about 2 mrad at the intended viewing distance. This requirement leads to pixel spacings that are typically in the 3 to 25 mm range.
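The 2 mrad rule is a small-angle calculation: pitch ≈ viewing distance × angular separation. Because 1 m × 1 mrad = 1 mm, metres times milliradians gives millimetres directly. The Python sketch below (function names are illustrative, not taken from any SACO tool) shows that the quoted 3 to 25 mm range corresponds to design viewing distances of roughly 1.5 to 12.5 m.

```python
def pixel_pitch_mm(viewing_distance_m: float, angle_mrad: float = 2.0) -> float:
    """Pitch (mm) that subtends angle_mrad at the given viewing distance (m).

    Small-angle approximation: pitch = distance * angle, and
    1 m * 1 mrad = 1 mm, so no unit conversion is needed.
    """
    return viewing_distance_m * angle_mrad


def design_viewing_distance_m(pitch_mm: float, angle_mrad: float = 2.0) -> float:
    """Inverse relation: distance (m) at which a pitch subtends angle_mrad."""
    return pitch_mm / angle_mrad


for pitch in (3.0, 25.0):
    # prints "3.0 mm pitch -> 1.5 m" and "25.0 mm pitch -> 12.5 m"
    print(f"{pitch:.1f} mm pitch -> {design_viewing_distance_m(pitch):.1f} m")
```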

Each block contains its own control electronics that enable analog and digital control of drive current for every single LED. In operation, the DC drive current is set to produce the maximum desired brightness for that emitter under the particular conditions of use. This DC level will be higher under high-ambient-light conditions (such as outdoors in bright sunlight), and lower for indoor venues or nighttime applications. The brightness variations necessary for image display are then achieved with pulse-width modulation: during each cycle of the display, a given emitter will be turned off for the amount of time necessary to reduce its perceived brightness by the required amount. This approach enables true 12-bit color display to be achieved regardless of the DC drive level.
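As a rough sketch of this arithmetic, assume the 12-bit code maps linearly onto the fraction of each PWM period during which the emitter is on (real controllers may instead divide the period into bit-weighted slices). The DC level then fixes peak brightness, and the code scales the time-averaged brightness. The function names and the period value are hypothetical.

```python
LEVELS = 2**12  # 12-bit gray scale: codes 0..4095


def pwm_on_time_us(code: int, period_us: float) -> float:
    """On-time within one PWM period for a 12-bit brightness code.

    Assumes a linear mapping: duty cycle = code / 4095. The emitter is
    off for the remainder of the period.
    """
    if not 0 <= code < LEVELS:
        raise ValueError("code must be in 0..4095")
    return period_us * code / (LEVELS - 1)


def perceived_brightness(code: int, peak_cd_m2: float) -> float:
    """Time-averaged luminance: peak (set by the DC level) times duty cycle."""
    return peak_cd_m2 * code / (LEVELS - 1)
```

For example, with a hypothetical 1 ms period, code 2048 keeps the emitter on for about 500 µs of each period, whatever DC level has been chosen; only the peak, and therefore the absolute brightness, changes with DC drive.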

The final assembled display can use virtually any combination of blocks, and the flexibility of the plastic substrate even permits curved surfaces. A typical indoor display might measure 9 × 16 ft, while outdoor displays can go up to about 90 × 27 ft. The overall display is driven by a master controller that takes the original input signal (NTSC, PAL, HDTV, composite video, and so on), de-interlaces it if necessary, and then delivers the appropriate signal to the controller of each individual block.
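The hand-off from the master controller can be pictured as cutting each de-interlaced frame into per-block tiles. This minimal sketch assumes a flat, rectangular grid of identical blocks and a frame already scaled to the screen's pixel count; the function name is hypothetical, and screens that mix block sizes or curve would need a more general pixel map.

```python
import numpy as np


def split_into_blocks(frame: np.ndarray, block_h: int, block_w: int) -> dict:
    """Cut a frame of shape (rows, cols, 3) into per-block sub-images.

    Keys are (block_row, block_col); each value is the slice of the frame
    that would be sent to that block's controller. Assumes identical blocks
    that tile the frame exactly.
    """
    rows, cols = frame.shape[:2]
    if rows % block_h or cols % block_w:
        raise ValueError("frame size must be a multiple of the block size")
    return {
        (r // block_h, c // block_w): frame[r:r + block_h, c:c + block_w]
        for r in range(0, rows, block_h)
        for c in range(0, cols, block_w)
    }


# A 32 x 48 frame feeding a 2 x 3 grid of 16 x 16 blocks.
frame = np.arange(32 * 48 * 3).reshape(32, 48, 3)
blocks = split_into_blocks(frame, 16, 16)
```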

LED limitations

While the favorable electronic and optical characteristics of LEDs make them an attractive source for displays, they present some drawbacks for the screen manufacturer. LEDs produced in high volume, for instance, lack unit-to-unit consistency in both brightness and center wavelength (the typical tolerance is ±20 nm). In the past, producing a display with good spatial output uniformity required using only emitters whose output fell within a specified range of values. This process of selecting LEDs that meet specific output criteria is called binning. For very large displays, such as the Times Square screen with its 22 million LEDs, binning is quite demanding.
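Binning itself is easy to express; the cost lies in stocking and tracking the many resulting bins. In this minimal Python sketch, the 5 nm and 50 mcd bin widths and the sample measurements are hypothetical, chosen only to show how LEDs of a single nominal type scatter across several bins.

```python
import math
from collections import defaultdict


def bin_leds(leds, wl_step_nm=5.0, lum_step_mcd=50.0):
    """Group LEDs by measured center wavelength and luminous intensity.

    leds: iterable of (led_id, wavelength_nm, intensity_mcd).
    Bin widths are hypothetical. LEDs sharing a key are treated as
    interchangeable when building one display.
    """
    bins = defaultdict(list)
    for led_id, wl, iv in leds:
        key = (math.floor(wl / wl_step_nm), math.floor(iv / lum_step_mcd))
        bins[key].append(led_id)
    return dict(bins)


# Four hypothetical red LEDs of the same nominal part number.
sample = [("a", 621.0, 410.0), ("b", 623.5, 440.0),
          ("c", 628.0, 430.0), ("d", 622.0, 520.0)]
bins = bin_leds(sample)  # "a" and "b" share a bin; "c" and "d" do not
```

With the ±20 nm tolerance quoted above, 5 nm wavelength bins alone would split a single LED type into roughly eight groups before brightness is even considered.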

Another LED characteristic is that the output wavelength shifts with drive current, which means that as the DC drive level is changed to accommodate ambient conditions, the display color shifts. In addition, LED luminance at a given drive current gradually degrades over time. Simply increasing the drive current to compensate introduces the wavelength shift just described. Furthermore, red, green, and blue LEDs are typically made from different materials, so age-related changes do not occur in all three colors at the same rate.

For maximum economy and flexibility, manufacturers would like to be able to order the smallest possible number of different LEDs and then utilize them in all their products, while still achieving good panel uniformity and unit-to-unit consistency. The time and expense associated with binning is also undesirable. In addition, it would be advantageous to be able to put replacement panels in existing screens without seeing output differences between the new and the old blocks (thereby avoiding a “tiled” appearance).

Color measurement

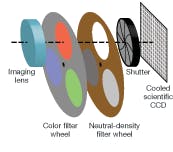

Performing LED screen correction in a practical timeframe requires an instrument that can measure the luminance and color of every pixel in an entire block or display at once. An imaging colorimeter can accomplish this. Its main functional elements are an imaging lens, a set of color filters calibrated to CIE standards (CIE is the International Commission on Illumination; see www.cie.co.at/cie), a shutter, a CCD camera, and data-acquisition and image-processing hardware/software (see Fig. 2). In operation, sequential images of the subject are taken through the three filters. The exposures are then combined and processed to yield chromaticity and luminance values for each pixel in the image.
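The combination step rests on the standard CIE relations: once the three exposures have been converted to tristimulus values X, Y, and Z, the chromaticity coordinates are x = X/(X+Y+Z) and y = Y/(X+Y+Z), and Y itself is the luminance. The sketch below assumes dark-subtracted images, filters that already realize the CIE responses, and hypothetical per-channel calibration gains k; a real instrument also applies flat-field and other corrections.

```python
import numpy as np


def xyY_from_exposures(img_x, img_y, img_z, t_x, t_y, t_z, k=(1.0, 1.0, 1.0)):
    """Combine three filtered exposures into per-pixel (x, y, Y).

    img_*: dark-subtracted CCD images taken through the X, Y, Z filters.
    t_*:   exposure times, used to normalize each image to a signal rate.
    k:     hypothetical calibration gains from signal rate to X, Y, Z.
    Pixels with no signal get NaN chromaticity.
    """
    X = k[0] * np.asarray(img_x, dtype=float) / t_x
    Y = k[1] * np.asarray(img_y, dtype=float) / t_y
    Z = k[2] * np.asarray(img_z, dtype=float) / t_z
    total = X + Y + Z
    with np.errstate(invalid="ignore", divide="ignore"):
        x = np.where(total > 0, X / total, np.nan)
        y = np.where(total > 0, Y / total, np.nan)
    return x, y, Y
```

Normalizing by exposure time lets each filter use its own integration time, which matters because LED output through the Z filter can be far weaker than through Y.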

The performance of an imaging colorimeter depends upon device construction, operating parameters, and calibration techniques. Large-screen display-correction applications in particular require low-noise measurements with high dynamic range and good color accuracy.

Measuring color in a way that correlates well with human visual experience requires working in a calibrated color space, such as those defined by the CIE. This, in turn, requires detectors whose spectral response closely matches the CIE-defined x̄, ȳ, and z̄ tristimulus (color-matching) curves. In practice, this match is achieved with a high degree of accuracy using external color filters. Some CCDs are produced with color filters integrated directly onto the sensor, but the response of these filters matches the CIE curves poorly, which makes them unsuitable for high-accuracy colorimetry.

Typically, 12-bit dynamic-range data (a ratio of about 4096:1) is required to adequately perform display color correction. The dynamic range of a CCD is defined as the detector's full-well depth divided by its read noise (both expressed in electrons). Because full-well capacity scales with the physical size of the pixels, reaching this dynamic range calls for a CCD with relatively large pixels. Read noise is minimized by slowing the readout speed and by actively cooling the CCD to lower the noise floor.
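Because dynamic range is a simple ratio, the bit depth it supports is just its base-2 logarithm. The sensor figures in this Python sketch are hypothetical; they show that halving full-well depth (for example, by shrinking the pixels) costs one bit, which lower read noise must then win back.

```python
import math


def dynamic_range(full_well_e: float, read_noise_e: float) -> float:
    """Full-well depth divided by read noise, both in electrons."""
    return full_well_e / read_noise_e


def dynamic_range_bits(full_well_e: float, read_noise_e: float) -> float:
    """Equivalent bit depth: log2 of the dynamic range."""
    return math.log2(dynamic_range(full_well_e, read_noise_e))


# Hypothetical sensor figures, for illustration only.
print(dynamic_range_bits(40_000, 8))  # 5000:1, about 12.3 bits
print(dynamic_range_bits(20_000, 8))  # 2500:1, about 11.3 bits
```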

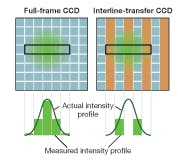

In practice, each LED in the display must be imaged onto several CCD pixels to achieve the required dynamic range and noise levels. Because of this, it is necessary to use a full-frame CCD rather than an interline-transfer device. In interline-transfer CCDs, every other column is masked off, making it virtually impossible to image each LED properly onto several pixels as required (see Fig. 3).
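Why several pixels help can be shown with a simple noise model, assuming the pixels under each LED image are summed after readout (on-chip binning behaves differently): signal capacity grows as N while independent read noise grows only as √N, so dynamic range improves by √N. The second function models the masking described above, under the stated assumption that every other column is blind; both function names are illustrative.

```python
import math


def summed_dynamic_range(n_pixels: int, full_well_e: float, read_noise_e: float) -> float:
    """Dynamic range when one LED's signal is summed over n CCD pixels.

    Capacity adds linearly (n * full well); independent read noise adds
    in quadrature (sqrt(n) * read noise), so the ratio grows as sqrt(n).
    """
    return n_pixels * full_well_e / (math.sqrt(n_pixels) * read_noise_e)


def active_pixels(footprint: int, start_col: int, interline: bool) -> int:
    """Light-sensitive pixels under a square footprint x footprint LED image.

    For the interline case, assume the odd-numbered columns are masked.
    """
    cols = range(start_col, start_col + footprint)
    if interline:
        cols = [c for c in cols if c % 2 == 0]
    return footprint * len(cols)
```

With a hypothetical 3 × 3 footprint, a full-frame sensor always collects nine pixels, whereas the interline layout collects six or three depending on where the LED image happens to land.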

Screen correction in action

LSI SACO utilizes a Radiant Imaging PM‑1400 imaging-colorimeter-based system to correct each block during manufacture. The colorimeter is coupled with software that enables it to drive the display, determine the correction factors, and then store them in the panel driver electronics.

In operation, the instrument measures the luminance and chromaticity of every LED in a panel, and then calculates a 3 × 3 correction matrix for each pixel. For each of the three primary colors, the matrix holds a scaling factor for each of the pixel's three emitters. For example, it might tell a given pixel that to display pure red (NTSC), it needs to drive the red, green, and blue LEDs at 60%, 22%, and 1% of their maximum brightness levels, respectively. Corresponding factors are computed for pure green and pure blue. In addition, these factors are derived for a variety of DC current levels, so that the display color output will be consistent regardless of the overall brightness level (see Fig. 4). This testing typically takes several minutes per panel.
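One standard way to obtain such a matrix, offered here only as a sketch and not as the PM-1400 software's actual algorithm, is linear colorimetry: stack the measured XYZ of each LED at full drive into a matrix M, stack the XYZ of the target primaries into T, and solve M·D = T. Column j of D then holds the red, green, and blue drive fractions that reproduce target primary j. The LED measurements below are invented, the targets are the HDTV (Rec. 709) primaries rather than the NTSC ones in the example above, and D is scaled so that full white never exceeds 100% drive on any LED. Negative entries would indicate a target outside the LEDs' gamut.

```python
import numpy as np


def xyY_to_XYZ(x, y, Y):
    """Convert CIE chromaticity (x, y) and luminance Y to tristimulus XYZ."""
    return np.array([x * Y / y, Y, (1.0 - x - y) * Y / y])


ORDER = ("red", "green", "blue")

# Hypothetical full-drive measurements of one pixel's LEDs: (x, y, Y in cd/m^2).
measured = {
    "red":   (0.70, 0.30, 100.0),
    "green": (0.20, 0.71, 300.0),
    "blue":  (0.14, 0.04, 40.0),
}

# HDTV (Rec. 709) primaries, each with its share of the luminance of white.
target = {
    "red":   (0.640, 0.330, 0.2126),
    "green": (0.300, 0.600, 0.7152),
    "blue":  (0.150, 0.060, 0.0722),
}


def correction_matrix(measured, target):
    """Return D, where D[i, j] is the drive fraction of LED i for primary j."""
    M = np.column_stack([xyY_to_XYZ(*measured[c]) for c in ORDER])  # LED XYZ at 100%
    T = np.column_stack([xyY_to_XYZ(*target[c]) for c in ORDER])    # target XYZ
    D = np.linalg.solve(M, T)  # solves M @ D = T, one column per primary
    # Scale so full white (all primaries at once) needs at most 100% drive
    # on the most heavily loaded LED.
    return D / D.sum(axis=1).max()


D = correction_matrix(measured, target)
print(np.round(100 * D, 1))  # drive percentages, one column per primary
```

In the scheme the article describes, the same calculation would be repeated with measurements taken at each DC drive level.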

The correction process also maps the output of the panel into the NTSC or HDTV color gamut. This step is necessary because the colors of the LEDs are quite different from those of the phosphors and filters used in TVs and flat-panel displays. Thus, if the input video signal calls for 100% red, it would display quite differently on an LED screen than on an LCD unless the proper correction factors are applied.
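Because additive color mixing is linear, applying a pixel's correction is a single matrix-vector product. In this sketch the first column of the hypothetical matrix uses the article's pure-red example (60%, 22%, 1%); the other two columns are invented. It also assumes the input RGB has already been gamma-decoded to linear values and that drive fractions are quantized to the 12-bit PWM codes described earlier.

```python
import numpy as np

# Hypothetical per-pixel correction matrix: column j holds the red, green,
# and blue LED drive fractions that render input primary j. Column 0 is the
# article's pure-red example; columns 1 and 2 are invented.
D = np.array([
    [0.60, 0.05, 0.02],
    [0.22, 0.70, 0.04],
    [0.01, 0.03, 0.80],
])


def led_codes(D: np.ndarray, rgb_linear) -> np.ndarray:
    """Map a linear input RGB triple to 12-bit LED PWM codes."""
    drive = D @ np.asarray(rgb_linear, dtype=float)  # additive mixing is linear
    drive = np.clip(drive, 0.0, 1.0)                 # cannot exceed 100% drive
    return np.rint(drive * 4095).astype(int)


print(led_codes(D, [1.0, 0.0, 0.0]))  # input "100% red" -> 2457, 901, 41
```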

The system is also used with LED video screens once they are installed at their venue, both to correct output shifts caused by LED aging and, when necessary, after individual panels in a screen are replaced. For outdoor emplacements, this testing is performed at night because ambient sunlight is too variable (due to clouds and atmospheric conditions). In this case, the colorimeter is usually outfitted with a telephoto lens and uses a high-pixel-count CCD (typically 3072 × 2048) to image the entire display at once at the required spatial resolution.
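Whether one exposure can resolve a whole screen is a matter of sampling arithmetic. This sketch assumes the screen's pixel count follows from its size and pitch, and that a single magnification must fit the entire screen on the sensor; the default 3072 × 2048 format is the one quoted above, and the function name is hypothetical.

```python
FT_TO_MM = 304.8  # exact conversion: 1 ft = 304.8 mm


def ccd_samples_per_pixel(display_w_ft: float, display_h_ft: float, pitch_mm: float,
                          ccd_cols: int = 3072, ccd_rows: int = 2048) -> float:
    """Linear CCD pixels available per display pixel when imaging the whole screen."""
    cols = display_w_ft * FT_TO_MM / pitch_mm  # display pixels across
    rows = display_h_ft * FT_TO_MM / pitch_mm  # display pixels down
    # One magnification must fit the whole screen, so the tighter axis sets it.
    return min(ccd_cols / cols, ccd_rows / rows)
```

For the 90 × 27 ft outdoor screen mentioned earlier at a 25 mm pitch, this gives about 2.8 CCD pixels per display pixel; the wide screen fills the sensor's width long before its height.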

J. SCOTT HARRIS is a senior engineer and KEVIN G. CHITTIM is vice president of sales and marketing at Radiant Imaging, 15321 Main St. NE, Duvall, WA 98019; BASSAM D. JALBOUT is executive vice president at LSI SACO Technologies, 7809 Trans Canada, Montreal, Quebec, Canada, H4S 1L3; www.radiantimaging.com; www.smartvision.com.