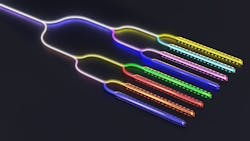

Intel Labs integrates eight-wavelength DFB laser array onto a silicon wafer

Intel Labs recently demonstrated an eight-wavelength DFB laser array, which is fully integrated onto a silicon wafer. This advance was achieved via existing manufacturing and process controls within Intel’s fabs—ensuring a path to volume production of next-gen copackaged optics and optical-compute interconnects at scale for network-intensive workloads, such as artificial intelligence (AI) and machine learning.

Optical communications is a key focus of Intel Labs’ research. It is part of the lab’s integrated photonics work and rests on three core pieces of its overall silicon-photonics effort.

These core pieces include “a light source or a laser, as well as being able to modulate it,” says James Jaussi, senior principal engineer and director of Intel Labs’ PHY Research Lab. “Another key piece is to amplify it, as well as to detect and receive the optical signal and convert it to an electrical signal on the CMOS chip. And the other key piece is CMOS electronics, which are used primarily to control or interface with the photonics, both in terms of modulation as well as detection and amplification within the electrical domain. These components are key to this vision, but what really brings this technology together is the ability to integrate into a single package.”

Driver: A need for latency-minimal speed

As copper electrical interconnects continue to hit a performance wall, side-by-side integration of silicon circuitry and optics onto the same package is showing tremendous promise for future input/output interfaces with improved energy efficiency and longer reach.

AI and machine-learning applications require speed without latency glitches frequently caused by copper interconnects. Recent copackaged optics solutions tapping into dense wavelength division multiplexing (DWDM) technology are showing promise for increasing bandwidth while significantly reducing the physical size of photonic chips. But, until now, it’s been very difficult to produce DWDM light sources with uniform wavelength spacing and power.

Intel’s work ensures consistent wavelength separation of light sources while maintaining uniform output power—meeting a key requirement for optical-compute interconnects and DWDM communication. It also enables next-gen compute I/O using optical interconnects to be tailored for extreme demands of high-bandwidth AI and machine-learning workloads.

Design and fabrication

Intel Labs’ eight-wavelength DFB laser array is designed and fabricated on their commercial 300 mm hybrid silicon-photonics platform, which is also used to manufacture optical transceivers in volume, so it can scale easily.

“We’re pushing optical communications all the way to chip-to-chip communications,” says Haisheng Rong, a senior principal engineer for Intel Labs’ Photonics Research Group. “This requires a lot of components, if not billions of components, and the cost is prohibitive using traditional technology. We identified early on that silicon-photonics technology could enable this integration, so we’re integrating all optical components onto a silicon chip and fabricating it within our traditional CMOS fab.”

Compound semiconductor lasers are the key element enabling integration onto silicon. These laser materials, in particular indium phosphide (InP) and gallium arsenide (GaAs), have a direct bandgap, which allows them to emit light very efficiently.

“As bandwidth increases, we want to put more wavelength into a single fiber,” says Rong. “This is why we wanted to make a laser array, each with its own wavelength. These lasers are densely packed in the sense of being on one chip, but also in terms of wavelength because we wanted them to be close together to pack in eight now, but maybe in the future we’ll want to pack 16 or 32 channels into one fiber to increase the bandwidth.”

Intel is tapping into dense wavelength division multiplexing (DWDM) to create a laser array with eight lasers with very uniform wavelength spacings, which Rong points out is “very difficult” to achieve.

These lasers need three components. The first is gain, which the III-V material provides. The second is a pump for this III-V structure. And the third is a grating structure, which provides the distributed feedback and whose pitch defines the laser’s output wavelength.
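As a rough illustration of how a grating's pitch sets a laser's wavelength, the standard first-order Bragg condition for a DFB grating is shown below. The symbols are assumptions introduced here, not taken from the article: $\lambda_B$ is the lasing (Bragg) wavelength, $n_{\mathrm{eff}}$ is the effective index of the waveguide mode, and $\Lambda$ is the grating pitch. The sketch also assumes a first-order grating and the same effective index for every laser in the array.

$$
\lambda_B = 2\, n_{\mathrm{eff}}\, \Lambda
\qquad\Longrightarrow\qquad
\Delta\lambda \approx 2\, n_{\mathrm{eff}}\, \Delta\Lambda
$$

Under these assumptions, an equal step in pitch from one laser to the next gives an equal step in wavelength, so how uniformly the pitch can be patterned bears directly on how uniform the channel spacing is.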

A key goal is to minimize power consumption, because dissipated power heats the chip, and when the chip temperature changes, the temperature of every laser changes and the wavelengths move. The whole pattern of laser lines moves left to right across the spectrum with temperature, and “for our architecture, it’s critical to keep wavelength spacing constant and not worry so much about absolute wavelength drift,” says Rong.
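The distinction between absolute drift and spacing can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the drift coefficient, the starting wavelengths, and the temperature change are hypothetical values, and the model assumes every laser on the chip sees the same temperature change and drifts by the same amount.

```python
# Hypothetical numbers for illustration only; none come from the article.
DRIFT_NM_PER_K = 0.1  # assumed thermal drift shared by every laser line


def shift_lines(wavelengths_nm, delta_t_kelvin, drift_nm_per_k=DRIFT_NM_PER_K):
    """Apply the same thermal wavelength shift to every laser line."""
    shift = drift_nm_per_k * delta_t_kelvin
    return [w + shift for w in wavelengths_nm]


def spacings(wavelengths_nm):
    """Return the differences between neighbouring laser lines."""
    return [b - a for a, b in zip(wavelengths_nm, wavelengths_nm[1:])]


# Assumed eight-line comb with uniform spacing.
cold = [1300.0 + 1.1 * i for i in range(8)]
hot = shift_lines(cold, delta_t_kelvin=10.0)

absolute_drift_nm = hot[0] - cold[0]
worst_spacing_change_nm = max(
    abs(h - c) for h, c in zip(spacings(hot), spacings(cold))
)

print(f"absolute drift of first line: {absolute_drift_nm:.3f} nm")
print(f"largest change in spacing:    {worst_spacing_change_nm:.6f} nm")
```

In this simplified model, every line moves by the same amount, so the spacing between neighbours is unchanged apart from floating-point rounding. A real array would only approximate this to the extent that its lasers share a temperature.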

When they first got the laser back, the researchers turned one on, began taking measurements, and were happy to get the performance they expected. “We turned on another laser with spacing at about 200 GHz, which was also pretty cool, and then turned them all on and saw a very uniform spike in front of us,” Rong adds. “The power is also uniform, but as you go through this process the chip heats up a little bit and the whole pattern moves slightly, but spacing stays constant.”
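To give a feel for what a spacing of about 200 GHz means in wavelength terms, the sketch below lays out eight channels on a uniform frequency grid and converts each to a wavelength using λ = c / f. The anchor wavelength of 1310 nm is an assumption chosen for illustration (the article does not state the operating band), and `channel_grid` is a hypothetical helper name.

```python
C_M_PER_S = 299_792_458  # speed of light in vacuum


def channel_grid(anchor_wavelength_nm, spacing_ghz, n_channels):
    """Return (frequency_THz, wavelength_nm) pairs on a uniform frequency grid.

    The first channel sits at the anchor wavelength; each later channel is
    one spacing step higher in frequency (and so shorter in wavelength).
    """
    anchor_hz = C_M_PER_S / (anchor_wavelength_nm * 1e-9)
    grid = []
    for k in range(n_channels):
        f_hz = anchor_hz + k * spacing_ghz * 1e9
        grid.append((f_hz / 1e12, C_M_PER_S / f_hz * 1e9))
    return grid


# Assumed anchor wavelength; the 200 GHz spacing and eight channels
# are the figures quoted in the article.
grid = channel_grid(anchor_wavelength_nm=1310.0, spacing_ghz=200.0, n_channels=8)

for f_thz, wl_nm in grid:
    print(f"{f_thz:.3f} THz  {wl_nm:.3f} nm")
```

With this assumed anchor, neighbouring channels end up a little over 1 nm apart. The wavelength steps are not exactly equal, because a grid that is uniform in frequency is only approximately uniform in wavelength.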

This latest integration work marks a significant advance for laser manufacturing within a high-volume CMOS fab, thanks to the same lithography already in use for manufacturing 300 mm silicon wafers.

For this work, Intel uses advanced lithography to define the wavelength gratings in silicon prior to a III-V wafer bonding process. The researchers say this technique results in better wavelength uniformity compared to conventional semiconductor lasers manufactured within 3- or 4-inch wafer fabs. And tight integration of lasers allows the array to maintain its channel spacing when the ambient temperature changes.

Next up

Intel Labs is also working on other core technology building blocks—including light generation, amplification, detection, modulation, CMOS interface circuits, and package-integration technologies.

Beyond this, Intel’s Silicon Photonics Products Division is implementing many aspects of its eight-wavelength integrated laser array into a future optical-compute-interconnect chiplet. This chiplet is being designed to offer power-efficient, high-performance, multi-terabits-per-second interconnects between compute resources including CPUs, GPUs, and memory.

About the Author

Sally Cole Johnson

Editor in Chief

Sally Cole Johnson, Laser Focus World’s editor in chief, is a science and technology journalist who specializes in physics and semiconductors.