PHOTONIC FRONTIERS: ELECTRONIC DISPERSION MANAGEMENT: Digital electronics clean up dispersion in high-speed fiber systems

If you haven't paid close attention to the cutting edge of fiber-optic transmission lately, you may have missed a seismic shift in the design of high-performance fiber systems. Electronic digital signal processing has replaced optics in a fundamentally optical task: controlling the signal-degrading dispersion of light signals along the length of a fiber.

Optical dispersion has long been managed by assembling transmission systems from two or more types of fibers with different characteristic dispersion to keep total dispersion low and uniform across the operating wavelengths. That delicate balancing act could manage chromatic dispersion for wavelength-division multiplexed (WDM) systems using narrow-line lasers at optical channel rates of up to 2.5 or 10 Gbit/s. However, transmitting at higher rates of 40 or 100 Gbit/s required much tighter control of chromatic dispersion, plus management of polarization-mode dispersion (PMD), which had posed few problems at lower speeds. Developers turned to new optical transmission formats and powerful new digital processing electronics to tackle dispersion.

The dispersion problem

Three types of dispersion affect fiber-optic systems:

1. Modal dispersion arises from differences in light propagation times between different fiber modes; it is easy to avoid by using singlemode fiber.

2. Chromatic dispersion arises from refractive-index variation as a function of wavelength both in the glass (material dispersion) and in the fiber's waveguide structure (waveguide dispersion). The two add to give chromatic dispersion, measured in picoseconds per nanometer of source wavelength per kilometer of fiber. Wavelengths with high refractive index lag behind those with lower refractive index, causing chromatic dispersion that depends both on the fiber's characteristic dispersion and on the length of fiber traveled. Waveguide dispersion depends on the fiber's refractive-index profile, allowing designers to tailor dispersion properties of special dispersion-compensating fibers to offset those of standard transmission fibers.

3. PMD arises from fiber birefringence, which delays one polarization mode with respect to the other (see Fig. 1). Strictly speaking, PMD is the differential group delay between the two orthogonal polarizations. Birefringence in standard transmission fibers is small, so PMD went unnoticed until data rates reached gigabits per second. Unlike chromatic dispersion, PMD depends on forces applied to the fiber, so it varies with time and depends on the field environment. Total instantaneous PMD along a length of fiber is measured in picoseconds. The time-averaged characteristic value for a particular fiber is given in picoseconds per square root of fiber length (ps/√km), because the average delay grows with the square root of distance rather than linearly. Excessive PMD increases bit error rates and can cause transient service outages.
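The units quoted in the list above translate directly into arithmetic. The following is a minimal sketch, not a design tool: the function names are hypothetical, and the fiber values (17 ps/(nm·km), a 0.1-nm source width, 0.1 ps/√km) are assumed purely for illustration.

```python
import math

def chromatic_spread_ps(d_ps_per_nm_km, length_km, source_width_nm):
    """Pulse spreading from chromatic dispersion: D x L x source width."""
    return d_ps_per_nm_km * length_km * source_width_nm

def mean_pmd_ps(pmd_coeff_ps_per_sqrt_km, length_km):
    """Time-averaged differential group delay: coefficient x sqrt(L)."""
    return pmd_coeff_ps_per_sqrt_km * math.sqrt(length_km)

# Assumed, illustrative values for a 100-km span.
spread_ps = chromatic_spread_ps(17.0, 100.0, 0.1)  # grows linearly with length
pmd_ps = mean_pmd_ps(0.1, 100.0)                   # grows with sqrt(length)
print(f"chromatic spreading ~ {spread_ps:.0f} ps, mean PMD ~ {pmd_ps:.1f} ps")
```

Quadrupling the length quadruples the chromatic spreading but only doubles the mean PMD, which is why the two are quoted in different units.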

Electronic dispersion compensation

Early fiber-optic systems used electro-optic repeaters that regenerated as well as amplified received signals. When optical amplifiers were introduced, special in-line fibers provided dispersion compensation and other distortion was corrected in switches or other terminal equipment. Dispersion-compensating fibers could balance chromatic dispersion reasonably well across the erbium-fiber-amplifier band for multi-gigabit data rates, but as data rates increased the system tolerance for pulse spreading decreased with the square of the data rate.

Electronic dispersion compensation was first demonstrated in the early 1990s. The upgrade of channel rates from 2.5 Gbit/s to 10 Gbit/s later in the decade increased interest in electronic compensation because the factor-of-four increase in speed reduced dispersion tolerance by a factor of 16. The first demonstrations of electronic dispersion compensation at 10 Gbit/s came at the end of that decade, with an analog-to-digital converter digitizing photodiode output with 3- or 4-bit resolution for subsequent processing.1,2 The compensators used the Viterbi algorithm (a standard signal-reconstruction technique) and application-specific integrated circuits (ASICs) to regenerate the original signal, reducing inter-symbol interference and opening the "eye" pattern of dispersion-corrupted signals.
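To give a feel for how the Viterbi algorithm undoes inter-symbol interference, here is a toy sketch. It assumes a two-tap channel in which part of each pulse spills into the next bit slot; the tap values, the disturbance samples, and the function name `viterbi_equalize` are all illustrative assumptions, and the real compensators cited above are far more elaborate.

```python
def viterbi_equalize(received, taps):
    """Most likely bit sequence for a two-tap channel with inter-symbol interference.

    Each sample is modeled as taps[0]*bit[k] + taps[1]*bit[k-1], bits in {0, 1}.
    The bit preceding the first sample is assumed to be 0.
    """
    h0, h1 = taps
    # State = previous bit; cost[s] = lowest squared error of any path ending in s.
    cost = {0: 0.0, 1: float("inf")}
    paths = {0: [], 1: []}
    for r in received:
        new_cost, new_paths = {}, {}
        for bit in (0, 1):
            best = None
            for prev in (0, 1):
                expected = h0 * bit + h1 * prev
                c = cost[prev] + (r - expected) ** 2
                if best is None or c < best[0]:
                    best = (c, paths[prev] + [bit])
            new_cost[bit], new_paths[bit] = best
        cost, paths = new_cost, new_paths
    return paths[min(cost, key=cost.get)]

# Assumed channel: 40% of each pulse leaks into the following bit slot.
taps = (0.6, 0.4)
bits = [1, 0, 1, 1, 0, 0, 1, 0]
disturbance = [0.05, -0.1, 0.08, -0.05, 0.1, -0.08, 0.03, -0.02]

received, prev = [], 0
for b, n in zip(bits, disturbance):
    received.append(taps[0] * b + taps[1] * prev + n)
    prev = b

recovered = viterbi_equalize(received, taps)
print(recovered == bits)
```

The key design point is that the decision on each bit is deferred until the whole sequence has been scored, so energy that leaked into a neighboring slot counts as evidence rather than as noise.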

After more analysis, the first prototype ASIC chips for dispersion compensation at 10 Gbit/s were produced in 2004. However, their attraction was limited because those systems used direct detection, which loses phase and polarization information, limiting the prototype chips to correcting only two or three bit intervals of pulse spreading.3 Moreover, in-line compensation already allowed 10 Gbit/s systems to transmit a few thousand kilometers.

However, electronic technology was advancing rapidly, and electronic dispersion compensation was essential for the next step in channel speed, to 40 Gbit/s. Pulse spreading caused by chromatic dispersion increases with the square of the bit rate, so fiber that could transmit 10 km at 10 Gbit/s could send 40 Gbit/s only about 0.6 km. Moreover, electronic processing is a dynamic process that reacts to changes in PMD over time, unlike in-line dispersion control. That combination and the steady improvement in electronic processing tipped the scales toward electronic compensation at 40 Gbit/s.
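Taken literally, the square-law rule is easy to put into numbers. This sketch assumes a pure inverse-square dependence of dispersion-limited reach on bit rate and ignores modulation format and compensation; the function name and the 10-km reference reach are illustrative assumptions.

```python
def dispersion_limited_reach_km(ref_reach_km, ref_rate_gbps, new_rate_gbps):
    """Scale a dispersion-limited reach, assuming reach ~ 1 / (bit rate)^2."""
    return ref_reach_km * (ref_rate_gbps / new_rate_gbps) ** 2

# Going from 2.5 to 10 Gbit/s: a factor of four in rate costs a factor of 16.
tolerance_factor = (10.0 / 2.5) ** 2

# The same factor of 16 applies from 10 to 40 Gbit/s.
reach_40g_km = dispersion_limited_reach_km(10.0, 10.0, 40.0)
print(f"tolerance drops {tolerance_factor:.0f}x; reach at 40 Gbit/s ~ {reach_40g_km:.3f} km")
```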

Systems operating at 40 Gbit/s relied on direct detection, but instead of binary amplitude modulation used differential phase-shift keying (DPSK), with the receiver including an interferometer to detect a phase shift. One variation is differential quadrature phase-shift keying (DQPSK), a four-level code with 90-degree phase shifts. Another uses polarization modulation and phase-shift keying to create a multi-level code. These multi-level codings improved dispersion tolerance at 40 Gbit/s, but electronic pre-compensation at the transmitter and post-compensation at the receiver were needed to meet transmission distance requirements. By 2007, electronic dispersion compensation was being used in 40 Gbit/s systems and was replacing optical compensation at 10 Gbit/s.4 But the cutting edge had moved to 100 Gbit/s.
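The idea behind differential coding can be sketched in a few lines: information rides on the phase change between successive symbols, so the receiver needs only to compare each symbol with the previous one, which is the role the interferometer plays optically. The bit-pair-to-phase mapping below is an assumed Gray code chosen for illustration, not one taken from any particular system, and the function names are hypothetical.

```python
import cmath
import math

# Assumed mapping of bit pairs to phase steps, in units of 90 degrees.
STEP = {(0, 0): 0, (0, 1): 1, (1, 1): 2, (1, 0): 3}
PAIR = {step: pair for pair, step in STEP.items()}

def dqpsk_encode(pairs):
    """Return optical field samples; each bit pair advances the phase by k x 90 degrees."""
    phase = 0
    field = [cmath.exp(0j)]  # reference symbol carrying no data
    for pair in pairs:
        phase = (phase + STEP[pair]) % 4
        field.append(cmath.exp(1j * math.pi / 2 * phase))
    return field

def dqpsk_decode(field):
    """Recover bit pairs from the phase difference between neighboring symbols."""
    pairs = []
    for prev, cur in zip(field, field[1:]):
        dphi = cmath.phase(cur * prev.conjugate())
        pairs.append(PAIR[round(dphi / (math.pi / 2)) % 4])
    return pairs

data = [(0, 1), (1, 1), (0, 0), (1, 0), (1, 1)]
field = dqpsk_encode(data)

# An unknown constant phase offset (for example, laser phase) cancels out.
rotated = [s * cmath.exp(0.7j) for s in field]
print(dqpsk_decode(rotated) == data)
```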

The 100 Gbit/s challenge

Direct detection was not feasible at 100 Gbit/s, where chromatic dispersion had 100 times the impact it did at 10 Gbit/s, and PMD tolerance was only 1.5 ps rather than the 15 ps at 10 Gbit/s. Those problems forced a switch to coherent transmission using higher levels of phase-shift keying to squeeze more bits into the data stream.

Developers had tried coherent transmission in the 1980s, but abandoned it because they could not find a practical way to frequency-lock the local oscillator to the received carrier signal. By the mid-2000s, digital ASICs were capable of recovering the carrier from the received signal for use in a coherent receiver. Crucially, coherent receivers preserve phase and polarization information that enhances electronic dispersion compensation. Multiple A-D converters digitize the analog receiver output for digital dispersion compensation. Chromatic dispersion can be inverted and mitigated without penalty by linear digital filtering. Digital processing also can invert the differential group delay caused by PMD.5
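Why linear filtering can undo chromatic dispersion is easiest to see in the frequency domain: with the optical field available, dispersion acts as an all-pass filter whose phase grows with the square of frequency, so applying the opposite phase restores the signal. The sketch below assumes that standard quadratic-phase model, an arbitrary sign convention, a naive DFT, and illustrative parameters (17 ps/(nm·km), 1000 km, 56 Gsample/s); it is not the filter structure of any actual ASIC, and all names are hypothetical.

```python
import cmath
import math

C_M_PER_S = 299_792_458.0  # speed of light

def dft(x, inverse=False):
    """Naive discrete Fourier transform (adequate for short illustrative blocks)."""
    n = len(x)
    sign = 1.0 if inverse else -1.0
    out = []
    for m in range(n):
        acc = 0j
        for k in range(n):
            acc += x[k] * cmath.exp(sign * 2j * math.pi * m * k / n)
        out.append(acc / n if inverse else acc)
    return out

def dispersion_filter(samples, sample_rate_hz, d_ps_nm_km, length_km,
                      wavelength_nm=1550.0, invert=False):
    """Apply (or invert) the quadratic-phase, all-pass response of dispersive fiber."""
    n = len(samples)
    lam_m = wavelength_nm * 1e-9
    d_times_l = d_ps_nm_km * 1e-6 * length_km * 1e3  # accumulated dispersion, s/m
    direction = -1.0 if invert else 1.0
    spectrum = dft(samples)
    for m in range(n):
        # Signed baseband frequency of DFT bin m.
        f_hz = (m if m < n // 2 else m - n) * sample_rate_hz / n
        phi = math.pi * lam_m ** 2 * d_times_l * f_hz ** 2 / C_M_PER_S
        spectrum[m] *= cmath.exp(direction * 1j * phi)
    return dft(spectrum, inverse=True)

signal = [0j] * 64
signal[30] = signal[31] = 1 + 0j   # a single two-sample pulse
fs = 56e9                          # assumed sample rate

dispersed = dispersion_filter(signal, fs, 17.0, 1000.0)
restored = dispersion_filter(dispersed, fs, 17.0, 1000.0, invert=True)

distortion = max(abs(a - b) for a, b in zip(dispersed, signal))
residual = max(abs(a - b) for a, b in zip(restored, signal))
print(f"distortion before filtering: {distortion:.3f}, after: {residual:.1e}")
```

Because the filter only rotates phases, no signal energy is lost, which is the sense in which the correction comes without penalty. A direct-detection receiver cannot do this, since the phase needed for the inversion is discarded at the photodiode.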

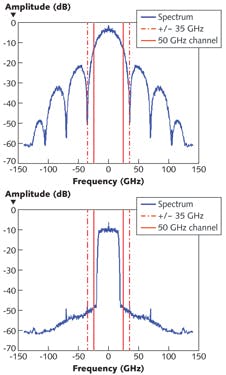

Coherent transmission at 100 Gbit/s also required other innovations. Spectral filtering of transmitter output, using powerful D-A converters, improves spectral efficiency, and together with the subcarrier modulation technique shown in Fig. 2 can squeeze 100 Gbit/s into a standard 50-GHz WDM optical channel. Powerful "soft decision" forward error correction can constrain bit error rate to the 10⁻¹⁵ or 10⁻¹⁶ levels required by carriers.
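Two quick calculations put those figures in perspective: the spectral efficiency implied by fitting 100 Gbit/s into a 50-GHz channel, and how rarely an error occurs at a bit error rate of 1e-15. This is back-of-envelope arithmetic only; the function names are hypothetical, and coding overhead is ignored.

```python
def spectral_efficiency_bps_per_hz(bit_rate_gbps, channel_width_ghz):
    """Bits per second carried per hertz of optical channel width."""
    return bit_rate_gbps / channel_width_ghz

def mean_seconds_between_errors(bit_rate_gbps, bit_error_rate):
    """Average time between bit errors at a given line rate and error rate."""
    return 1.0 / (bit_rate_gbps * 1e9 * bit_error_rate)

efficiency = spectral_efficiency_bps_per_hz(100.0, 50.0)
gap_s = mean_seconds_between_errors(100.0, 1e-15)
print(f"{efficiency:.1f} bit/s/Hz; one error roughly every {gap_s / 3600:.1f} hours")
```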

The first 100 Gbit/s coherent systems were deployed in 2009, and the ambitious technology has been a solid success. A key advantage for carriers is that electronic compensation makes the systems dispersion agnostic, so they can add 100 Gbit/s coherent systems to previously installed singlemode fiber cables without changing existing in-line dispersion compensation. The new coherent systems also can send traffic over new cables containing only standard singlemode fiber, with no in-line compensation.

Those added capabilities do not come for free. Development of a dedicated ASIC costs tens of millions of dollars, and the designs have to be updated as new chip geometries become available. That led the Optical Internetworking Forum (Fremont, CA) to develop standard specifications for modules like the 100 Gbit/s polarization-multiplexed QPSK (PM-QPSK) transceiver from Acacia Communications (Maynard, MA) shown in Fig. 3 along with flow charts.6

Outlook

Electronic dispersion compensation marks a new stage in the evolution of optical technology: the use of electronic technology to compensate for optical impairments. This hybrid approach plays to the strengths of both technologies, electronics for signal processing and optics for signal transmission.

That combination will become increasingly important as data rates continue to climb. At the OFC 2013 postdeadline session, a team from TE SubCom (Eatontown, NJ) reported transmitting a total of 106 channels at 200 Gbit/s, each through 10,290 km of fiber. By detecting pairs of 200 Gbit/s channels simultaneously in single wideband receivers, they transmitted 53 channels at 400 Gbit/s through 9200 km. In addition to compensating for dispersion, they used digital back-propagation to compensate for optical nonlinearities.7 Those are important steps toward higher speeds using "superchannels" that integrate coherent signals on multiple carriers across a broad band. In May 2013, a team from Bell Labs (Holmdel, NJ) reported using a powerful new technique called phase conjugation of twin waves to further reduce nonlinearities, transmitting a 400 Gbit/s superchannel a record 12,800 km.8 And in August 2013, Sprint (Overland Park, KS) and Ciena (Hanover, MD) reported field tests of 400 Gbit/s transmission on cables carrying live traffic in Silicon Valley.9 Those are impressive demonstrations of the power of the new hybrid approach.

REFERENCES

1. L. Möller et al., Electron. Lett., 35, 24, 2092–2093 (1999).

2. H. Bülow et al., Electron. Lett., 36, 2, 163–164 (Jan. 2000).

3. H. Bülow, F. Buchali, and A. Klekamp, J. Lightwave Technol., 26, 158 (Jan 1, 2008).

4. K. Roberts, "Electronic Dispersion Compensation Beyond 10 Gb/s," OFC, paper MA2.3 (2007).

5. K. Roberts, A. Borowiec, and C. Laperle, Opt. Fiber Technol., 17, 387–394 (2011); doi:10.1016/j.yofte.2011.06.007.

6. C. Rasmussen et al., "Real-time DSP for 100+ Gb/s," OFC, paper OW1E (2013).

7. H. Zhang et al., "200 Gb/s and Dual-Wavelength 400 Gb/s Transmission over Transpacific Distance at 6 b/s/Hz Spectral Efficiency," OFC, PDP5A.6 (2013).

8. X. Liu, A. R. Chraplyvy, P. J. Winzer, R. W. Tkach, and S. Chandrasekhar, Nat. Photon., doi:10.1038/nphoton.2013.109 (May 26, 2013).

9. S. Hardy, "Sprint, Ciena test 400G fiber-optic network link," Lightwave (Aug 2013); www.lightwaveonline.com/articles/2013/08/sprint-ciena-test-400g-fiber-optic-network-link.html.

About the Author

Jeff Hecht

Contributing Editor

Jeff Hecht is a regular contributing editor to Laser Focus World and has been covering the laser industry for 35 years. A prolific book author, Jeff's published works include “Understanding Fiber Optics,” “Understanding Lasers,” “The Laser Guidebook,” and “Beam Weapons: The Next Arms Race.” He also has written books on the histories of lasers and fiber optics, including “City of Light: The Story of Fiber Optics,” and “Beam: The Race to Make the Laser.” Find out more at jeffhecht.com.