In the early history of the laser, a common method of measuring peak power was to count the number of razor blades through which a pulse could burn. These units were called “Gillettes,” and they serve to illustrate how measuring the brightness of light, one of the most intuitive quantities for humans, has long been a problem for technology.

Measuring the instrument

Manufacturers of instruments that measure power and energy have taken pains to make them user-friendly (see Fig. 1). Complex optoelectronic measurements—such as bit-error rate, crosstalk ratio, and so on—can be obtained with a push of a button. But underlying all such complex relationships are fundamental measurements of energy, power, pulse duration, and wavelength. Understanding the physical details of these measurements is particularly essential at the boundaries of current technology—for ultrafast pulses, very high or low light levels, and very short or long wavelengths.

The capabilities of an instrument are specified by its resolution, repeatability, and accuracy. Resolution or sensitivity is the minimum quantity that can be discerned in a measurement. The noise present in an instrument during a measurement is a major factor limiting sensitivity. Repeatability quantifies how well an instrument can make one measurement, make a different one, and then return to repeat the first measurement. Accuracy is the ability of an instrument to make a measurement relative to an absolute standard.
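As a rough illustration of the difference between repeatability and accuracy, the sketch below treats repeatability as the sample standard deviation of repeated readings, and accuracy error as the offset of their mean from a reference value. Both conventions and the readings themselves are assumptions for illustration; formal instrument specifications may define these terms differently.

```python
from statistics import mean, stdev


def repeatability(readings: list[float]) -> float:
    """Spread of repeated readings: sample standard deviation (assumed convention)."""
    return stdev(readings)


def accuracy_error(readings: list[float], reference: float) -> float:
    """Offset of the mean reading from an absolute reference value."""
    return mean(readings) - reference


# Hypothetical readings (mW) of a source known to deliver 10.00 mW
readings = [10.21, 10.19, 10.22, 10.20, 10.18]
print(f"repeatability:  {repeatability(readings):.3f} mW")
print(f"accuracy error: {accuracy_error(readings, 10.00):+.3f} mW")
```

Here the readings agree with one another to within a few hundredths of a milliwatt but sit about 0.2 mW above the reference, so the meter is repeatable but in need of calibration.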

As a rule of thumb, an instrument's accuracy specification is about ten times larger (that is, looser) than its repeatability specification. Absolute accuracy is typically required for the most demanding applications. Scheduling routine calibration is particularly important for accurate measurement of optical power and energy, because these instruments are generally more prone to drift over time than other types.

Rookie mistakes

Making a power measurement is often a simple process: just place a photodetector head in the laser beam and read the power meter's digital display. This simplicity, however, commonly leads to novice mistakes. The sensitivity of the detector can easily vary by ±5% or more across its surface, and even minuscule reflections from the detector surface back into the laser can cause power fluctuations.

Another commonly overlooked factor is the effect of transmissive optics on some measurements. For low light levels or fast pulses, connecting cables must be kept short to avoid noise pickup and added capacitance, which slows dynamic response. Other instruments operating nearby can also cause spurious results, particularly when low signal levels are being measured.

Radiometry and photometry

Radiometry is the measurement of optical radiation at wavelengths between 10 nm and 1 mm. Photometry is the measurement of light that is detectable by the human eye, and is thus restricted to wavelengths from about 360 to 830 nm. Photometry has an added layer of complexity: measurements are weighted by the spectral response of human vision. Also, as a practical matter, displays and sources of illumination often have a spatial dependence not found in most nondiode laser sources.

Early measurements of light compared what the eye perceived against the artificial sources then available, such as the “standard candle.” Modern photometric units, used to characterize displays and illumination, are derived from this standard. The candela, the SI base unit of luminous intensity, is a fundamental unit on the same footing as the kilogram and the second.

Most optoelectronic measurements use radiometric units of power and energy familiar from electrical measurements: watts, joules, and so forth. The lumen is the photometric analog of the watt. At 555 nm, where the spectral response of the human eye is at its maximum (and the luminosity curve is normalized to unity), there are 683 lumens per watt. At other wavelengths, the conversion is scaled by the standard luminosity curve.
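For monochromatic light, the conversion described above reduces to multiplying the radiant power by 683 lm/W and by the value of the luminosity curve V(λ) at the source wavelength. This minimal sketch assumes V(λ) is looked up separately; the 650-nm value used in the example (about 0.107) comes from the CIE photopic luminosity table, and a broadband source would instead require integrating over its spectrum.

```python
# Maximum luminous efficacy of radiation, reached at 555 nm where V = 1
K_M_LM_PER_W = 683.0


def luminous_flux_lm(radiant_power_w: float, v_lambda: float) -> float:
    """Convert monochromatic radiant power (W) to luminous flux (lm).

    v_lambda is the standard luminosity curve V(lambda) evaluated at the
    source wavelength; it must be taken from a table.
    """
    if not 0.0 <= v_lambda <= 1.0:
        raise ValueError("V(lambda) must lie between 0 and 1")
    return K_M_LM_PER_W * v_lambda * radiant_power_w


# 1 mW at 555 nm, where V = 1
print(luminous_flux_lm(1e-3, 1.0))
# 1 mW at 650 nm, using V(650 nm) of about 0.107
print(luminous_flux_lm(1e-3, 0.107))
```

The same milliwatt of red light is thus roughly a tenth as "bright" to the eye as green light at 555 nm.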

The candela can be thought of as lumens per unit solid angle: one candela is one lumen per steradian. There exists a bewildering variety of photometric and radiometric quantities and associated units (Talbots, nits, and blondels, for example). Modern instruments can display whatever units best suit the application, reducing the burden on the user.
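Because one candela is one lumen per steradian, the luminous flux from a source of uniform intensity is simply the intensity multiplied by the solid angle it fills. The sketch below assumes uniform intensity over a cone and uses the standard expression Ω = 2π(1 − cos θ) for the solid angle of a cone of half-angle θ.

```python
import math


def cone_solid_angle_sr(half_angle_rad: float) -> float:
    """Solid angle (sr) of a right circular cone with the given half-angle."""
    return 2.0 * math.pi * (1.0 - math.cos(half_angle_rad))


def flux_lm(intensity_cd: float, solid_angle_sr: float) -> float:
    """Luminous flux (lm) from a uniform intensity (cd = lm/sr) over a solid angle."""
    return intensity_cd * solid_angle_sr


# An isotropic 1-cd source radiates into the full sphere (4*pi sr), about 12.57 lm
print(flux_lm(1.0, 4 * math.pi))
# The same intensity confined to a cone with a 10-degree half-angle
print(flux_lm(1.0, cone_solid_angle_sr(math.radians(10))))
```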

Communication applications such as telecom often use the decibel (dB) to characterize the attenuation of signal transmission. The decibel is a logarithmic ratio of powers:

dB = 10 log10(Psignal/Pref)

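Because attenuation gives a negative value, the minus sign is conventionally dropped and losses are quoted as positive numbers. When the reference is set to 1 mW, the signal is expressed in dBm (decibels relative to 1 mW). Decibels are useful because the logarithm turns the product of successive transmission factors into a sum, so system losses can simply be added.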
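The decibel arithmetic above can be sketched in a few lines. The launch power and the individual loss values below are hypothetical; the point is that losses quoted as positive decibel values are simply subtracted from a power expressed in dBm.

```python
import math


def to_db(p_signal: float, p_ref: float) -> float:
    """Power ratio in decibels: 10 * log10(Psignal / Pref)."""
    return 10.0 * math.log10(p_signal / p_ref)


def mw_to_dbm(p_mw: float) -> float:
    """Power in dBm, i.e. decibels relative to 1 mW."""
    return to_db(p_mw, 1.0)


def dbm_to_mw(p_dbm: float) -> float:
    return 10.0 ** (p_dbm / 10.0)


# Hypothetical link: 2 mW launched, then a chain of losses quoted as positive dB
launch_dbm = mw_to_dbm(2.0)              # about +3.01 dBm
losses_db = [0.5, 0.5, 7.0, 2.0]         # illustrative connector, fiber, and splice losses
received_dbm = launch_dbm - sum(losses_db)
print(f"received: {received_dbm:.2f} dBm = {dbm_to_mw(received_dbm):.3f} mW")
```

A total loss of 10 dB corresponds to a factor of 10 in power, so the 2 mW launched arrives as 0.2 mW.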

The qualities of detectors

Important detector qualities such as spectral response, rise time, and sensitivity differ not only between types but also between different detectors of the same type. These qualities are also influenced by the design of the overall measurement system, including component specification.

Several parameters are used to characterize detector performance. Quantum efficiency—the number of electrons generated per incident photon—is a fundamental parameter that underlies the performance of many types of detectors. Signal-to-noise ratio, which will be discussed in next month's article, is the ultimate figure of merit for a detector.
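Quantum efficiency is often quoted alongside responsivity, the photocurrent produced per watt of incident light. The two are related by the standard expression R = ηqλ/(hc), which is not given in the article itself; the sketch below applies it, with an 80% quantum efficiency at 1550 nm chosen purely as an example.

```python
# Exact SI defining constants
Q_E = 1.602176634e-19     # elementary charge, C
H = 6.62607015e-34        # Planck constant, J*s
C = 299_792_458.0         # speed of light, m/s


def responsivity_a_per_w(quantum_efficiency: float, wavelength_m: float) -> float:
    """Photocurrent per watt: electrons per photon times charge per photon energy."""
    photon_energy_j = H * C / wavelength_m
    return quantum_efficiency * Q_E / photon_energy_j


def photocurrent_a(power_w: float, quantum_efficiency: float, wavelength_m: float) -> float:
    return power_w * responsivity_a_per_w(quantum_efficiency, wavelength_m)


# Hypothetical detector with 80% quantum efficiency at 1550 nm
r = responsivity_a_per_w(0.80, 1550e-9)
print(f"responsivity: {r:.3f} A/W")
print(f"photocurrent for 1 mW: {photocurrent_a(1e-3, 0.80, 1550e-9) * 1e3:.3f} mA")
```

Because each photon carries less energy at longer wavelengths, the same quantum efficiency yields a higher responsivity in the infrared than in the visible.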

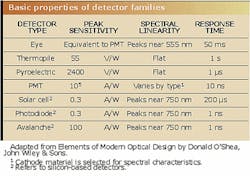

A discussion of the many types of detectors or of the details of any one design is beyond the scope of this series. Instead, we will summarize some of the properties of a few of the more basic devices (see table; for a review of detector technology, see the 2001 Laser Focus World series "Understanding Detectors," by Eric Lerner).

Thermal detectors

The first measurements of light were made using simple thermometers, and today thermal detectors are still the only practical way to measure some wavelengths and levels of energy. A thermopile consists of a heat-absorbing coated surface connected to a series of thermocouples. The thermocouple junctions alternate between a constant-temperature reference and the coating (the more thermocouples in series, the greater the sensitivity of the thermopile). The thermocouple voltage is calibrated with a known light source incident on the coating.
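The two ideas in this paragraph, that series thermocouples add their voltages and that the output is calibrated against a known source, can be sketched as follows. The thermocouple count, Seebeck coefficient, temperature rise, and calibration readings are all hypothetical, and the sketch assumes the voltage is proportional to absorbed power.

```python
def thermopile_voltage_v(n_thermocouples: int, seebeck_v_per_k: float,
                         delta_t_k: float) -> float:
    """Output of n series thermocouples, each spanning the same temperature difference."""
    return n_thermocouples * seebeck_v_per_k * delta_t_k


def calibrate_responsivity(v_cal: float, p_cal_w: float) -> float:
    """Responsivity (V/W) from a reading taken with a known calibration source."""
    return v_cal / p_cal_w


def power_from_voltage_w(v: float, responsivity_v_per_w: float) -> float:
    """Invert the calibration, assuming a linear detector."""
    return v / responsivity_v_per_w


# Doubling the number of thermocouples doubles the signal for the same heating
print(thermopile_voltage_v(50, 40e-6, 0.5), thermopile_voltage_v(100, 40e-6, 0.5))

# Calibration: a known 1.000-W source produces 2.4 mV (hypothetical)
resp = calibrate_responsivity(2.4e-3, 1.000)
print(f"unknown beam: {power_from_voltage_w(0.6e-3, resp):.3f} W")
```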

Given a coating whose absorption is uniform across the spectrum, the thermopile is an all-wavelength detector. It can measure anything from microwatts of continuous-wave power up to multijoule pulses, with excellent noise performance. The drawback of the thermopile is that it is very slow. The pyroelectric detector, on the other hand, is a fast, broad-spectrum instrument, although it is more expensive and complex.

The pyroelectric effect is the generation of a voltage when a ferroelectric crystal is heated. This voltage can drive a current proportional to the rate at which the crystal's temperature changes, which in turn depends on the power incident on the crystal. Because the signal vanishes once the crystal reaches equilibrium at its new temperature, the signal light must come from a pulsed source, or be chopped if the source is continuous.
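The need to pulse or chop the light can be seen in a simple simulation. The sketch below assumes a first-order thermal model, C·dT/dt = P − G·T, for the crystal's temperature rise T, and takes the pyroelectric current as i = p·A·dT/dt. The heat capacity C, thermal conductance G, pyroelectric coefficient p, and area A are illustrative values, not data for any particular device.

```python
def pyro_current_a(power_w, dt_s, heat_capacity_j_per_k, conductance_w_per_k,
                   pyro_coeff_c_per_m2k, area_m2):
    """Pyroelectric current for a sampled input-power waveform (forward Euler)."""
    temp_rise_k = 0.0
    currents = []
    for p_in in power_w:
        # Assumed first-order heat balance: C dT/dt = P - G T
        dT_dt = (p_in - conductance_w_per_k * temp_rise_k) / heat_capacity_j_per_k
        currents.append(pyro_coeff_c_per_m2k * area_m2 * dT_dt)
        temp_rise_k += dT_dt * dt_s
    return currents


DT = 1e-4                          # 0.1-ms time step
PARAMS = dict(heat_capacity_j_per_k=1e-5, conductance_w_per_k=1e-3,  # tau = C/G = 10 ms
              pyro_coeff_c_per_m2k=2e-4, area_m2=1e-6)

steps = 2000                       # 200 ms, about 20 thermal time constants
cw = [1e-3] * steps                # steady 1-mW beam
chopped = [1e-3 if (k // 100) % 2 == 0 else 0.0 for k in range(steps)]  # 50-Hz chop

i_cw = pyro_current_a(cw, DT, **PARAMS)
i_chop = pyro_current_a(chopped, DT, **PARAMS)
print(f"CW:      first {i_cw[0]:.2e} A, last {i_cw[-1]:.2e} A")
print(f"chopped: max {max(i_chop):.2e} A, min {min(i_chop):.2e} A")
```

Under steady illumination the current decays toward zero as the crystal settles at its new temperature, whereas the chopped beam keeps producing an alternating signal.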

Unlike many semiconductor-based detectors, thermal detectors do not need to be cooled to work in the infrared. Miniature versions of these detectors, such as microbolometers, are being tested in military imaging applications.

Photomultipliers

Photomultiplier tubes (PMTs) were the first detectors to generate electrons directly from photons. The photoemissive element in the tube is the cathode, which is coated with one or more of a variety of alkali compounds. The spectral response of a PMT is limited at longer wavelengths by the photoemissive material and at the blue end by the transmission of the tube's window.

Electrons emitted when photons strike the cathode are collected by a dynode, which emits two to four electrons for each electron captured. These in turn are captured by the next dynode stage, and a tube may include ten or more stages. The total amplification can exceed 10⁶, enabling a PMT to detect a single ultraviolet (UV) photon while keeping the noise level very low.
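The overall gain of a dynode chain grows geometrically: if each stage releases δ secondary electrons per incident electron and there are n stages, the gain is δⁿ. This sketch assumes every dynode has the same yield and that no electrons are lost between stages, which real tubes only approximate.

```python
E_CHARGE_C = 1.602176634e-19  # elementary charge, C


def pmt_gain(secondary_yield: float, n_stages: int) -> float:
    """Ideal gain of n identical dynode stages with perfect collection."""
    return secondary_yield ** n_stages


for delta in (2, 3, 4):
    g = pmt_gain(delta, 10)
    # Charge delivered to the anode for a single photoelectron
    print(f"yield {delta}, 10 stages: gain {g:,.0f}, charge {g * E_CHARGE_C:.2e} C")
```

With a yield of 4, ten stages give a gain just above 10⁶; with a yield of 2, about twenty stages would be needed for the same gain.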

In recent years the PMT has faced increasing competition from the avalanche photodiode (APD), particularly in the visible and infrared ranges. In response, PMT manufacturers have dramatically reduced the size and relative cost of their tubes. Photomultiplier tubes are now made that fit into a standard TO-8 can and require less than 15 V.

There are hundreds of different PMTs, with cathode materials and windows selected to cover portions of the visible and UV spectrum. This old technology still performs in cutting-edge applications; for example, PMTs are being used by SETI in its search for alien civilizations.

Photodiodes

The majority of power and energy meters use photodiode detectors. Photodiodes can be operated in either a photovoltaic mode, in which the unbiased diode produces a voltage when illuminated, or a photoconductive mode, in which the diode is reverse-biased and illumination produces a current. The photovoltaic mode is simpler, but the resulting detector is slow.

The photoconductive mode increases the speed of the photodiode, but it may introduce additional noise, such as shot noise from the dark current that flows under reverse bias. This illustrates a general difficulty in detector design: there is usually a tradeoff between speed and sensitivity. In the case of the photodiode, integrating the detector with a transimpedance amplifier strikes a good balance between the two.
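An ideal transimpedance amplifier converts the photocurrent to a voltage through its feedback resistor, V = −I·R_f. The sketch below pairs that with a commonly quoted first-order bandwidth estimate, f ≈ √(GBW / (2π·R_f·C_in)), where GBW is the op-amp gain-bandwidth product and C_in the total input capacitance. It is meant only to illustrate the speed-versus-sensitivity tradeoff; all component values are hypothetical.

```python
import math


def tia_output_v(optical_power_w: float, responsivity_a_per_w: float,
                 feedback_ohm: float) -> float:
    """Ideal inverting TIA: the photocurrent flows through R_f, so V_out = -I * R_f."""
    photocurrent_a = responsivity_a_per_w * optical_power_w
    return -photocurrent_a * feedback_ohm


def tia_bandwidth_hz(gbw_hz: float, feedback_ohm: float, input_cap_f: float) -> float:
    """Rough -3 dB bandwidth estimate for a suitably compensated TIA."""
    return math.sqrt(gbw_hz / (2.0 * math.pi * feedback_ohm * input_cap_f))


# Hypothetical: 1 uW on a 0.5 A/W photodiode, 100-MHz op amp, 10 pF at the input
for r_f in (10e3, 100e3, 1e6):
    v = tia_output_v(1e-6, 0.5, r_f)
    bw = tia_bandwidth_hz(100e6, r_f, 10e-12)
    print(f"R_f = {r_f:>9,.0f} ohm: V_out = {v:+.3f} V, bandwidth ~ {bw / 1e6:.1f} MHz")
```

Each tenfold increase in R_f raises the output tenfold but, under this estimate, cuts the bandwidth by about √10.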

As would be expected in semiconductor technology, there are highly sophisticated variations on the fundamental p-n junction design. As a simple example, the junction capacitance, which slows detector response, can be reduced by adding a layer of undoped, or intrinsic, material between the p and n layers to create a pin photodiode. All but the simplest photodiodes in use today are pin detectors.
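A rough way to see why the intrinsic layer helps is to treat the depleted region as a parallel-plate capacitor, C = ε₀ε_r·A/d: widening the depletion region d lowers C and raises the RC-limited bandwidth 1/(2πRC). The sketch below assumes silicon (ε_r ≈ 11.7), a 50-Ω load, and illustrative depletion widths. It ignores carrier transit time, which grows with a thicker intrinsic layer and limits how far this approach can be pushed.

```python
import math

EPS0_F_PER_M = 8.8541878128e-12   # vacuum permittivity
EPS_R_SILICON = 11.7              # approximate relative permittivity of silicon


def junction_capacitance_f(area_m2: float, depletion_width_m: float,
                           eps_r: float = EPS_R_SILICON) -> float:
    """Parallel-plate approximation of the junction capacitance."""
    return EPS0_F_PER_M * eps_r * area_m2 / depletion_width_m


def rc_bandwidth_hz(capacitance_f: float, load_ohm: float = 50.0) -> float:
    """-3 dB bandwidth of a single-pole RC response."""
    return 1.0 / (2.0 * math.pi * load_ohm * capacitance_f)


area = (1e-3) ** 2                # hypothetical 1 mm x 1 mm active area
for label, width in (("p-n, ~1 um depletion", 1e-6), ("pin, ~50 um intrinsic", 50e-6)):
    c = junction_capacitance_f(area, width)
    print(f"{label}: C = {c * 1e12:.1f} pF, RC bandwidth ~ {rc_bandwidth_hz(c) / 1e6:.0f} MHz")
```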

Other types of photodiodes

Photodiodes are made from different semiconductor alloys for sensitivity in different spectral regions. Germanium, for example, has a peak response in the near infrared, but it can be doped to produce detectors for wavelengths out to 40 μm. Photodiode detectors for longer wavelengths must be cooled to reduce noise.
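For intrinsic (undoped) photodiodes, the long-wavelength limit follows from the bandgap: a photon must carry at least the bandgap energy E_g, giving a cutoff of λ_c = hc/E_g, or about 1.24/E_g μm with E_g in electron volts. The bandgaps below are approximate room-temperature values. Doped germanium reaches much longer wavelengths because impurity levels, not the bandgap, set its threshold; this sketch does not model that case.

```python
HC_EV_UM = 1.23984198   # h*c expressed in eV * micrometers


def cutoff_wavelength_um(bandgap_ev: float) -> float:
    """Longest wavelength whose photons can bridge the bandgap."""
    return HC_EV_UM / bandgap_ev


# Approximate room-temperature bandgaps (eV)
for name, eg in (("Si", 1.12), ("Ge", 0.66), ("In0.53Ga0.47As", 0.75)):
    print(f"{name}: cutoff ~ {cutoff_wavelength_um(eg):.2f} um")
```

The InGaAs estimate of about 1.65 μm is consistent with the 1.7-μm upper limit cited below for telecom detectors.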

The germanium photon-drag detector can handle the high average power of carbon dioxide (CO2) laser measurements at 10.6 μm. In this design, incident photons transfer their momentum to free carriers in the germanium crystal to produce a current proportional to light power. This detector has low sensitivity but offers a faster response time than some competing pyroelectric instruments.

The material of choice for telecom detectors is indium gallium arsenide (InGaAs), which covers the spectrum from 900 nm to 1.7 μm. While more expensive than germanium devices, these detectors offer lower noise and higher speed. The fastest detectors for use in long-haul telecom applications currently are 60-GHz InGaAs small-area photodiodes packaged with single-mode optical fiber.

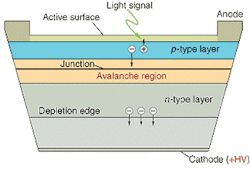

APDs provide high sensitivity and fast response in the infrared, and as a result this detector has become a workhorse in telecom applications, albeit at a premium price. Its operating principle is the semiconductor equivalent of the PMT's: a high bias voltage accelerates a photon-generated electron, which collides with the crystal lattice with enough energy to create additional electrons; these are in turn accelerated, resulting in a cascade effect and high gain (see Fig. 2).

Mating detectors and instruments

Detector performance is the most critical factor in measuring power and energy, especially at low signal levels. However, instrument performance also depends heavily on how the detector is integrated into the measurement system, and good integration can greatly extend the capabilities of the detector itself. For example, techniques exist for recovering accurate measurements even when the signal falls below the noise level.

Next month the Test and Measurement series will examine how detectors are integrated into measurement systems and will discuss techniques for recovering low levels of signal.

About the Author

Stephen J. Matthews

Contributing Editor

Stephen J. Matthews was a Contributing Editor for Laser Focus World.