Exploring image inpainting for seamless restitution

AYUSH DOGRA, BHAWNA GOYAL, APOORAV SHARMA, VINAY KUKREJA, and RENU VIG

Image inpainting has been an active area of research in computer vision for many years. It restores damaged regions of an image by extrapolating from the surrounding pixels, and it is applicable in numerous technical fields. The earliest work on inpainting dates back to the 1970s, when researchers used simple techniques such as nearest-neighbor interpolation to fill in missing pixels.

Understanding image inpainting

Image inpainting is an image processing approach that aims to provide a visually plausible restoration of missing regions of an image or video. It also aims to remove disfigurement caused by noise, strokes, and text on the image, and to accurately fill in the missing parts of an image (see video).1

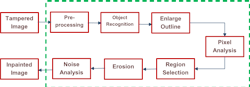

This method works either by using information from the surrounding pixels to predict what should be in the damaged region, creating a seamless restoration of the original image, or by replacing the damaged pixels with pixels similar to the adjacent ones, making them inconspicuous and helping them blend with the background. The basic functioning and processing steps of image inpainting are shown in Figure 1. The technique also restores old pictures that have damaged edges or stains. Several techniques exist for carrying this out digitally.
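The idea of predicting a damaged region from its surrounding pixels can be sketched with a very simple diffusion-style fill. This is only an illustration, not the method of any cited work: the function name `inpaint_by_neighbor_averaging`, the four-neighbor averaging rule, and the iteration count are all assumptions chosen for brevity.

```python
import numpy as np

def inpaint_by_neighbor_averaging(image, mask, iterations=200):
    """Fill masked pixels by repeatedly averaging their four neighbors.

    image: 2-D array (grayscale); mask: 2-D bool array, True = missing pixel.
    """
    result = image.astype(float).copy()
    result[mask] = result[~mask].mean()  # neutral starting guess for the hole
    for _ in range(iterations):
        padded = np.pad(result, 1, mode="edge")
        neighbor_mean = (
            padded[:-2, 1:-1] + padded[2:, 1:-1]      # pixels above and below
            + padded[1:-1, :-2] + padded[1:-1, 2:]    # pixels left and right
        ) / 4.0
        result[mask] = neighbor_mean[mask]  # known pixels are never modified
    return result

# Usage: damage the middle of a smooth horizontal gradient and restore it.
image = np.tile(np.linspace(0.0, 1.0, 16), (16, 1))
mask = np.zeros(image.shape, dtype=bool)
mask[6:10, 6:10] = True
restored = inpaint_by_neighbor_averaging(image, mask)
```

A fill of this kind propagates smooth intensity well but cannot recreate texture or sharp structure, which is why it is only suitable for small damaged regions.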

Assessing the effectiveness

To estimate the performance of inpainting techniques, numerous evaluation metrics are available. The peak signal-to-noise ratio (PSNR) quantifies the pixel-level difference between an original and an inpainted image; the structural similarity index (SSIM) takes into account the luminance, contrast, and structure of the images; and learned perceptual image patch similarity (LPIPS) measures the perceptual quality of the reconstruction. The mathematical foundations of PSNR and SSIM are as follows:

$$\mathrm{PSNR} = 10 \log_{10}\left(\frac{m^2}{\mathrm{MSE}}\right)$$

Here, ‘m’ is the maximum possible pixel value of the image (for example, 255 for an 8-bit image), and MSE is the mean squared error, computed as:

$$\mathrm{MSE} = \frac{1}{n} \sum_{i=1}^{n} \left(y_i - y'_i\right)^2$$

Here, y’ and y are the expected and real values of each observation, and ‘n’ is the total count of observations.
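The two formulas above can be sketched in a few lines of NumPy. The sketch assumes the usual convention in which ‘m’ is the maximum possible pixel value (255 for 8-bit images); the function names `mse` and `psnr` and the parameter `max_value` are illustrative choices.

```python
import numpy as np

def mse(original, inpainted):
    """Mean squared error over all pixels."""
    # Convert to float first so that uint8 subtraction cannot wrap around.
    diff = original.astype(float) - inpainted.astype(float)
    return float(np.mean(diff ** 2))

def psnr(original, inpainted, max_value=255.0):
    """Peak signal-to-noise ratio in decibels."""
    error = mse(original, inpainted)
    if error == 0:
        return float("inf")  # identical images
    return float(10.0 * np.log10(max_value ** 2 / error))

# Usage: a 4 x 4 image in which one pixel differs by 4 gray levels.
original = np.full((4, 4), 100, dtype=np.uint8)
inpainted = original.copy()
inpainted[0, 0] = 104
error = mse(original, inpainted)    # 16 / 16 = 1.0
quality = psnr(original, inpainted)
```

A higher PSNR means the inpainted image is closer to the original in terms of pixel values.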

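$$\mathrm{SSIM}(a, b) = \frac{(2\mu_a\mu_b + p_1)(2\sigma_{ab} + p_2)}{(\mu_a^2 + \mu_b^2 + p_1)(\sigma_a^2 + \sigma_b^2 + p_2)}$$

Here, µa and µb are the means of images a and b, σa and σb are the standard deviations of a and b, σab is the covariance of a and b, and p1 and p2 are small constants that keep the division stable.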
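As a rough illustration, the SSIM expression can be evaluated once over two whole images. This is a simplification: practical SSIM implementations usually compute the expression over local windows and average the results. The function name `global_ssim` and the choice of constants as p1 = (0.01 m)^2 and p2 = (0.03 m)^2, with m the maximum pixel value, are assumptions based on common practice rather than on this article.

```python
import numpy as np

def global_ssim(a, b, max_value=255.0, k1=0.01, k2=0.03):
    """Single-window SSIM computed over two whole grayscale images."""
    a = a.astype(float)
    b = b.astype(float)
    p1 = (k1 * max_value) ** 2  # stabilizes the luminance term
    p2 = (k2 * max_value) ** 2  # stabilizes the contrast/structure term
    mu_a, mu_b = a.mean(), b.mean()
    var_a, var_b = a.var(), b.var()             # sigma_a^2 and sigma_b^2
    cov_ab = ((a - mu_a) * (b - mu_b)).mean()   # sigma_ab
    numerator = (2 * mu_a * mu_b + p1) * (2 * cov_ab + p2)
    denominator = (mu_a ** 2 + mu_b ** 2 + p1) * (var_a + var_b + p2)
    return float(numerator / denominator)

# Usage: compare an image with itself and with a vertically flipped copy.
reference = np.arange(64).reshape(8, 8)
flipped = reference[::-1]
same_score = global_ssim(reference, reference)
flipped_score = global_ssim(reference, flipped)
```

An image compared with itself scores 1; structural changes such as the flip lower the score even though the mean and variance are unchanged.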

Other metrics, such as mean absolute error (MAE), inception score (IS), and Fréchet inception distance (FID), can also be used to assess the quality of the inpainted image, depending on the requirement and relevance.

Versatility of image inpainting across various domains

Image inpainting applies to numerous industries, such as medical imaging, film restoration, video game development, forensic science, and photo and video editing. In the film industry, it is used to restore damaged frames of old films or to remove superfluous objects from scenes. Similarly, in video games, it creates seamless game environments by filling in missing parts of textures or backgrounds. It has a wide range of technical applications in diverse fields, and new applications continue to be explored.

In computer vision, images may contain unwanted objects, marks, or people that need to be removed. Image inpainting can remove them by filling in the affected regions with the surrounding texture (see Fig. 2). It can also help enhance the resolution of low-quality images and reconstruct corrupted images.

In robotics, one of the major applications of image inpainting is visual odometry, which estimates a robot’s motion by analyzing changes in the visual input from a camera mounted on the robot. This input can be corrupted by occlusions or motion blur; inpainting can correct such corruption and so support accurate estimation of the robot’s motion. It also helps improve the accuracy of 3D reconstruction, object detection, navigation, and tracking.

Image inpainting supports medical imaging: it can recover damaged portions of medical images such as X-rays, CT scans, MRIs, and PET scans, which can help in the accurate diagnosis and treatment of medical conditions. It removes artifacts caused by medical equipment and fills in missing regions in the images. Its uses in medical imaging include:

- Image restoration: Medical images can be degraded by factors such as noise and artifacts caused by incomplete acquisition. Inpainting can help restore such images by filling in missing information and removing noise.

- Lesion segmentation: Lesions are difficult to segment because of their irregular shapes and sizes. Inpainting can fill those regions to separate them from the surrounding tissue, making them easier to segment.

- Data augmentation: This process is used to increase the size of a dataset and improve the generalization ability of machine learning models. Inpainting is used here to generate synthetic images by discarding certain parts of an actual image and filling them in again.

- Visualization: Image inpainting generates realistic visualizations of medical images by reconstructing and filling in parts of an image; these can be used in surgical planning as well as for patient education.
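The data augmentation item above relies on discarding parts of a real image. A minimal sketch of that first step, producing a damaged image together with its mask, might look as follows. The square hole, the zero fill value, and the name `make_masked_sample` are assumptions made for illustration; the filling step itself would be carried out by whichever inpainting method is in use.

```python
import numpy as np

def make_masked_sample(image, hole_size, rng):
    """Return a copy of `image` with a random square region discarded.

    The boolean mask marks the discarded pixels (True = missing).
    """
    height, width = image.shape[:2]
    top = int(rng.integers(0, height - hole_size + 1))
    left = int(rng.integers(0, width - hole_size + 1))
    mask = np.zeros((height, width), dtype=bool)
    mask[top:top + hole_size, left:left + hole_size] = True
    masked = image.copy()
    masked[mask] = 0  # discarded pixels are set to zero
    return masked, mask

# Usage: generate one damaged sample from a synthetic 32 x 32 "scan".
rng = np.random.default_rng(0)
scan = rng.random((32, 32)) + 1.0  # strictly positive intensities
masked_scan, hole = make_masked_sample(scan, hole_size=8, rng=rng)
```

Calling the function repeatedly on the same image yields many different damaged variants, each paired with a mask that tells the inpainting method where to fill.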

Forensics and criminal investigations use image inpainting to recreate broken and distorted images, including fingerprints, footprints, and other categories of evidence. For instance, if a suspect in a criminal investigation tries to blur out their face in a surveillance video, image inpainting can be used to reconstruct the face based on the surrounding pixels. This can help investigators identify the suspect and bring them to justice. Moreover, it restores degraded images from crime scenes that may have been deliberately altered or damaged, providing valuable evidence in criminal investigations.

In the entertainment industry, image inpainting is used to develop special effects, remove wires and other objects that are visible in a scene, and change the appearance of actors and sets in a video shoot. It can also be used to remove blemishes or unnecessary elements such as logos, text, objects, or people from a video clip, which enhances the quality of videos for purposes such as advertising.

Alongside these advantages, image inpainting also faces some challenges, such as complicated inputs, large damaged areas, and a lack of diversity in the results. Various deep learning strategies provide solutions to these problems. Accordingly, these algorithms are classified into structure-based, attention-based, convolution-based, and pluralistic inpainting.3

The latest advances in image inpainting

More sophisticated methods, such as diffusion-based methods, were introduced to handle complex scenarios such as large holes and irregular boundaries. Texture synthesis aims to recover the missing area by creating a texture from a given sample, as shown in Figure 3, in such a way that the produced texture is larger than the source sample and has a similar visual appearance.5 Image inpainting received a major boost with the introduction of exemplar-based inpainting, which uses a patch-based approach to fill in the missing regions of an image.
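The patch-based idea behind exemplar-based inpainting can be illustrated with a deliberately simplified sketch: find the fully known patch whose border best matches the border around the hole, then copy its interior into the hole. Published exemplar-based methods fill the hole progressively, patch by patch, in a priority order; the single-copy strategy, the sum-of-squared-differences cost, and the name `fill_hole_with_best_patch` are assumptions made here for brevity.

```python
import numpy as np

def fill_hole_with_best_patch(image, mask, margin=2):
    """Fill one hole by copying from the best-matching fully known patch.

    image: 2-D array; mask: 2-D bool array, True = missing pixel.
    """
    rows, cols = np.nonzero(mask)
    # Window = bounding box of the hole plus a margin of known context.
    top = max(rows.min() - margin, 0)
    bottom = min(rows.max() + margin + 1, image.shape[0])
    left = max(cols.min() - margin, 0)
    right = min(cols.max() + margin + 1, image.shape[1])
    h, w = bottom - top, right - left
    target = image[top:bottom, left:right].astype(float)
    known = ~mask[top:bottom, left:right]

    best_cost, best_patch = np.inf, None
    for r in range(image.shape[0] - h + 1):
        for c in range(image.shape[1] - w + 1):
            if mask[r:r + h, c:c + w].any():
                continue  # a source patch must not contain missing pixels
            candidate = image[r:r + h, c:c + w].astype(float)
            # Compare only where the target window has known pixels.
            cost = np.sum((candidate - target)[known] ** 2)
            if cost < best_cost:
                best_cost, best_patch = cost, candidate
    if best_patch is None:
        raise ValueError("no fully known source patch of the required size")

    result = image.astype(float).copy()
    window = result[top:bottom, left:right]  # view into `result`
    window[~known] = best_patch[~known]      # copy only the missing pixels
    return result

# Usage: a texture that repeats every 4 pixels, with a 2 x 2 hole.
texture = np.add.outer((np.arange(16) % 4) * 4, np.arange(16) % 4).astype(float)
hole = np.zeros(texture.shape, dtype=bool)
hole[5:7, 5:7] = True
damaged = texture.copy()
damaged[hole] = 0
restored = fill_hole_with_best_patch(damaged, hole)
```

Because the copied pixels come from the image itself, repeating texture is preserved, which a purely diffusion-based fill cannot do.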

There have also been advancements in using semantic information, that is, information about the content of the image such as object boundaries and semantic labels, to guide the inpainting process. Using this information, the network can better understand the structure of the image and generate more accurate inpainting results. This approach has mainly been applied to object removal and background inpainting.5

Convolution-based inpainting uses a mask to designate the affected portions of the image and to manage the flow of information across different portions.4 In a damaged photo, not every pixel carries useful information. Traditional convolutions, which operate on the actual pixels and on the substitute values in the damaged region alike, therefore sometimes lead to issues such as color inconsistency and blurriness. One of the most promising recent advancements in image inpainting is the use of generative adversarial networks (GANs), deep learning models that can generate highly realistic images that are difficult to distinguish from real ones.6
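One way to picture a mask that controls the flow of information is a mask-aware convolution in the style usually called partial convolution. The article does not name a specific formulation, so this choice is an assumption, as are the function name `partial_conv2d` and the single-channel, bias-free setup. Each output is computed from known pixels only and rescaled by the fraction of the window that was known, and the mask shrinks after every layer.

```python
import numpy as np

def partial_conv2d(image, valid, kernel):
    """Mask-aware convolution on a single-channel image.

    image: 2-D float array; valid: 2-D bool array, True = known pixel;
    kernel: square 2-D array with odd side length.
    Returns the filtered image and the updated validity mask.
    """
    k = kernel.shape[0]
    pad = k // 2
    img = np.pad(image * valid, pad)         # missing pixels contribute zero
    val = np.pad(valid.astype(float), pad)   # padding counts as missing
    out = np.zeros(image.shape, dtype=float)
    new_valid = np.zeros(image.shape, dtype=bool)
    for r in range(image.shape[0]):
        for c in range(image.shape[1]):
            count = val[r:r + k, c:c + k].sum()
            if count > 0:
                window = img[r:r + k, c:c + k]
                # Rescale by (window size / number of known pixels).
                out[r, c] = np.sum(kernel * window) * (k * k / count)
                new_valid[r, c] = True       # at least one known input pixel
    return out, new_valid

# Usage: a constant image with a 3 x 3 hole and an averaging kernel.
flat = np.full((8, 8), 5.0)
valid = np.ones(flat.shape, dtype=bool)
valid[2:5, 2:5] = False
averaging = np.full((3, 3), 1.0 / 9.0)
filtered, valid_after = partial_conv2d(flat, valid, averaging)
```

In this example the rescaling keeps every computed output at the true value of 5, whereas an ordinary convolution over the zero-filled hole would darken the pixels near it; only the hole's center pixel, whose window contains no known pixels, remains invalid after one pass.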

Conclusion

Image inpainting is a fascinating and rapidly evolving field that has undergone significant advancements over the years, with broad applications in various industries. Since the early interpolation-based work, several techniques have been proposed, including patch-based methods, texture synthesis, and deep learning-based approaches. As the demand for high-quality inpainting continues to grow, researchers are exploring new and innovative methods to improve the accuracy and efficiency of the process. Deep learning approaches, in particular, have shown tremendous promise and are likely to play a significant role in the future of image inpainting.

REFERENCES

1. H. Xiang et al., Pattern Recognit., 134, 109046 (2023).

2. M. A. Qureshi et al., J. Vis. Commun. Image Represent., 49, 177–191 (2017).

3. A. Thakur and S. Paul, “Introduction to image inpainting with deep learning,” GitHub repository (2020).

4. B. Furht (ed.), Encyclopedia of Multimedia, Springer Science & Business Media (2008).

5. C. Guillemot and O. Le Meur, IEEE Signal Process. Mag., 31, 1, 127–144 (2013).

6. S. Shete, S. Srinivasan, and T. A. Gonsalves, Plant Phenom., 2020, 8309605 (Aug. 3, 2020); doi:10.34133/2020/8309605.

About the Author

Ayush Dogra

Assistant Professor - Senior Grade, Chitkara University Institute of Engineering and Technology

Dr. Ayush Dogra is an assistant professor (research) - senior grade at Chitkara University Institute of Engineering and Technology (Chitkara University; Punjab, India).

Bhawna Goyal

Assistant Professor, UCRD and ECE departments at Chandigarh University

Dr. Bhawna Goyal is an assistant professor in the UCRD and ECE department at Chandigarh University (Punjab, India), and in the Faculty of Engineering at Sohar University (Sohar, Oman).

Apoorav Maulik Sharma

Research Fellow, Department of Electronics & Communication Engineering (ECE)

Apoorav Maulik Sharma is a research fellow in the Department of Electronics & Communication Engineering (ECE) in the University Institute of Engineering & Technology (UIET) at Panjab University (Chandigarh, India).

Vinay Kukreja

Professor, Chitkara University Institute of Engineering and Technology, Chitkara University

Vinay Kukreja is a professor at Chitkara University Institute of Engineering and Technology (Chitkara University; Punjab, India).

Renu Vig

Professor, Department of Electronics & Communication Engineering (ECE)

Renu Vig is a professor in the Department of Electronics & Communication Engineering (ECE) in the University Institute of Engineering & Technology (UIET) at Panjab University (Chandigarh, India).

![FIGURE 2. The damaged image (left) and inpainted image after applying an image inpainting algorithm (right) [1].](https://img.laserfocusworld.com/files/base/ebm/lfw/image/2023/06/Dogra_fig2.6499b20325f99.png?auto=format,compress&fit=max&q=45?w=250&width=250)

![FIGURE 3. Object removal (a), mask and inpainting results (b), a diffusion-based approach (c), an exemplar-based method (d), patch sparse representation (e), and hybrid with one global energy minimization (f) patch offsets are shown [5].](https://img.laserfocusworld.com/files/base/ebm/lfw/image/2023/06/Dogra_fig3.6499b20347639.png?auto=format,compress&fit=max&q=45?w=250&width=250)