Long-wavelength Raman becomes rugged

The terms “dispersive” and “long wavelength” are no longer oxymoronic for describing Raman spectrometers. Low-cost components have made possible rugged, affordable near-infrared Raman instruments–which prove their mettle in food contamination testing.

By Eric Bergles, Brian Harrison, and William Yang

Biomedical and analytical professionals have long recognized the potential of long-wavelength Raman devices for applications such as laboratory analysis, hospital-bedside testing, and portable field monitoring. Until now, however, Raman spectrometers based on stability-enabling dispersive technology could not operate at wavelengths beyond 810 nm. Practical components and technologies were simply unavailable. Further, Raman instruments were traditionally too big, too expensive, and too fragile for real-world use. And they were so sophisticated that highly trained operators were required to run them.

Advances in high-volume optical-telecommunications device manufacturing, however, have recently enabled new rugged, affordable devices–and applications such as rapid detection of melamine contamination.

Analytical chemists and vibrational spectroscopists know that although Raman spectroscopy is “color-blind” in terms of excitation laser wavelengths vs. Raman shifts, special attention must be given to the choice of an excitation laser. The laser wavelength (and power) must be matched to the target sample, and trade-offs such as laser availability, Raman detection sensitivity, sample damage, and fluorescence avoidance must be considered. Excitation wavelengths in the near-infrared, such as 1064 nm, offer many advantages for Raman measurement of highly fluorescent samples, especially biological samples such as tissue or skin. In both in vivo and in vitro methods, long wavelengths can reduce or eliminate fluorescence interference.
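The sensitivity side of this trade-off can be sketched numerically. The snippet below is a minimal illustration, assuming the commonly cited approximation that Raman scattering efficiency scales as the inverse fourth power of the excitation wavelength; it ignores detector response, resonance effects, and fluorescence background. The function name and the 532 and 785 nm comparison wavelengths are illustrative choices, not values from the instruments discussed here.

```python
def relative_raman_intensity(excitation_nm: float, reference_nm: float) -> float:
    """Scattering efficiency at excitation_nm relative to reference_nm.

    Assumes the approximate 1/lambda^4 dependence of Raman scattering;
    detector response and sample effects are deliberately ignored.
    """
    if excitation_nm <= 0 or reference_nm <= 0:
        raise ValueError("wavelengths must be positive")
    return (reference_nm / excitation_nm) ** 4


if __name__ == "__main__":
    # Compare a few excitation wavelengths against a 785 nm reference
    # (the reference wavelength is an assumption made for illustration).
    for wavelength_nm in (532.0, 785.0, 1064.0):
        ratio = relative_raman_intensity(wavelength_nm, 785.0)
        print(f"{wavelength_nm:6.0f} nm: {ratio:.2f}x the signal at 785 nm")
```

Under this assumption, moving from 785 to 1064 nm costs roughly a factor of three in raw signal, which is the price paid for the reduced fluorescence interference.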

The need for long-wavelength Raman has generally been filled by Fourier-transform Raman (FT-Raman) devices, which have moving parts, are large, and are cumbersome to operate. Often they require cryogenic cooling of the photodetectors. Fourier-transform technology is based on a Michelson interferometer, which is susceptible to vibration or shock, especially when the reference mirror is moving. Fourier-transform instruments therefore typically have a relatively tight specification for base motion and acoustical vibration, which makes them less stable than a dispersive technology with no moving parts. Technologies developed during the telecom boom are now enabling detectors that overcome these limitations.

Enabling advances

During the past decade, technologies used in wavelength-division multiplexing (WDM) have enabled significant advances in devices used in other areas, especially for components in the wavelength range from 1000 to 1700 nm. From light sources to detection devices, the advancements enabled both improved reliability and lower cost. Four technology areas have made possible the miniaturization of longer-wave Raman spectral sensors: mini lasers and compact narrow and broadband light sources; holographic optical elements; low-cost, sensitive solid-state optoelectronics; and cheaper, faster computer chips.

Particularly useful for spectroscopy are transmission holographic volume-phase gratings; linear-array InGaAs image sensors; miniature lasers at 1064 nm; and solid-state computer chips that control the instrument and process and store its data. Telecom-grade components are now assembled into ultracompact, reliability-tested spectral engines with no moving parts that can be battery operated and packaged in a handheld form factor.

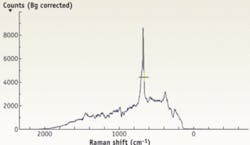

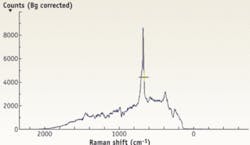

This type of product solves the motion and vibration issues of FT-Raman, and enables practical longer-wavelength excitation measurements. An example is BaySpec’s supercooled Nunavut dispersive Raman spectrograph, based on a transmissive volume-phase grating (VPG) in conjunction with a deep thermoelectrically (TE) cooled InGaAs detector array. The instrument works at wavelengths from 1000 to 1700 nm (and can be extended to cover 850 to 2000 nm), or a range of up to 3200 cm⁻¹ in wavenumbers.
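The relationship between a detector's wavelength window and the Raman shift range it can cover follows from simple wavenumber arithmetic. The sketch below assumes 1064 nm excitation (the laser wavelength mentioned earlier; the excitation wavelength of this particular instrument is not stated here) and uses hypothetical helper names.

```python
def shift_to_wavelength_nm(excitation_nm: float, shift_cm1: float) -> float:
    """Absolute wavelength (nm) of Stokes-scattered light for a given Raman shift."""
    excitation_cm1 = 1e7 / excitation_nm      # 1 cm = 1e7 nm
    scattered_cm1 = excitation_cm1 - shift_cm1
    if scattered_cm1 <= 0:
        raise ValueError("shift exceeds the excitation energy")
    return 1e7 / scattered_cm1


def wavelength_to_shift_cm1(excitation_nm: float, scattered_nm: float) -> float:
    """Raman shift (cm^-1) corresponding to a detected wavelength."""
    return 1e7 / excitation_nm - 1e7 / scattered_nm


if __name__ == "__main__":
    EXCITATION_NM = 1064.0  # assumed excitation wavelength
    print(f"3200 cm^-1 shift lands at {shift_to_wavelength_nm(EXCITATION_NM, 3200.0):.1f} nm")
    print(f"1700 nm detector edge equals {wavelength_to_shift_cm1(EXCITATION_NM, 1700.0):.0f} cm^-1")
```

With these assumptions, a 3200 cm⁻¹ shift falls near 1613 nm, inside a detector window that ends at 1700 nm (about 3516 cm⁻¹).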

Detecting melamine quickly, affordably

The recent discovery of melamine in the food supply presents particular challenges for health officials. Melamine’s presence can result from numerous sources beyond direct adulteration of human food stocks, and potential sources increase dramatically as you move further down the food chain. So once contamination is detected at the human consumption level, tracing it back to the origin can be difficult, laborious, and time-consuming. The most important detection point, however, is the level just before human consumption. Detection at this point requires a technique that is fast, accurate, cost-effective, consistent, and repeatable.

In the much-publicized recent case of milk contamination in China, it appeared that samples were watered down and melamine was added to mimic protein levels of unadulterated milk. Melamine levels were found to be in the hundreds to thousands of parts per million (ppm), which clearly rules out inadvertent contamination lower in the food chain. The deaths in that case resulted from melamine combining with uric acid to form kidney stones. To date, the USDA has not set official concentration limits for melamine exposure in food for children, but the agency has set a maximum allowable level for adults of 2.5 ppm.

Because of regional transportation challenges, much of China’s milk supply is in powder form. BaySpec’s Nunavut Raman system enabled detection of melamine concentration down to 3 ppm in powdered whole milk. Melamine diluted in water was also measured down to 0.1 ppm. Testing of melamine can be done in the field and takes less than 10 seconds.

For the first time, an affordable, accurate, and ruggedized spectral device in longer-wavelength excitation is helping to fulfill the promise of portable Raman spectroscopy.

ERIC BERGLES is vice president of sales and marketing, BRIAN HARRISON is senior account manager, and WILLIAM YANG is principal technologist at BaySpec, 101 Hammond Ave., Fremont, CA 94539, www.bayspec.com.

Spectroscopy basics

Four characteristics determine the capability of a spectrometer: spectral range, frequency bandwidth, spectral sampling, and signal-to-noise ratio (S/N). The spectral range should cover enough diagnostic spectral absorption features to solve a desired problem, and a spectrometer must measure the frequency bandwidth with enough precision to record details in the spectrum. While the visible range has been primarily used, near-infrared spectra are increasingly being considered for higher-intensity first-overtone and combination regions.

Spectral sampling involves collection set-up components such as fiber-optic probes and cuvette holders. Sampling is closely related to the spectrometer design for optical throughput in low-light situations.

The S/N required to solve a particular problem will depend on the strength of the spectral features under study. The S/N is dependent on detector sensitivity, spectral bandwidth, and intensity of the light reflected or emitted from the surface being measured. While a few spectral features are quite strong and an S/N of only about 10 is necessary to identify them, for weaker features an S/N of several hundred (and higher) may be needed.
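To make these S/N figures concrete, the following sketch shows one common way to estimate S/N from a recorded spectrum (peak height above baseline divided by the standard deviation of a signal-free baseline region) and how many co-added scans would be needed to raise it. It assumes uncorrelated noise, so that S/N improves with the square root of the number of scans; the function names and the sample data are hypothetical.

```python
import math
import statistics


def estimate_snr(spectrum, peak_index, baseline):
    """Peak height above the mean baseline, divided by the baseline noise.

    `baseline` is a slice selecting a region assumed to contain no features.
    """
    region = spectrum[baseline]
    noise = statistics.stdev(region)
    if noise == 0:
        raise ValueError("baseline region has zero noise")
    return (spectrum[peak_index] - statistics.mean(region)) / noise


def scans_needed(current_snr, target_snr):
    """Co-added scans required to reach target_snr.

    Assumes uncorrelated noise, so S/N grows as sqrt(number of scans).
    """
    if current_snr <= 0 or target_snr <= 0:
        raise ValueError("S/N values must be positive")
    return max(1, math.ceil((target_snr / current_snr) ** 2))


if __name__ == "__main__":
    # Synthetic example: alternating +/-1 baseline noise and one strong peak.
    spectrum = [1.0, -1.0] * 10 + [100.0]
    print(f"Estimated S/N: {estimate_snr(spectrum, 20, slice(0, 20)):.1f}")
    print(f"Scans to go from S/N 10 to 300: {scans_needed(10, 300)}")
```

Under the square-root assumption, raising S/N from 10 to 300 takes 900 co-added scans, which illustrates why detector sensitivity and optical throughput matter so much for weak features.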

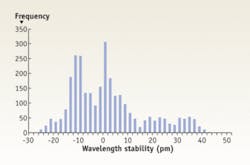

Repeatability is important for all spectrometers, and because portable and handheld devices don’t have the benefit of being attached to a PC for continual recalibration, they must be especially robust. Thanks to lot-to-lot consistency methods learned from high-volume manufacturing, compact spectrometers are able to offer effective repeatability.