How AI and other microscopy advances answer complex life science questions

The potential for artificial intelligence (AI) to greatly improve microscopy became evident to the wider microscopy community a few years ago with the advent of content-aware image restoration, a technology that uses deep learning to generate high-quality images from lower-quality images. Now, AI is evolving rapidly to offer researchers even more advanced imaging and analysis capabilities that promise not only to save time and improve the accuracy of their work, but also to enable experiments that were previously impossible to conduct.

Two developments stand out. The first is multiplexed imaging coupled with AI-based algorithms for cell mapping. The second is autonomous microscopy, an AI-based workflow for confocal microscopy that can quickly segment and classify cells into groups and automate the detection of rare events (see Fig. 1). These capabilities can greatly enhance research on cell morphology, tumor microenvironments, and more. Moreover, the insights derived from AI-enabled microscopy are poised to improve drug discovery.

Promising developments

Multiplexed imaging can help oncology researchers characterize the tumor microenvironment, which can unlock new insights into cell-cell interactions and the behavior of immune cells. A 2021 study presented at the Society for Immunotherapy of Cancer (SITC) annual meeting demonstrated the power of this approach in analyzing pancreatic ductal adenocarcinoma.1 The researchers combined multiplexed imaging with single-cell analysis to probe the tumor microenvironment. This allowed them to zero in on biomarker expression in the most aggressive regions of the tumor samples and to spatially define the contribution of the extracellular matrix, inflammatory cells, and cancer-associated fibroblasts.

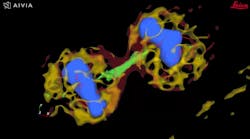

The advent of autonomous microscopy should simplify research centered on cell division, which is critical in several disciplines, including cancer biology, immunology, neurodegenerative diseases, and stem cell biology (see Fig. 2). The challenge in detecting dividing cells is that only a small fraction of cells (as few as 1%) are dividing at any given moment. In the past, researchers therefore had to image large samples at high resolution and then use offline software to find and count the dividing cells.

With autonomous microscopy, researchers can now take low-magnification scans of tissue samples and use AI to quickly locate the dividing cells. That information can then be looped back to the microscope, which can zero in on the exact locations of the dividing cells and produce high-resolution images (see Fig. 3). This approach improves the efficiency of the experiments, saving researchers significant time in finding and imaging the most relevant cells.
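
The feedback step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Detection` record, the `select_targets` function, the confidence threshold, and the coordinates are all hypothetical and do not represent any vendor's software or API.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """One candidate dividing cell found in the low-magnification overview (hypothetical)."""
    x_um: float   # stage x position in micrometers
    y_um: float   # stage y position in micrometers
    score: float  # classifier confidence that the cell is dividing


def select_targets(detections, min_score=0.8, max_targets=50):
    """Keep confident detections, highest score first, capped at max_targets."""
    confident = [d for d in detections if d.score >= min_score]
    confident.sort(key=lambda d: d.score, reverse=True)
    return [(d.x_um, d.y_um) for d in confident[:max_targets]]


# Made-up detections from an overview scan, for illustration only.
overview = [
    Detection(120.0, 45.5, 0.97),
    Detection(300.2, 80.1, 0.42),
    Detection(512.7, 210.0, 0.88),
]

targets = select_targets(overview)
for x, y in targets:
    # In a real system this position would be sent back to the microscope stage.
    print(f"queue high-resolution acquisition at ({x:.1f}, {y:.1f}) um")
```

The point of the sketch is the division of labor: the overview scan is cheap, the classifier filters it, and only the surviving positions are revisited at high resolution.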

Drug discovery research can also benefit from autonomous microscopy in experiments using multi-well plates. A researcher can set up the microscope to initially image every well on that plate and focus its imaging based on the results. As the experiment proceeds and the drug affects specific cells, the autonomous microscope can automatically produce high-resolution images of only those cells of interest.

For example, suppose a researcher is using a 96-well plate to test a drug designed to return cancer cells to a near-normal phenotype. If 94 of the wells contain cells that still display the cancerous phenotype after treatment, an autonomous microscope could be set up to ignore them. If the cells in the other two wells become normalized by the treatment, the microscope can image them at high resolution and yield valuable information, such as which proteins expressed in the cells are affected by the drug.
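
As a rough sketch of that selection logic, the Python below picks out the wells worth imaging at high resolution. The per-well "normalized fraction" score, the 0.5 threshold, and the well positions are assumptions made up for illustration; a real workflow would derive such a score from its own image analysis.

```python
import string


def well_names(rows=8, cols=12):
    """Names of a standard 96-well plate, A1 through H12, in row-major order."""
    return [f"{r}{c}" for r in string.ascii_uppercase[:rows] for c in range(1, cols + 1)]


def wells_to_image(normalized_fraction, threshold=0.5):
    """Return wells whose fraction of normalized cells meets the threshold."""
    return [well for well, frac in normalized_fraction.items() if frac >= threshold]


# Hypothetical survey result: 94 wells stay cancerous, two respond to the drug.
fractions = {name: 0.02 for name in well_names()}
fractions["C7"] = 0.81
fractions["F3"] = 0.64

selected = wells_to_image(fractions)
print(selected)  # ['C7', 'F3']
```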

Simplifying 3D reconstruction of brain cells

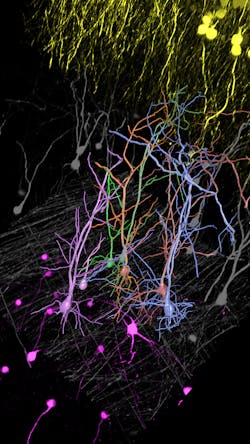

Autonomous microscopy could revolutionize the imaging of brain tissue. The brain contains approximately 171 billion cells (of which about 86 billion are neurons) that are densely packed into approximately 1,260 cm³. Typically, researchers image the brain with fluorescence microscopy, selectively labeling a small percentage of neurons, which makes the labeled neurons appear to hang in space.

Researchers want to trace and fully reconstruct the shape of the visible neurons to better understand how information is processed, stored, and retrieved. This is technically challenging, and automated tracing often leaves errors that researchers must correct manually, which is both impractical and time-consuming.

Autonomous microscopy with advanced AI can be customized to perform accurate soma detection in neurological tissue, allowing researchers to automatically trace the dendrites and assign them to the correct neurons. Autonomous microscopy also helps researchers reconstruct 3D representations of the neurons, allowing them to observe whether the dendrites are straight or branch off and make connections with other neurons (see Fig. 4). They can also measure how far the neurons are from each other or from reference points (e.g., a blood vessel or a cluster of glial cells).
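
Once soma positions are available in 3D, the distance measurements mentioned above reduce to simple geometry. The sketch below uses straight-line (Euclidean) distance and hypothetical coordinates in micrometers; the names `somas`, `vessel_points`, and `nearest_distance` are illustrative, and a real analysis might instead measure distance along traced dendrites or to a segmented vessel surface.

```python
import math


def nearest_distance(point, references):
    """Straight-line distance from a point to the closest reference point."""
    return min(math.dist(point, ref) for ref in references)


# Hypothetical soma centers (x, y, z) in micrometers.
somas = {
    "n1": (0.0, 0.0, 0.0),
    "n2": (3.0, 4.0, 0.0),
    "n3": (3.0, 4.0, 12.0),
}
# Hypothetical sample points along a blood vessel.
vessel_points = [(0.0, 0.0, 5.0), (10.0, 0.0, 0.0)]

print(math.dist(somas["n1"], somas["n2"]))  # 5.0
for name, center in somas.items():
    print(name, round(nearest_distance(center, vessel_points), 2))
```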

Why does this matter? Most neurological diseases are characterized by both a molecular disturbance and an associated morphological abnormality. The neuronal morphology and cell count in the brain of an Alzheimer’s patient are significantly different from those of a healthy person. That’s why studying cell counts, the morphology of individual neurons, and neuronal circuits can provide valuable insights.

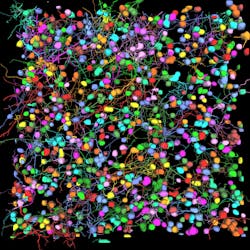

AI-powered image analysis can greatly streamline the imaging of dense neuronal networks. For example, Leica estimates that its Aivia 12 AI-powered image analysis improves soma detection by up to 46% and reduces time to result by as much as 75%. In a study presented at the 2019 joint meeting of the American Society for Cell Biology (ASCB), researchers showed that fully automated 3D neuron reconstruction can trace 395 neurons and 815 dendrites, with a total path length of 325,541 µm, in 48 minutes (see Fig. 5).2 The researchers estimated that a manual process would take about 12 days to produce the same data set.
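
To put the 48-minute and 12-day figures side by side, the short calculation below converts both to minutes. The article does not say whether "12 days" means round-the-clock time or working days, so both readings are shown; the 24-hour and 8-hour values are assumptions, not figures from the study.

```python
def speedup(manual_days, automated_minutes, hours_per_day):
    """Ratio of manual time to automated time, with both expressed in minutes."""
    manual_minutes = manual_days * hours_per_day * 60
    return manual_minutes / automated_minutes


# Assumption: 12 calendar days of continuous work.
print(speedup(12, 48, hours_per_day=24))  # 360.0
# Assumption: 12 working days of 8 hours each.
print(speedup(12, 48, hours_per_day=8))   # 120.0
```

Under either reading, the automated reconstruction is faster by roughly two orders of magnitude.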

Expanding microscopy’s role in R&D

AI-enabled microscopy will also improve the efficiency of drug discovery and development for neurological diseases. For example, autonomous microscopy powered by AI could be used to create phenotypes of normal brain tissue and diseased tissue from mouse models. Then, researchers could treat the mice with different drugs. If they were to see that the phenotype of the diseased animal is starting to normalize, meaning the images show that it’s becoming more like the normal phenotype, they would know the drug is making an impact and should be studied further.

Despite these advances, we have only scratched the surface of AI’s full potential in imaging. With emerging tools such as large language models and generative AI, it may someday be possible to train AI algorithms that learn from microscope reference manuals. Then, whenever researchers want to run new types of experiments, they wouldn’t need to ask a technician how to do it. They could just ask the microscope. The microscope could also tell them whether the experiments they’re planning have already been done by other researchers, and it could suggest the research protocols best suited to their goals.

It sounds futuristic, but with the advances in AI that have already enhanced microscopy, it’s entirely within reach. Indeed, AI is turning the microscope from an imaging tool into a full research partner.

REFERENCES

1. M. J. Smith et al., J. Immunother. Cancer (2022); doi:10.1136/jitc-2022-sitc2022.0002.

2. See https://tinyurl.com/2p869fz8.

About the Author

Luciano Lucas

Director for Data & Analysis, Leica Microsystems

Luciano Lucas is director for data and analysis at Leica Microsystems (Lisbon, Portugal).